Confluent Certification Exams Overview

Getting started with Confluent's certification program

Real talk here.

If you're working with Apache Kafka or planning to, Confluent Certification Exams are basically the gold standard for proving you actually know what you're doing. Not just that you skimmed some documentation and can throw around technical terms at meetings. These aren't just paper credentials. They're industry-recognized validations that you can design, build, deploy, and manage real Kafka-based streaming applications and infrastructure without everything catching fire.

Confluent was founded by the original creators of Apache Kafka back in 2014, which honestly gives their certification program a legitimacy that random third-party certs just can't match. You're learning from the people who literally invented the technology, not some training company that decided Kafka was profitable in 2023. They launched their certification program a few years later, and it's changed a lot as Kafka itself has matured from this niche LinkedIn project into the backbone of real-time data streaming across basically every major industry you can think of.

Two tracks that cover different parts of the Kafka ecosystem

The certification space here is pretty straightforward. You've got two primary tracks: the Confluent Certified Developer for Apache Kafka (CCDAK) and the Confluent Certified Administrator for Apache Kafka (CCAAK). Not gonna lie, the naming is a mouthful. But the distinction makes sense once you understand who they're targeting.

CCDAK is for the folks building stuff. Software developers, application developers, data engineers, streaming engineers. If you're writing code that produces or consumes Kafka messages, working with schemas, building stream processing applications, or designing event-driven architectures, this is your exam. CCAAK targets the infrastructure side: system administrators, DevOps engineers, platform engineers, SREs. You're managing clusters, configuring security, monitoring performance, troubleshooting production issues at 2 AM when everything's on fire and Slack's blowing up.

Why these certifications actually matter right now

Here's the thing about 2026. Real-time data streaming isn't some future trend anymore. It's everywhere, it's happening right now, and companies that aren't adapting are getting left behind by competitors who figured this out three years ago. Financial services need millisecond-level transaction processing. E-commerce platforms track user behavior in real time. IoT deployments generate millions of events per second. The demand for people who really understand Kafka architecture and can implement it correctly is absolutely exploding.

Many companies now list Confluent certifications in job postings, and not just as nice-to-haves. When you're hiring for a senior platform engineer role and you've got 200 applications, certified candidates immediately stand out because at minimum they've demonstrated they understand topics like partition rebalancing, consumer group coordination, and exactly-once semantics beyond buzzword level. Okay, that's not entirely true, because some people still cram and pass without deep understanding, but generally the pattern holds. My cousin took CCDAK last year after working with Kafka for maybe three months total, passed on his second try, and still had to call me twice last month to explain why his consumer offsets kept resetting. So the cert proves something, just not everything.

Certification validity and keeping credentials current

Confluent certifications are valid for two years from your pass date, which honestly feels about right given how fast the streaming data space moves. You'll need to recertify to maintain your credential, though the renewal process has gotten more streamlined than the initial exam. It usually involves completing continuing education requirements or retaking an updated version of the exam. Policies through 2026 are expected to stay similar, though they're adding more modular recertification paths, so check the current policy before your expiry date.

Taking the exams from anywhere

Both exams are online proctored.

That means you can take them from your home office in pajama pants as long as your upper half looks professional on camera and you've cleared your desk of everything the proctor might flag as suspicious. They're available worldwide with multiple language options, though I'd recommend taking it in whatever language you do your actual Kafka work in because technical terminology gets weird in translation.

Proving you actually passed

Once you pass, you get verified through Credly (or Accredible, depending on when you certified), which gives you a digital badge you can throw on LinkedIn, your resume, your email signature if you're that person. Employers can verify it's legit with a single click, which cuts down on the resume fraud that unfortunately happens with tech certifications.

How Confluent certs stack up against alternatives

There are other Kafka-related credentials floating around: various training companies offer their own certificates, and cloud providers have streaming certifications that touch on Kafka. But the thing is, Confluent's exams are different because they cover open-source Apache Kafka broadly enough to stay vendor-neutral while also testing Confluent Platform and Confluent Cloud specifics. You're learning the actual product from the people who built it, not some third-party interpretation.

Experience matters more than memorization

Here's what separates people who pass on the first try from those who don't. Hands-on experience. You can memorize every configuration parameter in the Kafka documentation, study flashcards until your eyes bleed, watch every tutorial video on YouTube twice, but if you've never actually debugged why a consumer group is stuck in rebalancing hell or figured out why your exactly-once producer is throwing weird transaction errors, you're gonna struggle with the scenario-based questions. Both exams test practical application hard, not just book knowledge.

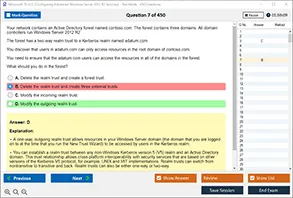

What to expect on exam day

Format-wise, you're looking at multiple-choice questions, many of them scenario-based problems where they describe a situation and ask you to identify the best solution. Time limits vary by exam, but generally you'll have 90 minutes for CCDAK and similar for CCAAK. Passing scores hover around 70%, though Confluent doesn't publish exact numbers. Questions pull from real-world situations: designing a producer for reliability requirements, troubleshooting cluster performance issues, configuring security policies.

Prerequisites and experience recommendations

Technically there are no mandatory prerequisites.

You could theoretically sign up tomorrow and take either certification without anyone stopping you or checking your background. But Confluent recommends 6-12 months of hands-on Kafka experience for CCDAK and similar for CCAAK, and honestly that's not them being overly cautious. These exams assume you've worked with Confluent Platform, Confluent Cloud, or open-source Apache Kafka in actual production or production-like environments where real consequences happen when you configure something wrong.

Confluent Kafka Certification Path and Career Roadmap

what these certs actually prove

Hiring shorthand. That's it, honestly. Confluent Certification Exams tell a manager you've actually worked with Apache Kafka the way real teams do, not just spun up a broker once and slapped "experience" on your resume. The focus differs wildly, though. Different day-to-day realities.

Developer angle? You're building producers, consumers, Kafka Streams apps, wrestling with schemas and delivery guarantees while product people won't stop changing requirements. Admin angle means you're living in cluster land where upgrades, security, monitoring, quotas, partitions, and incidents become your normal Tuesday. Messy stuff. Real.

The Confluent Kafka certification path works like a ladder if you squint: entry-level knowledge, then role proof, then "I can own the platform" credibility that makes people actually listen in architecture meetings. Confluent won't hand you an "expert" badge for free. Stacking certs on top of real work is the closest thing to the expert progression everyone pretends exists.

who should pursue them

Kafka on your team's roadmap? Already in production? You're a candidate.

Backend devs, SREs, data engineers, platform engineers. Even career changers wanting to pivot into data streaming without waiting years for "enough experience."

Universities and bootcamps are baking these in now, usually as capstone goals after a Kafka module, because it forces hands-on work and gives students something concrete for resumes beyond vague bullet points. Corporate training programs do the same, often with employer-sponsored vouchers and a "pass it this quarter" push tied to platform adoption timelines.

role-based paths that actually map to jobs

Clean split here. Developer Path versus Administrator Path, with a Hybrid Path for people who get dragged into everything (you know who you are).

Developer Path: the CCDAK exam targets app developers writing producers/consumers and Kafka Streams apps, where details like consumer group behavior and retry patterns matter, not just "send message, receive message" toy examples.

Administrator Path: the CCAAK exam is foundational for ops and infrastructure people running clusters and keeping them healthy through configs, ACLs, metrics, and failure modes that crop up at the worst times.

Hybrid Path: get both certs. Platform engineers and tech leads get taken way more seriously when they can discuss app patterns and explain why the cluster settings exist. Honestly it helps during incidents when everyone's tired and pointing fingers.

the order debate (CCAAK first vs CCDAK first)

Which Confluent certification should I take first: CCAAK or CCDAK? Depends on your daily work. The thing is, there's zero moral victory in picking the "harder" one first if it doesn't match what you actually do.

Arguments for CCAAK first: you learn infrastructure foundation before writing fancy stream processing code, and you stop making painful assumptions about partitions, replication, retention, quotas, and security that blow up later in prod when latency spikes and the on-call person starts muttering your name while checking logs.

Arguments for CCDAK first: if you're already writing code daily, CCDAK gives faster time-to-value because you can immediately fix how you produce, consume, serialize, handle errors, and test. The career impact shows up in your next PR review way before you ever touch a broker config file.

roadmap by experience level

Beginner (0 to 1 years): start with foundational Kafka knowledge before attempting either certification, seriously. Set up a local cluster, produce/consume messages, learn partitions and offsets, then add Schema Registry basics. Small wins matter. No rush here.
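If partitions and offsets still feel abstract, it can help to model them before touching a broker. Here's a toy, in-memory sketch (the `ToyTopic` class is hypothetical, not a Kafka API) showing the one rule to internalize: offsets are per partition, start at 0, and only ever grow.

```python
class ToyTopic:
    """Hypothetical in-memory stand-in for a Kafka topic: one append-only list per partition."""

    def __init__(self, num_partitions: int):
        self.partitions: list[list[str]] = [[] for _ in range(num_partitions)]

    def append(self, partition: int, value: str) -> int:
        """Append a record and return its offset within that partition."""
        log = self.partitions[partition]
        log.append(value)
        return len(log) - 1

    def read_from(self, partition: int, offset: int, max_records: int = 10) -> list[tuple[int, str]]:
        """Return (offset, value) pairs starting at `offset`, roughly like a consumer fetch."""
        log = self.partitions[partition]
        end = min(offset + max_records, len(log))
        return [(o, log[o]) for o in range(offset, end)]


topic = ToyTopic(num_partitions=3)
print(topic.append(0, "signup"))    # 0
print(topic.append(0, "login"))     # 1
print(topic.append(1, "checkout"))  # 0: partition 1 has its own offset sequence
print(topic.read_from(0, 1))        # [(1, 'login')]
```

A real consumer tracks the next offset to read and commits it back to Kafka; the toy just makes the numbering visible.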

Intermediate (1 to 3 years): choose based on current responsibilities, not aspirations. Building apps daily? Go CCDAK (Confluent Certified Developer for Apache Kafka Certification Examination). On-call for clusters or working in SRE? Go CCAAK (Confluent Certified Administrator for Apache Kafka Certification Examination). Half-and-half? Pick whichever side hurts more at work right now.

Advanced (3+ years): dual certification is the move for leadership roles, platform ownership, or streaming architecture work. It also helps when you're mentoring others, because you can explain both the "why" and the "how" without hand-waving through uncomfortable questions.

Career changers: don't start with exam panic, I mean it. Build a small streaming project first, like CDC into Kafka then a consumer writing to a datastore, and document it properly. Then pick a path that makes sense. Most career changers do better starting admin-first if they're coming from IT ops, or developer-first if they're coming from backend engineering.

CCDAK details that matter

Real talk. The CCDAK exam, aka Confluent Certified Developer for Apache Kafka, targets core Kafka developer skills: producers, consumers, delivery semantics, serialization, Schema Registry usage, Kafka Streams concepts, and reliability patterns. You're expected to know what happens when things fail. Not theoretical fluff, but practical survival skills.

Study resources for CCDAK that actually help: build a sandbox, write a producer with idempotence enabled, write consumers with manual commits, add retries and DLQ behavior, and test rebalances by scaling instances up and down until it feels natural. Then read client configs until they stop feeling like alphabet soup. For the official details, see the CCDAK exam page.
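The reason idempotence matters: a retry after a lost ack shouldn't write the record twice. Kafka handles this with a producer ID plus per-partition sequence numbers that the broker checks. The sketch below is a deliberately simplified model of that check (the real broker tracks sequence ranges for the last few in-flight batches, not single records), and `ToyPartition` is a hypothetical name.

```python
class ToyPartition:
    """Simplified model of broker-side duplicate detection for idempotent producers."""

    def __init__(self) -> None:
        self.records: list[str] = []
        self.last_seq: dict[int, int] = {}  # producer_id -> last accepted sequence number

    def append(self, producer_id: int, seq: int, value: str) -> str:
        last = self.last_seq.get(producer_id, -1)
        if seq <= last:
            # Already written: acknowledge the retry without appending again.
            return "duplicate"
        if seq != last + 1:
            # A gap means something went missing in between; the real client surfaces an error.
            raise ValueError(f"out-of-order sequence {seq}, expected {last + 1}")
        self.records.append(value)
        self.last_seq[producer_id] = seq
        return "appended"


p = ToyPartition()
print(p.append(producer_id=7, seq=0, value="order-1"))  # appended
print(p.append(producer_id=7, seq=0, value="order-1"))  # duplicate (retry after a lost ack)
print(p.records)                                        # ['order-1']
```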

On difficulty, CCDAK feels medium for strong devs, but it gets spicy if you've never debugged consumer lag or dealt with schema evolution under pressure during an actual outage. Expect 2 to 4 weeks of prep if you're already coding Kafka weekly, more like 6 to 8 if you're rusty or switching contexts.

Quick tangent: I've watched people cram for this in a weekend and then completely bomb the consumer rebalance questions. Don't be that person. The exam committee clearly expects you to have seen what a rolling deployment does to your consumer groups, not just read about it in docs. Live fire matters here.

CCAAK details that matter

The CCAAK exam, aka Confluent Certified Administrator for Apache Kafka, covers Kafka administrator territory: cluster architecture, replication, ISR behavior, security (ACLs, auth), monitoring, tuning, operations, and troubleshooting. You need to think like the person who gets paged at 2 a.m. because brokers are flapping and producers are timing out while everyone panics.

Study plan: run a multi-broker setup, break it on purpose (honestly, this is the fun part), practice diagnosing under-replicated partitions, check controller behavior, tune retention policies, and work with security configs until they make sense. Also practice reading metrics. Lots of them, until patterns emerge. The official reference point is the CCAAK exam page.
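Diagnosing under-replicated partitions boils down to comparing each partition's replica list with its in-sync replica (ISR) list, which is what `kafka-topics.sh --describe --under-replicated-partitions` surfaces for you. A minimal sketch of that comparison, assuming you've already pulled a metadata snapshot into a dict (the input shape and helper name are hypothetical):

```python
Metadata = dict[tuple[str, int], tuple[list[int], list[int]]]  # (topic, partition) -> (replicas, isr)

def under_replicated(snapshot: Metadata) -> dict[tuple[str, int], list[int]]:
    """Map each under-replicated (topic, partition) to the broker IDs missing from its ISR."""
    result = {}
    for tp, (replicas, isr) in snapshot.items():
        missing = sorted(set(replicas) - set(isr))
        if missing:
            result[tp] = missing
    return result


snapshot = {
    ("orders", 0): ([1, 2, 3], [1, 2, 3]),
    ("orders", 1): ([2, 3, 1], [2, 1]),
    ("payments", 0): ([3, 1, 2], [1, 2]),
}
print(under_replicated(snapshot))  # {('orders', 1): [3], ('payments', 0): [3]}
```

When the same broker ID shows up as missing across many partitions, like broker 3 here, suspect that broker (disk, GC pauses, network) before suspecting the topics.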

Difficulty ranking: for pure ops folks, CCAAK feels fair and reasonable. For developers who rarely touch infra, it can feel tougher than CCDAK because the surface area is wide and the failure modes are weird in ways that don't match application-level thinking.

CCDAK vs CCAAK in real job terms

What are the main differences between CCDAK and CCAAK job roles? CCDAK is about building and maintaining streaming apps that deliver features. CCAAK is about running the streaming platform that keeps everything alive. CCDAK vs CCAAK is basically "feature delivery" versus "platform reliability," though good teams blur that line constantly.

portfolio stacking and continuing education

Do Confluent certifications increase salary and career opportunities? Usually yes, but the Confluent certification salary bump depends heavily on region and role context. The bigger win is credibility plus mobility. I've seen backend devs pivot into streaming architecture and platform engineering just by pairing CCDAK with real project stories and a cloud cert that makes recruiters happy.

Good combos: AWS/Azure/GCP certs for managed Kafka and networking basics, data engineering certs for pipelines, DevOps certs for CI/CD and observability. That's a certification portfolio that makes sense on paper and in interviews, not just resume decoration.

Renewal strategy and continuing education matter too. The thing is, Kafka changes constantly and Confluent features evolve faster than docs update. Stay current by following release notes. Join Kafka meetups. Go to conferences when you can. Contribute fixes or docs to open-source if that's your thing. Specialize next: Kafka Connect, ksqlDB, Schema Registry patterns. Those are the skills that turn "passed an exam" into "can lead the project" credibility.

CCDAK - Confluent Certified Developer for Apache Kafka Certification Examination

What you're actually getting into with CCDAK

The CCDAK exam? It's where things get real for Kafka development, honestly. We're talking 60 multiple-choice questions crammed into 90 minutes, which sounds generous until you realize some questions have these sprawling scenario descriptions that eat up your time like crazy. Way more than you'd expect if you're used to quick-fire quiz formats where each prompt stays under two lines. You need roughly 70% to pass, though Confluent keeps the exact number close to their chest.

Look, if you're a Java developer, Python developer, or working with Scala, this is your certification. Polyglot engineers love this one too because it's less about language specifics and more about understanding how Kafka clients behave regardless of your stack. I mean, you still need to know the API patterns, but it's not like they're asking you to write actual code during the exam.

Registration is straightforward. Kinda bureaucratic though. Create your Confluent account first. Then schedule through either PSI or Kryterion. Your choice, both work fine. Exam fee sits around $150 USD, which honestly isn't terrible compared to cloud certs that charge double.

Remote proctoring setup (it's stricter than you think)

Not gonna lie, the proctoring requirements catch people off guard in ways that seem almost petty until you're sitting there sweating because your desk has a coffee mug they might flag as suspicious. You need a webcam that actually works, stable internet that won't drop mid-exam, and a completely clear desk. They're serious about the clean workspace thing. I've heard stories of people getting flagged for having a water bottle in view.

Photo ID verification happens before you start, and someone's watching your screen the entire time. Also your face, which feels intrusive but that's the deal. No second monitors allowed either, which trips up developers used to multi-screen setups. My colleague once spent fifteen minutes arguing with the proctor about whether his lamp counted as "suspicious equipment" before they finally let him proceed. Sometimes the human element in these remote setups creates more problems than the actual exam.

Application design makes up 28% of your exam day

This domain covers event-driven application architecture and choosing the right Kafka clients for your use case. Sounds basic but gets nuanced fast when you're evaluating trade-offs between different client libraries that all claim better performance. The producer design patterns section gets deep. You'll face questions about synchronous versus asynchronous sends, and when each makes sense. Batching strategies matter here. Idempotence configuration shows up constantly because it's core to exactly-once semantics, which everyone claims they need but half don't actually implement correctly.
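Idempotence only holds if the rest of the producer config cooperates. As far as I know, the Java client requires `acks=all`, `retries` greater than 0, and `max.in.flight.requests.per.connection` of 5 or less when `enable.idempotence=true`, and since Kafka 3.0 those are the defaults. Here's a small hypothetical checker you could run against a config map before it ships; double-check the rules against the docs for your client version.

```python
# Assumed defaults, matching the Java producer in Kafka 3.x.
DEFAULTS = {
    "enable.idempotence": "true",
    "acks": "all",
    "retries": "2147483647",
    "max.in.flight.requests.per.connection": "5",
}

def idempotence_problems(overrides: dict[str, str]) -> list[str]:
    """Return reasons why this producer config would not give idempotent writes."""
    cfg = {**DEFAULTS, **overrides}
    problems = []
    if cfg["enable.idempotence"].lower() != "true":
        problems.append("enable.idempotence is disabled")
    if cfg["acks"] not in ("all", "-1"):
        problems.append("acks must be 'all' (or -1)")
    if int(cfg["retries"]) <= 0:
        problems.append("retries must be greater than 0")
    if int(cfg["max.in.flight.requests.per.connection"]) > 5:
        problems.append("max.in.flight.requests.per.connection must be 5 or less")
    return problems


print(idempotence_problems({}))             # []
print(idempotence_problems({"acks": "1"}))  # ["acks must be 'all' (or -1)"]
```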

Consumer design? That's where things get interesting. Consumer groups seem simple until you're dealing with partition assignment strategies and trying to figure out why your rebalancing takes forever. Offset management questions are tricky. There's manual commits, automatic commits, and then the whole mess of what happens during failures.
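Partition assignment strategies are easier to reason about once you've done one by hand. This sketch follows range-style logic for a single topic, as I understand Kafka's `RangeAssignor`: sort members, give each `partitions // members` partitions, and hand the remainder to the first few. It's a simplified model (one topic, plain member IDs as the sort key), not the client implementation.

```python
def range_assign(members: list[str], num_partitions: int) -> dict[str, list[int]]:
    """Range-style assignment of one topic's partitions across a consumer group."""
    ordered = sorted(members)
    base, extra = divmod(num_partitions, len(ordered))
    assignment: dict[str, list[int]] = {}
    start = 0
    for i, member in enumerate(ordered):
        count = base + (1 if i < extra else 0)  # the first `extra` members get one more
        assignment[member] = list(range(start, start + count))
        start += count
    return assignment


print(range_assign(["c2", "c1", "c3"], 7))
# {'c1': [0, 1, 2], 'c2': [3, 4], 'c3': [5, 6]}
print(range_assign(["c1", "c2", "c3", "c4"], 3))
# {'c1': [0], 'c2': [1], 'c3': [2], 'c4': []}  (c4 sits idle)
```

The second call is the classic exam trap: adding consumers beyond the partition count doesn't add parallelism, it just adds idle members.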

Topic design best practices sound boring but this section determines if you actually understand Kafka operationally. How many partitions? Depends on throughput requirements, consumer parallelism needs, and honestly your tolerance for operational complexity. Replication factors and retention policies round out this domain.
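For the "how many partitions?" question, a commonly cited rule of thumb is to measure per-partition throughput for your producer and your consumer separately, then take whichever demands more partitions. A sketch of that arithmetic; the throughput numbers in the example are made up, so plug in your own benchmarks:

```python
import math

def min_partitions(target_mb_s: float, producer_mb_s_per_partition: float,
                   consumer_mb_s_per_partition: float) -> int:
    """Smallest partition count that lets both producers and consumers hit the target rate."""
    for_producers = math.ceil(target_mb_s / producer_mb_s_per_partition)
    for_consumers = math.ceil(target_mb_s / consumer_mb_s_per_partition)
    return max(for_producers, for_consumers)


# Hypothetical measurements: 100 MB/s target, 20 MB/s per partition produced, 10 MB/s consumed.
print(min_partitions(100, 20, 10))  # 10: the slower consumer side sets the floor
```

In practice you'd add headroom for growth, since increasing partitions later changes which partition each key hashes to.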

Development domain is 30% and it's hands-on focused

Producer API implementation questions assume you know key serialization and value serialization inside out, like you've debugged serialization mismatches at 2 AM when your pipeline suddenly stops processing events and you're frantically checking schema registries trying to figure out what changed. Custom partitioners show up in scenario questions where default partitioning doesn't cut it. Interceptors are tested but less frequently. They're more niche in real deployments.
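Default keyed partitioning is hash-then-modulo: the same key always lands on the same partition, as long as the partition count doesn't change. The sketch below uses `zlib.crc32` purely as a stand-in hash (Kafka's Java client uses murmur2, so real partition numbers will differ), and the "VIP keys get their own partition" rule is a hypothetical example of when a custom partitioner makes sense.

```python
import zlib

def default_style_partition(key: bytes, num_partitions: int) -> int:
    """Hash-mod partitioning; CRC32 is a stand-in for Kafka's murmur2."""
    return (zlib.crc32(key) & 0x7FFFFFFF) % num_partitions

def vip_partitioner(key: bytes, num_partitions: int) -> int:
    """Hypothetical custom partitioner: reserve partition 0 for keys prefixed 'vip-'."""
    if key.startswith(b"vip-"):
        return 0
    # Spread everyone else over partitions 1..num_partitions-1.
    return 1 + default_style_partition(key, num_partitions - 1)


for k in (b"user-42", b"user-42", b"vip-7"):
    print(k, vip_partitioner(k, 6))
```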

The Consumer API stuff? Brutal if you haven't actually built consumers. Subscription methods vary. Polling patterns need to match your processing requirements. Commit strategies determine your delivery guarantees. Rebalancing handling separates developers who've debugged production issues from those who haven't.
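The link between commit strategy and delivery guarantee is easiest to see with a crash in the middle. This toy simulation (plain Python, no Kafka client; `run_with_crash` is a hypothetical helper) crashes between the process step and the commit step, then restarts from the last committed offset. Commit first and you lose a record (at-most-once); process first and you redo one (at-least-once).

```python
def run_with_crash(records: list[str], commit_first: bool, crash_at: int) -> list[str]:
    """Simulate one consumer crash between the 'process' and 'commit' steps, then a restart."""
    processed: list[str] = []
    committed = 0  # next offset to read after a restart
    for offset, record in enumerate(records):
        if commit_first:
            committed = offset + 1      # commit...
            if offset == crash_at:
                break                   # ...crash before processing: record is skipped on restart
            processed.append(record)
        else:
            processed.append(record)    # process...
            if offset == crash_at:
                break                   # ...crash before committing: record is re-read on restart
            committed = offset + 1
    processed.extend(records[committed:])  # restart from the committed offset
    return processed


events = ["a", "b", "c"]
print(run_with_crash(events, commit_first=True, crash_at=1))   # ['a', 'c']  (b lost)
print(run_with_crash(events, commit_first=False, crash_at=1))  # ['a', 'b', 'b', 'c']  (b duplicated)
```

At-least-once plus idempotent processing on your side is the usual compromise when full exactly-once isn't available.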

Error handling and retry logic questions are where experience shows. The exam loves asking about idempotent retries, dead letter queues, and how to handle deserialization failures without blocking your entire consumer.
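One pattern that keeps a single poison pill from blocking a consumer: send records that can't be deserialized straight to a dead letter queue (retrying won't fix bad bytes), retry transient processing failures a bounded number of times, and dead-letter whatever still fails. A sketch using JSON as the wire format; `TransientError`, `handle`, and the in-memory DLQ list are hypothetical stand-ins for your own error type and a real DLQ topic, and backoff between retries is omitted.

```python
import json
from typing import Any, Callable

class TransientError(Exception):
    """Hypothetical retryable failure, e.g. a downstream service timing out."""

def handle(records: list[bytes], process: Callable[[dict], Any],
           max_attempts: int = 3) -> tuple[list[Any], list[tuple[bytes, str]]]:
    done: list[Any] = []
    dead_letters: list[tuple[bytes, str]] = []
    for raw in records:
        try:
            event = json.loads(raw)
        except (json.JSONDecodeError, UnicodeDecodeError) as err:
            dead_letters.append((raw, f"deserialization failed: {err}"))  # don't retry bad bytes
            continue
        for attempt in range(1, max_attempts + 1):
            try:
                done.append(process(event))
                break
            except TransientError as err:
                if attempt == max_attempts:
                    dead_letters.append((raw, f"gave up after {attempt} attempts: {err}"))
    return done, dead_letters


ok, dlq = handle([b'{"amount": 5}', b"not json"], lambda e: e["amount"] * 2)
print(ok)        # [10]
print(len(dlq))  # 1
```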

Performance optimization covers compression types (which actually impact CPU usage significantly), batching configurations that balance latency versus throughput, and buffer management that most developers just leave at defaults.
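Why batching and compression interact: Kafka compresses whole record batches, so a bigger batch (tuned with `batch.size` and `linger.ms`) gives the codec more repetition to work with. A quick stdlib experiment with gzip on made-up JSON events shows the effect; exact byte counts will vary, and gzip here only stands in for whatever `compression.type` you pick.

```python
import gzip
import json

def compressed_sizes(n: int) -> tuple[int, int, int]:
    """Return (raw bytes, sum of per-record gzip sizes, gzip size of one batch)."""
    records = [
        json.dumps({"user_id": i, "event": "page_view", "page": "/checkout"}).encode()
        for i in range(n)
    ]
    raw = sum(len(r) for r in records)
    one_by_one = sum(len(gzip.compress(r)) for r in records)
    batched = len(gzip.compress(b"".join(records)))
    return raw, one_by_one, batched


raw, one_by_one, batched = compressed_sizes(200)
print(f"raw={raw} per-record={one_by_one} batched={batched}")
```

Per-record compression can even come out larger than the raw data once gzip's fixed header and trailer are added, which is part of why tiny batches compress poorly.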

Deployment and testing takes 20% but feels harder

Integration testing with embedded Kafka or Testcontainers appears in scenario questions about CI/CD pipelines, which honestly reflects how modern teams actually work since nobody's manually deploying Kafka apps anymore without some automation layer. Performance testing and benchmarking questions ask about realistic load simulation. Harder than it sounds when you're trying to model production traffic patterns.

Monitoring application metrics? Critical stuff. Producer metrics tell you about send failures and latency. Consumer lag is the metric everyone watches but interpreting it correctly requires understanding partition assignment. Throughput measurements need context about compression and serialization overhead.
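Consumer lag per partition is just the log-end offset minus the group's committed offset. The sketch below (hypothetical helper, offsets as plain dicts, and it assumes the log starts at offset 0 when a partition has no commit yet) also shows why the per-partition view matters: a healthy-looking total can hide one stuck partition.

```python
def consumer_lag(end_offsets: dict[int, int], committed: dict[int, int]) -> dict[int, int]:
    """Lag per partition; a partition with no commit is treated as lagging from offset 0."""
    return {p: end - committed.get(p, 0) for p, end in end_offsets.items()}


end_offsets = {0: 10_000, 1: 10_000, 2: 10_000}
committed = {0: 9_990, 1: 10_000, 2: 4_000}
lag = consumer_lag(end_offsets, committed)
print(lag)                # {0: 10, 1: 0, 2: 6000}
print(sum(lag.values()))  # 6010
```

Partition 2 is doing nearly all the lagging, which points at a slow or stuck consumer (or a hot key) rather than a group-wide capacity problem.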

Kafka Streams and ksqlDB round out the exam at 22%

The Kafka Streams API basics section covers KStream for event streams, KTable for changelog streams, and GlobalKTable for broadcast state. The thing is, GlobalKTable trips people up because every application instance gets a full copy of all the topic's partitions instead of a partitioned slice, which changes how joins work (stream-GlobalKTable joins don't require co-partitioning). Stateless transformations like map, filter, and flatMap are straightforward. Stateful operations get complex fast. Aggregations require understanding state stores, joins need co-partitioning, and windowing introduces time semantics that confuse people.
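The KStream versus KTable distinction, plus windowing, can be modeled in a few lines of plain Python. Treat this as a mental model rather than Kafka Streams code: a stream keeps every event, a table keeps only the latest value per key, and a tumbling window buckets events by `timestamp - timestamp % size` (epoch-aligned, which is how I understand Kafka Streams aligns tumbling windows). Grace periods and late records are ignored here.

```python
from collections import Counter

# (key, timestamp_ms, value): a tiny hypothetical event stream
events = [
    ("alice", 1_000, "cart"),
    ("bob", 2_500, "home"),
    ("alice", 30_000, "checkout"),
    ("alice", 61_000, "home"),
]

def as_table(stream: list[tuple[str, int, str]]) -> dict[str, str]:
    """KTable-style view: later events for a key overwrite earlier ones."""
    table: dict[str, str] = {}
    for key, _, value in stream:
        table[key] = value
    return table

def tumbling_counts(stream: list[tuple[str, int, str]], size_ms: int) -> dict[tuple[str, int], int]:
    """Count events per (key, window start) in fixed, non-overlapping windows."""
    counts: Counter = Counter()
    for key, ts, _ in stream:
        counts[(key, ts - ts % size_ms)] += 1
    return dict(counts)


print(len(events))                      # 4: the stream keeps everything
print(as_table(events))                 # {'alice': 'home', 'bob': 'home'}
print(tumbling_counts(events, 60_000))  # {('alice', 0): 2, ('bob', 0): 1, ('alice', 60000): 1}
```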

ksqlDB basics are tested. Not deeply though. Creating streams and tables, writing basic queries. It's enough to be dangerous but not enough to build production stream processing.

Schema Registry integration is everywhere

Avro dominates real deployments, but the exam covers JSON Schema and Protobuf too, which makes sense given how many organizations have legacy systems spitting out JSON that they can't just rip out overnight even if Avro would be technically better. Schema evolution strategies matter because you'll break production if you don't understand backward compatibility versus forward compatibility versus full compatibility. Working with Schema Registry APIs means knowing how to configure producers and consumers to automatically register and retrieve schemas.
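The three compatibility modes come down to who reads what. Here's a deliberately simplified model of Avro-style rules (fields only, each flagged with whether it has a default; type changes, aliases, and nested records are ignored). It's a sketch, not Schema Registry's actual checker. For reference, Schema Registry's default compatibility level is `BACKWARD`.

```python
Schema = dict[str, bool]  # field name -> has a default value

def backward_compatible(old: Schema, new: Schema) -> bool:
    """New schema can read old data: every field the new schema adds needs a default."""
    return all(has_default for field, has_default in new.items() if field not in old)

def forward_compatible(old: Schema, new: Schema) -> bool:
    """Old schema can read new data: every field the new schema drops needs a default in the old one."""
    return all(has_default for field, has_default in old.items() if field not in new)

def full_compatible(old: Schema, new: Schema) -> bool:
    return backward_compatible(old, new) and forward_compatible(old, new)


v1 = {"id": False, "amount": False}
v2 = {"id": False, "amount": False, "currency": True}  # adds a field with a default
v3 = {"id": False, "amount": False, "region": False}   # adds a field without a default
v4 = {"id": False}                                     # drops 'amount', which had no default

print(full_compatible(v1, v2))                                  # True
print(backward_compatible(v1, v3), forward_compatible(v1, v3))  # False True
print(backward_compatible(v1, v4), forward_compatible(v1, v4))  # True False
```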

Security configurations from the developer perspective

Expect questions on configuring SSL/TLS and SASL authentication in your client code, and on how ACLs affect what your application is allowed to do. This isn't deep security architecture (that's more CCAAK territory), but you need to know how to connect securely.
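On the client side, "connect securely" mostly means getting a handful of properties right. The property names below are standard Kafka client settings; the helper, the username, and the truststore path are hypothetical, and in real code the password should come from a secret store rather than a literal.

```python
SCRAM_MECHANISMS = ("SCRAM-SHA-256", "SCRAM-SHA-512")

def sasl_ssl_scram_config(username: str, password: str, truststore_path: str,
                          mechanism: str = "SCRAM-SHA-512") -> dict[str, str]:
    """Build client properties for SASL/SCRAM authentication over TLS."""
    if mechanism not in SCRAM_MECHANISMS:
        raise ValueError(f"{mechanism} is not a SCRAM mechanism")
    jaas = (
        "org.apache.kafka.common.security.scram.ScramLoginModule required "
        f'username="{username}" password="{password}";'
    )
    return {
        "security.protocol": "SASL_SSL",             # SASL auth on top of an encrypted TLS connection
        "sasl.mechanism": mechanism,
        "sasl.jaas.config": jaas,
        "ssl.truststore.location": truststore_path,  # CA certs used to verify the brokers
    }


props = sasl_ssl_scram_config("orders-app", "change-me", "/etc/kafka/truststore.jks")
print("\n".join(f"{k}={v}" for k, v in props.items()))
```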

Preparation timeline and difficulty reality check

CCDAK sits at moderate to challenging difficulty, and honestly it depends on your background. If you've got 6-12 months of actual Kafka development work, you're in decent shape. Study timeline recommendations vary wildly: experienced developers can prep in 4-8 weeks hitting it hard, but if Kafka is new to you, budget 8-12 weeks minimum. The exam assumes hands-on experience that you can't fake by memorizing documentation.

Common pitfalls? Overthinking simple questions. Not reading scenarios carefully enough. Some questions have multiple "correct" answers where you need to pick the best one for the specific context described.

Post-certification, you're looking at Kafka Developer roles, Streaming Engineer positions, and Data Platform Developer opportunities that specifically call out Confluent certification in job postings.

CCAAK - Confluent Certified Administrator for Apache Kafka Certification Examination

where this exam fits in Confluent Certification Exams

If you're reading about Confluent Certification Exams and your brain goes straight to brokers, disks, TLS, and "why is the ISR shrinking again", CCAAK is your lane.

CCAAK is the Confluent Certified Administrator for Apache Kafka certification, and honestly it's way more ops-heavy than the developer track. The point is proving you can run Kafka as a service, not just write producers and consumers. If you're still comparing options, keep the exam pages for both CCDAK (Confluent Certified Developer for Apache Kafka Certification Examination) and CCAAK (Confluent Certified Administrator for Apache Kafka Certification Examination) bookmarked.

what the CCAAK exam is really testing

The exam code is CCAAK, officially "Confluent Certified Administrator for Apache Kafka Certification Examination". The admin focus is obvious once you look at the domains: cluster management, security, monitoring and operations, and then troubleshooting that expects you to think like an on-call SRE at 2 a.m. Not theory. Operational judgment.

Look, this is aimed at Linux administrators, Kubernetes operators, cloud infrastructure engineers, and SREs. If your day job includes systemd units, JVM heap arguments, storage classes, ingress policies, or arguing about whether to run Kafka on Kubernetes, you're the target audience. Data engineers can pass it too, but the thing is, the exam assumes you're comfortable with infrastructure knobs and failure modes, not just Kafka APIs.

format, scoring, and scheduling details

The CCAAK exam format is straightforward: 60 multiple-choice questions, 90 minutes, and a passing score around 70% (Confluent doesn't always shout the exact number, but that's the working expectation people report).

Registration works the same way as for the developer exam: same platform as CCDAK, same remote proctoring requirements. Expect the usual: clean desk, stable internet, webcam rules, ID check, and a proctor who'll absolutely ask you to move your camera if you breathe wrong. If you already scheduled CCDAK, the flow will feel familiar. If you haven't, practice your setup once before exam day. Seriously.

what you have to know: the four core domains

Here's the breakdown the exam basically revolves around. Percentages matter because time matters.

- Kafka Cluster Management (35%): the biggest chunk, and it shows.

- Security (20%): lots of config details, plus "what would you do" scenarios.

- Monitoring and Operations (25%): metrics, tooling, and staying ahead of incidents.

- Troubleshooting (20%): the messy stuff, where Kafka gets real.

cluster management (35%) is the make-or-break section

This domain is about installing, configuring, and maintaining Kafka clusters, plus understanding how your architecture choices change operations. Single datacenter setups are simpler, multi-datacenter introduces replication and failure domains, and cloud-native deployments add another layer of "your storage isn't actually a disk".

Broker configuration fundamentals show up a lot. Think server.properties parameters, JVM tuning, and operating system optimization. You don't need to memorize every single config key, but you do need to know what impacts throughput vs latency, what influences log retention and segment sizing, and how thread counts and network buffers can become bottlenecks when traffic spikes. It's the kind of stuff that bites you during midnight pages when you're trying to figure out why producers are suddenly timing out across three availability zones and Slack is blowing up.

ZooKeeper's still fair game for legacy deployments, but you also need a working understanding of KRaft mode. Not just "it exists", but what changes operationally, what components move where, and what that means for upgrades and recovery. The exam isn't trying to trap you, it's checking whether you can live in mixed reality where some clusters are older and some are modern.

Capacity planning is another big one. Disk sizing, network bandwidth calculations, partition limits. The short version: partitions cost memory. Replication costs disk. Cross-AZ traffic costs money.
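A back-of-the-envelope disk and replication-traffic calculation looks like this. The formula (ingress × retention × replication factor, spread over brokers, plus free-space headroom) is a common simplification that ignores compression, uneven partition placement, and index overhead; the 30% headroom default is an assumption, not a Confluent number. If replicas are spread across availability zones, most of the follower traffic is cross-AZ.

```python
def disk_per_broker_gb(ingress_mb_s: float, retention_hours: float,
                       replication_factor: int, brokers: int, headroom: float = 0.3) -> float:
    """Disk needed per broker (GB, using 1024 MB per GB) to hold the retained data."""
    total_mb = ingress_mb_s * 3600 * retention_hours * replication_factor
    per_broker_gb = total_mb / brokers / 1024
    return per_broker_gb / (1 - headroom)  # keep `headroom` fraction of each disk free

def replication_traffic_mb_s(ingress_mb_s: float, replication_factor: int) -> float:
    """Follower fetch traffic: each produced byte is copied to (RF - 1) followers."""
    return ingress_mb_s * (replication_factor - 1)


print(round(disk_per_broker_gb(50, 72, 3, 6, headroom=0), 1))  # 6328.1
print(round(disk_per_broker_gb(50, 72, 3, 6), 1))              # 9040.2
print(replication_traffic_mb_s(50, 3))                         # 100
```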

Upgrades matter too: rolling upgrades, version compatibility, and what a downgrade scenario looks like when you realize a client library or broker version mismatch is breaking production, and you need a safe path back without turning your cluster into a science project.

security (20%) goes beyond "turn on SSL"

Security is where people lose points because they treat it like a checklist. The exam wants you to understand the moving parts and tradeoffs.

Authentication mechanisms you should know: SASL/PLAIN, SASL/SCRAM, SASL/GSSAPI (Kerberos), and mTLS. Expect questions that mix broker config and client implications, like what breaks when you rotate certs, or how you separate internal broker-to-broker auth from external client auth.

Authorization is ACL-heavy. You need comfort with principal types, resource patterns (literal vs prefixed), and operation permissions. Also, read carefully. One word changes the answer.
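ACL evaluation is where "read carefully" pays off. This sketch models a few rules as I understand Kafka's default authorizer: LITERAL patterns match the exact name (or `*`), PREFIXED patterns match by prefix, any matching DENY beats any ALLOW, and no matching ALLOW means denied. It deliberately ignores hosts, implied operations (such as READ implying DESCRIBE), and `allow.everyone.if.no.acl.found`; the `Acl` class is hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Acl:
    principal: str   # e.g. "User:orders-app", or "User:*" for everyone
    resource: str    # topic name or prefix
    pattern: str     # "LITERAL" or "PREFIXED"
    operation: str   # e.g. "READ", "WRITE", or "ALL"
    permission: str  # "ALLOW" or "DENY"

def _matches(acl: Acl, principal: str, topic: str, operation: str) -> bool:
    if acl.principal not in (principal, "User:*"):
        return False
    if acl.operation not in (operation, "ALL"):
        return False
    if acl.pattern == "LITERAL":
        return acl.resource in (topic, "*")
    return topic.startswith(acl.resource)  # PREFIXED

def authorized(acls: list[Acl], principal: str, topic: str, operation: str) -> bool:
    hits = [a for a in acls if _matches(a, principal, topic, operation)]
    if any(a.permission == "DENY" for a in hits):
        return False  # DENY always wins
    return any(a.permission == "ALLOW" for a in hits)


acls = [
    Acl("User:orders-app", "orders-", "PREFIXED", "WRITE", "ALLOW"),
    Acl("User:orders-app", "orders-audit", "LITERAL", "WRITE", "DENY"),
]
print(authorized(acls, "User:orders-app", "orders-eu", "WRITE"))     # True
print(authorized(acls, "User:orders-app", "orders-audit", "WRITE"))  # False (explicit DENY)
print(authorized(acls, "User:orders-app", "orders-eu", "READ"))      # False (no ALLOW for READ)
```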

Encryption in transit includes SSL/TLS for broker-to-broker and client-to-broker traffic, and you should understand what config lives where. Encryption at rest is different: Kafka doesn't encrypt data on disk by itself, so it shows up as strategy, meaning disk encryption approaches and key management realities. Add audit logging and compliance monitoring on top, because in real orgs security teams want evidence, not vibes.

monitoring, operations, and troubleshooting are where the exam gets spicy

Monitoring and Operations (25%) hits key Kafka metrics like under-replicated partitions, ISR shrink rate, and request handler idle percentage. You're expected to interpret, not just define. JMX metrics collection comes up, because Kafka still lives there, and you should know how it gets scraped and visualized.

Tooling matters: Prometheus, Grafana, Datadog, Confluent Control Center. You don't need to be married to one, but you should understand what each is good for. Logs also matter. Broker logs, controller logs, and the habit of correlating them with metric spikes. Fragments. Patterns. Root cause.

Troubleshooting (20%) covers classic Kafka pain: broker crashes, disk failures, network partitions. Leadership issues and preferred replica election. Consumer group problems like rebalancing storms, lag accumulation, stuck consumers. Producer errors too, including timeouts, buffer exhaustion, and metadata refresh problems that look like app bugs but are actually cluster symptoms.

Data loss scenarios get attention, and so does prevention. Disaster recovery procedures come up as well: backup strategies, cluster migration, topic recreation, and knowing what you can and can't recover after a bad day.
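A lot of producer-side "Kafka is down" reports are really `acks=all` meeting `min.insync.replicas`, which is also the main data-loss guard. This sketch models that interaction for one partition, under a big simplification: the ISR is treated as simply the live replicas, and unclean leader election is assumed off. `write_outcome` is a hypothetical helper; the `NotEnoughReplicas` string mirrors the error the Java client raises (`NotEnoughReplicasException`).

```python
def write_outcome(replicas: list[int], down: set[int], min_insync: int, acks: str = "all") -> str:
    """Rough outcome of a produce request to one partition given which brokers are down."""
    isr = [b for b in replicas if b not in down]  # simplification: every live replica is in sync
    if not isr:
        return "offline"            # no in-sync replica left to lead the partition
    if acks == "all" and len(isr) < min_insync:
        return "NotEnoughReplicas"  # rejected rather than written with too few copies
    return "ok"


replicas = [1, 2, 3]
for down in (set(), {3}, {2, 3}, {1, 2, 3}):
    print(sorted(down), write_outcome(replicas, down, min_insync=2))
# [] ok / [3] ok / [2, 3] NotEnoughReplicas / [1, 2, 3] offline
print(write_outcome(replicas, {2, 3}, min_insync=2, acks="1"))  # ok, but only one copy exists
```

With replication factor 3 and `min.insync.replicas=2`, you can lose one broker and keep accepting `acks=all` writes; lose two and producers get errors instead of silently writing under-replicated data.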

Also bundled into the admin skillset: topic and partition management (create topics, modify configs, partition reassignment), dynamic config updates without restart, quotas (producer, consumer, request), plus ecosystem work like Kafka Connect cluster ops, connector monitoring, and Schema Registry administration. I once spent an entire afternoon debugging why Schema Registry health checks were failing only to discover someone had quietly changed the Kafka ACLs and nobody thought to mention it. That's the kind of thing you'll need instinct for.

difficulty, prep time, and how to practice

Most people report CCAAK as harder than CCDAK. Broader scope. More "real ops" assumptions. Less room for guessing.

Recommended hands-on experience is 6 to 18 months of Kafka cluster administration. Study timeline: 6 to 10 weeks for experienced sysadmins, 10 to 14 weeks if Kafka ops is new to you. Lab it. Docker is fine for basics, Kubernetes is better if that's your world, and a small cloud setup teaches you the stuff that hurts, like storage performance and network boundaries.

The common challenge is scenario-based questions that need deep operational knowledge. Not trivia. Cause and effect across configs, metrics, and failure modes.

what you get after passing

This is one of the Confluent credentials that hiring managers actually map to responsibilities. Post-cert, you're more credible for Kafka Administrator, Platform Engineer, and Infrastructure Architect roles, and yes, it can show up in compensation discussions, especially if you're the person who can keep streaming platforms stable.

If you want the official details and a clean starting point, go to the CCAAK (Confluent Certified Administrator for Apache Kafka Certification Examination) exam page.

CCDAK vs CCAAK - Full Comparison and Selection Guide

What your day actually looks like matters more than you think

Here's the thing. I've watched too many folks grab the wrong Confluent certification 'cause they chased what sounded impressive instead of matching their actual work. The CCDAK (Confluent Certified Developer for Apache Kafka) and CCAAK (Confluent Certified Administrator for Apache Kafka) exams? They test completely different skill sets, and picking wrong means you'll burn months studying material you'll never touch in your real job. Which honestly just sucks.

CCDAK folks spend their days writing application code. We're talking about implementing business logic that processes streaming data, designing event schemas that won't explode when requirements inevitably change next quarter, and optimizing application performance 'cause that batch job's taking forever and your manager's breathing down your neck. Your IDE? That's home.

CCAAK people?

Different universe entirely.

They're provisioning clusters, implementing security policies that actually function (not just checkboxes), and monitoring infrastructure at 3am when something breaks. And it always breaks. They do capacity planning so the cluster doesn't collapse during Black Friday, and they respond to incidents when developers panic-message "Kafka is down." Spoiler: it's rarely down; far more often it's their code.

The technical depth you need is wildly different

CCDAK requires serious programming chops. No way around it. Java and Python dominate, though Go's becoming more common in the wild. You've gotta know the producer and consumer APIs inside out, understand stream processing libraries like Kafka Streams, work with testing frameworks 'cause nobody wants untested streaming code hitting production. I mean, you're building applications that happen to use Kafka as their nervous system.

CCAAK? All infrastructure management. Linux systems administration isn't optional here; it's foundational. Networking knowledge matters 'cause you need to debug why that broker can't communicate with ZooKeeper (or, on newer KRaft-based clusters, with the controller quorum). Security protocols, distributed systems concepts, and automation tools like Ansible or Terraform become your daily companions. You're keeping the platform running so developers can actually do their thing without everything catching fire.

Starting point assumptions you better meet

Not gonna sugarcoat it. Jumping into CCDAK without software development experience is brutal and frankly, kinda masochistic. You need that foundation of building applications, understanding distributed systems at least conceptually, basic Kafka concepts like what a topic actually represents. Never written code professionally? This exam will absolutely hurt.

CCAAK assumes you've done systems administration work before. Period. Networking knowledge is key. Like, really key. Experience with production infrastructure where things break spectacularly and you're the one fixing them at odd hours. If you've never SSHed into a Linux box or debugged why two servers refuse to communicate, honestly, start there first before even thinking about this cert.

Where these exams overlap (and where they diverge hard)

Both certifications cover Kafka architecture fundamentals 'cause you can't perform either role without understanding how Kafka actually works under the hood. Topic concepts. Partitioning mechanics. Replication strategies. Basic security stuff. That's common ground where everyone meets.

CCDAK gets specific about client APIs, diving deep into Kafka Streams for stateful processing and into schema management from the application perspective, using formats like Avro along with Schema Registry integration. You're constantly thinking about how your code interacts with Kafka's APIs and what happens when messages flow through your application logic.

CCAAK goes hard on broker configuration. There are SO many settings, it's honestly overwhelming at first. Cluster operations like adding brokers or rebalancing partitions without downtime, advanced security implementation beyond just "turn on SSL and pray," monitoring infrastructure with metrics that actually matter versus vanity numbers. You're thinking about keeping Kafka healthy 24/7 while developers complain about latency.

I knew a guy who tried cramming for CCAAK in two weeks because he figured "infrastructure is simpler than code." He failed twice. Turns out configuration tuning alone has more depth than most people expect, and troubleshooting requires instincts you only build through actual operational experience. Third time he passed, but only after spending six months actually running a test cluster and breaking things intentionally.

Difficulty is real but different for each

The CCAAK exam challenges you with breadth of operational knowledge that's really intimidating. Troubleshooting complex scenarios where multiple things are simultaneously wrong and cascading. Security implementation details that go way beyond the documentation examples everyone reads. One question might jump from networking to monitoring to security without warning. Keeps you on your toes.

CCDAK hits you with depth on the Streams API, and that thing has layers upon layers upon layers. Schema evolution scenarios where you've gotta figure out compatibility modes under pressure. Performance optimization questions requiring understanding both your code AND Kafka internals, which honestly trips people up constantly.
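
Compatibility modes get easier once you remember which side is reading. In Schema Registry terms, BACKWARD means consumers on the new schema can read data written with the previous one, FORWARD means consumers on the previous schema can read data written with the new one, and FULL means both. The Python sketch below models that for flat records only. It's a simplification that ignores type promotion, unions, aliases, nested records, and the transitive modes, and the schema representation is made up for the example.

```python
from typing import Dict, Tuple

# Simplified flat record schema: field name -> (type name, has_default).
Schema = Dict[str, Tuple[str, bool]]


def can_read(reader: Schema, writer: Schema) -> bool:
    """Rough Avro-style resolution: can `reader` decode data written with `writer`?

    Writer fields the reader doesn't know are skipped; reader fields missing from the
    writer need a default; shared fields must keep the same type.
    """
    for name, (reader_type, has_default) in reader.items():
        if name in writer:
            if writer[name][0] != reader_type:
                return False
        elif not has_default:
            return False
    return True


def compatible(new: Schema, old: Schema, mode: str) -> bool:
    """Check `new` against the previous version `old` (non-transitive modes only)."""
    if mode == "BACKWARD":
        return can_read(reader=new, writer=old)
    if mode == "FORWARD":
        return can_read(reader=old, writer=new)
    if mode == "FULL":
        return can_read(reader=new, writer=old) and can_read(reader=old, writer=new)
    raise ValueError(f"unsupported mode: {mode}")


if __name__ == "__main__":
    v1 = {"id": ("string", False), "amount": ("double", False)}
    v2 = {**v1, "currency": ("string", True)}   # new field with a default
    v3 = {**v1, "currency": ("string", False)}  # new field without a default
    for mode in ("BACKWARD", "FORWARD", "FULL"):
        print(mode, compatible(v2, v1, mode), compatible(v3, v1, mode))
```

Adding `currency` with a default passes all three modes. Adding it without a default breaks BACKWARD (and therefore FULL) but still passes FORWARD, because old readers simply skip the extra field.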

Pass rate considerations?

Confluent doesn't publish official pass rates for either exam (annoying, I know), but community feedback suggests they're comparably difficult, just in completely different dimensions. If I had to guess from forum chatter and what people share online, maybe a 60-70% pass rate, but that's unofficial speculation.

Time investment reality check

CCDAK needs 40-80 hours if you're already developing with Kafka regularly. Complete beginners? Plan for 80-120 hours minimum 'cause you're learning both Kafka fundamentals and the development patterns simultaneously. Which is like learning two languages at once.

CCAAK requires 50-90 hours for experienced sysadmins who know Linux cold and have battle scars from production incidents. New to Kafka administration? You're looking at 90-150 hours 'cause there's just so much operational knowledge to absorb. Configuration tuning. Monitoring strategies. Security implementations. Disaster recovery procedures.

Career trajectory alignment is everything

Choose CCDAK if you're planning to work as an application developer building streaming pipelines that process real-time events, a data engineer focusing on real-time data processing architectures, or a backend engineer where Kafka's embedded in your stack. Tech companies, startups, financial services, e-commerce platforms all value this certification and actively recruit for it.

Choose CCAAK if you're pursuing DevOps roles where you own the platform's reliability. Platform engineering positions. SRE career paths where you're on call for infrastructure. Infrastructure architecture roles. Enterprises with large-scale Kafka deployments and cloud service providers value this heavily 'cause they need people who won't panic when things break.

Salary impact varies by market

CCDAK has a stronger impact in software engineering and data engineering markets, where streaming expertise is rare and commands premium salaries. Typically $120K-180K depending on location and experience level, sometimes higher in tech hubs.

CCAAK carries higher value in infrastructure-focused organizations that run massive platforms. Platform teams at big companies. Dedicated Kafka operations roles. SRE positions. Similar salary range but different job market dynamics and honestly, different stress profiles.

Going for both? Here's the strategy

Holding both certifications gives you full-stack Kafka expertise. That makes you ridiculously valuable for leadership positions, opens better consulting opportunities with clients who need someone covering both code and operations, and honestly? You understand the full picture of how Kafka works in production. Not just your slice of it.

Recommended sequence depends on your current role but generally tackle your primary job function first for quick wins. Developer? CCDAK then CCAAK. Admin? Reverse it. Time investment for both runs 6-12 months with proper planning and consistent study habits, maybe longer if you're juggling demanding work.

Startups often favor CCDAK 'cause they need versatile engineers who wear multiple hats. Enterprises frequently require CCAAK for dedicated platform teams. Know your target employer's culture and priorities.

How to actually decide

Real talk time. Ask yourself: Do I write code daily or manage infrastructure? Where do I really want to be in two years, not where someone told me to aim? What technical work energizes me, and what completely drains me? Do I learn better through hands-on coding or through systems troubleshooting?

Try this experiment: spend 2-3 weeks studying topics from each exam's syllabus. Which material feels natural versus forced and awkward? That's your answer right there. Your gut knows even if your brain's overthinking it.

Career Impact and Salary Expectations for Confluent Certifications

why these certs move your career

Confluent Certification Exams carry real signal in the streaming world. Hiring managers actually know what CCDAK and CCAAK map to, and honestly, that matters when your resume's buried under a mountain of vague "Kafka exposure" bullets.

Kafka's everywhere. But here's the thing: real Kafka skill? Rare as hell. Teams want folks who can explain consumer groups without the usual hand-waving nonsense, or who can keep a cluster stable while traffic's spiking and someone decides to rotate credentials at literally the worst possible time (I mean, why do they always do that?). That's why the Confluent Kafka certification path can speed up promotions, role changes, and pay bumps even if your current title doesn't scream "streaming" yet.

developer vs admin validation

CCDAK's the builder badge. The CCDAK (Confluent Certified Developer for Apache Kafka Certification Examination) lines up with producer and consumer behavior, delivery semantics, schemas, Kafka Streams basics, and the patterns you use to ship event-driven microservices without accidentally creating a distributed mess that'll haunt you at 3am.

CCAAK's the caretaker badge. The CCAAK (Confluent Certified Administrator for Apache Kafka Certification Examination) is about actually running the thing: cluster setup concepts, security, monitoring, ops, troubleshooting, and all that day-two stuff that absolutely wrecks careers when you ignore it.

Different muscles. Different interviews. Different headaches.

roles unlocked by CCDAK

A Kafka developer certification like CCDAK tends to unlock roles where your output is code and your "ops" responsibility is mostly correctness, reliability, and picking reasonable defaults that won't explode later.

Kafka Application Developer's the obvious one. You're building event-driven microservices, implementing streaming data pipelines, picking partition keys that won't completely torch ordering guarantees, and arguing (politely, hopefully) about idempotent producers and consumer retries. This role's where CCDAK knowledge shows up fast in interviews because people will absolutely grill you about reprocessing, schema evolution, and what you'd do when a downstream service can't keep up.
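
The partition-key point is easy to see in code: records with the same key hash to the same partition, which is what gives you per-key ordering. The sketch below uses `zlib.crc32` as a stand-in hash. Kafka's Java client default partitioner uses murmur2 on the key bytes, so real partition numbers will differ, but the hash-then-modulo idea and its consequence when you add partitions are the same.

```python
import zlib
from typing import Iterable, List


def partition_for(key: str, num_partitions: int) -> int:
    """Map a record key to a partition: hash the key bytes, then take it modulo the count.

    Stand-in hash (crc32), NOT Kafka's murmur2, so exact numbers won't match a cluster.
    """
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def moved_keys(keys: Iterable[str], old_count: int, new_count: int) -> List[str]:
    """Keys whose partition changes when the topic's partition count changes."""
    return [k for k in keys if partition_for(k, old_count) != partition_for(k, new_count)]


if __name__ == "__main__":
    # Every event for the same (hypothetical) order key lands on one partition,
    # so consumers see that order's events in the sequence they were produced.
    print({event: partition_for("order-42", 6) for event in ("created", "paid", "shipped")})
    keys = [f"order-{i}" for i in range(1000)]
    print(f"{len(moved_keys(keys, 6, 8))} of {len(keys)} keys move when going from 6 to 8 partitions")
```

That second number is why growing a keyed topic's partition count is a design decision, not a free scaling knob: events for a moved key produced before and after the change sit on different partitions, so ordering across that boundary is no longer guaranteed.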

Data Engineer (Streaming Focus) is a close second. You design real-time data architectures, integrate Kafka with data warehouses and lakehouses, and you live in that awkward zone between "data modeling" and "distributed systems behavior" where one bad decision about topic design or retention turns into a month of painful backfills and broken dashboards. Been there, it sucks.

Backend Engineer (Event-Driven Systems) also fits, especially if you're modernizing legacy apps with event streaming patterns, carving monolith flows into topics, and building outbox patterns so your DB writes and event publishes stop disagreeing with each other. Streaming Platform Developer pops up at larger orgs where you build internal developer platforms around Kafka: templates, golden-path libraries, guardrails, opinionated tooling. Integration Engineer's another one, connecting diverse systems through Kafka-based event meshes, but I'm not gonna lie, it can get political fast because you end up negotiating contracts between teams more than actually writing code. Sometimes I think the hardest part of streaming architecture isn't the tech at all, it's convincing Product and Finance to agree on what an "order completed" event actually means.

roles unlocked by CCAAK

CCAAK tends to unlock infrastructure and operations paths where you own uptime, performance, and figuring out "why is latency weird at 2am" mysteries.

Kafka Cluster Administrator's the direct mapping. You manage production Kafka environments, handle upgrades, tune configs, manage ACLs, validate broker health, and you're the person everyone pings when consumer lag graphs start climbing and nobody knows whether it's the app or the cluster causing chaos.

Platform Engineer's the modern version of that, building self-service data streaming platforms for dev teams so they can request topics, schemas, connectors, and permissions without filing tickets for two weeks while their project deadline screams past. SRE's another natural landing spot. You maintain Kafka as critical infrastructure and implement observability that actually helps: meaningful SLOs, saturation signals, and alerting that doesn't page you for normal rebalances. Cloud Infrastructure Engineer shows up when the company runs Kafka on AWS, Azure, GCP, or hybrid, and needs someone who understands networking, IAM, scaling, and failure domains without just guessing and hoping. DevOps Engineer (Data Platform) overlaps, usually focusing on automating Kafka deployment, configuration, and operations through pipelines and IaC.
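
"Alerting that doesn't page you for normal rebalances" usually means requiring a condition to hold for a while before firing. Prometheus alerting rules express this with a `for:` duration; the Python sketch below shows the same idea as a consecutive-sample counter. The threshold and sample counts are hypothetical and would need tuning against your own lag patterns.

```python
from typing import List


class SustainedLagAlert:
    """Fire only when consumer lag stays above `threshold` for `required` consecutive samples.

    A brief spike (for example, while a consumer group rebalances) resets the streak
    as soon as lag drops back under the threshold, so it never pages anyone.
    """

    def __init__(self, threshold: int, required: int) -> None:
        self.threshold = threshold
        self.required = required
        self.streak = 0

    def observe(self, lag: int) -> bool:
        self.streak = self.streak + 1 if lag > self.threshold else 0
        return self.streak >= self.required


if __name__ == "__main__":
    alert = SustainedLagAlert(threshold=1_000, required=3)
    # Made-up lag samples: one rebalance blip, then a genuine sustained backlog.
    samples: List[int] = [50, 5_000, 40, 5_000, 6_000, 7_000]
    print([alert.observe(lag) for lag in samples])
```

Only the last sample fires: the single blip at the second sample resets once lag recovers, while the final three samples form a real backlog.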

real-world career impact beyond salary

The Confluent certification career impact? It's honestly more than just a number on an offer letter.

Hiring signal strength is real. In competitive markets, having CCDAK or CCAAK on a resume tells reviewers what kind of Kafka problems you can likely handle, and it reduces the "maybe this person just read one blog post" doubt that kills applications.

Interview performance improves too. The exam forces you to name things precisely, so you stop saying vague stuff like "Kafka guarantees delivery" and start talking about what producers and consumers actually do, what you configured, and what trade-offs you accepted. That turns rambling answers into confident technical discussions that senior interviewers respect.
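
A concrete example of that precision: whether a consumer loses or duplicates records after a crash depends on when it commits offsets relative to processing. The Python sketch below simulates one crash and a restart from the last committed offset. It's a deliberately simplified model (real consumers usually commit in batches, and exactly-once needs Kafka transactions or an idempotent sink), and the function is hypothetical.

```python
from typing import List


def simulate(records: List[str], commit_first: bool, crash_at: int) -> List[str]:
    """Return what actually got processed across a crash at offset `crash_at` and a restart."""
    processed: List[str] = []
    committed = 0  # next offset to read after a restart
    for offset, record in enumerate(records):
        if commit_first:
            committed = offset + 1        # commit, then process
            if offset == crash_at:
                break                     # crash before processing: this record is skipped
            processed.append(record)
        else:
            processed.append(record)      # process, then commit
            if offset == crash_at:
                break                     # crash before committing: this record is re-read
            committed = offset + 1
    # Restart: resume from the last committed offset.
    processed.extend(records[committed:])
    return processed


if __name__ == "__main__":
    events = ["a", "b", "c", "d"]
    print("commit first: ", simulate(events, commit_first=True, crash_at=1))   # at-most-once
    print("process first:", simulate(events, commit_first=False, crash_at=1))  # at-least-once
```

Committing first loses `b`; processing first handles `b` twice. Neither outcome is "Kafka guaranteeing delivery." It's a trade-off you chose in your consumer code.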

Project assignment opportunities also shift. Certified folks tend to get pulled onto high-visibility streaming initiatives, either because leadership wants a safer pair of hands or because the existing platform team needs someone who can ship without constant supervision. Internal mobility gets easier as well, especially transfers to data platform and architecture teams, because you can point to your CCDAK or CCAAK certification as proof you're already doing the work mentally. Consulting opportunities are a sleeper benefit. Independent consultants use certs for client credibility when clients don't know how else to evaluate Kafka skill quickly.

salary expectations by role and region

Confluent certification salary ranges vary a lot, but here are 2026 US estimates that match what I keep seeing in job posts and recruiter pings.

CCDAK-certified Kafka Developer salaries: $95,000 to $145,000 USD. Entry-level certified developers (0-2 years Kafka) often land $95,000 to $115,000. Mid-level (2-5 years Kafka) tends to be $115,000 to $135,000. Senior folks (5+ years Kafka) commonly hit $135,000 to $165,000+ depending on scope and on-call expectations. That's the Confluent certification salary story on the dev side.

CCAAK-certified Kafka Administrator salaries: $100,000 to $155,000 USD. Entry-level certified administrators (0-2 years Kafka) are usually $100,000 to $120,000. Mid-level (2-5 years Kafka) lands around $120,000 to $140,000. Senior (5+ years Kafka) reaches $140,000 to $170,000+ when you're the person accountable for production stability, meaning the one getting paged at midnight because latency's spiking and executives are panicking.

Geography still matters. High-paying markets like the San Francisco Bay Area, New York City, Seattle, and Boston can push $120,000 to $180,000+ for strong candidates. Mid-tier markets like Austin, Denver, Chicago, and Atlanta often sit in the $90,000 to $140,000 range. Remote roles are more common now and typically pay 80 to 95% of top market rates. International markets vary, but Europe often runs €70,000 to €110,000, and Asia-Pacific depends heavily on country and company type.

Industry premiums are also a thing. Financial services pay extra for reliability and compliance. Tech companies compete hard and add equity. E-commerce and retail keep hiring for real-time inventory and customer data. Healthcare and life sciences are growing but compliance-heavy.

Dual certification's where it gets spicy. Holding both CCDAK and CCAAK often adds a 10 to 20% premium because you can build and run, and that also accelerates the leadership track toward architect and principal engineer roles since you can talk code, ops, and risk in the same meeting without sounding lost.

ROI and what to do next

Costs are pretty clear: exam fees are about $150 per exam, study materials can run $100 to $500, and the real price is your time, plus potential retakes if you rush. Still, if you're targeting streaming roles, the ROI can be strong because even one better offer, one internal transfer, or one high-impact project can pay the whole thing back fast.
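
To put rough numbers on that, here's a tiny Python sketch using the figures above ($150 per exam attempt, $100 to $500 for materials). The raise amount in the payback calculation is a hypothetical input, not a figure from any salary survey.

```python
def out_of_pocket(exams: int, materials: float, retakes: int = 0, fee: float = 150.0) -> float:
    """Cash cost in USD: every exam attempt (including retakes) plus study materials."""
    return (exams + retakes) * fee + materials


def payback_months(cost: float, annual_raise: float) -> float:
    """Months of a (hypothetical) pre-tax raise needed to cover the cost."""
    return cost / (annual_raise / 12)


if __name__ == "__main__":
    cheapest = out_of_pocket(exams=1, materials=100)
    both_exams_one_retake = out_of_pocket(exams=2, materials=500, retakes=1)
    print(cheapest, both_exams_one_retake)                   # 250.0 950.0
    print(round(payback_months(both_exams_one_retake, 6_000), 1))  # 1.9
```

Even the pessimistic case (both exams, one retake, top-end materials) is covered in under two months by a hypothetical $6,000 raise.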

If you're choosing where to start, use the role you want next. Then read the official pages for CCDAK and CCAAK, map the skills to your current gaps, and build your study plan around hands-on practice, not just notes.

Conclusion

Getting your cert sorted

Look, I've walked through what these Confluent exams demand from you, and honestly? They're not the kind of tests where you can wing it the night before. The CCDAK wants you thinking like a developer who actually understands Kafka internals, not just someone who's read the documentation once. And CCAAK? That's all about keeping clusters running when things go sideways at 3am.

The good news?

You don't have to figure this out alone.

Practice exams make a massive difference here because Confluent's question style is pretty particular. They love scenario-based questions that test whether you actually know what happens when you change a configuration or when a broker goes down. Reading theory is one thing. Answering questions about partition reassignment under time pressure is completely different. If you're serious about passing, check out the practice resources at /vendor/confluent/ where you can work through realistic exam questions for both certifications. For CCDAK there's a focused set at /confluent-dumps/ccdak/ and CCAAK has its own at /confluent-dumps/ccaak/.

The thing about these certifications is they actually mean something in the market right now. Really valuable, not just resume filler. Companies running Kafka infrastructure need people who know what they're doing, not just folks who've watched a YouTube tutorial. Getting certified proves you understand the architecture, the trade-offs, the operational concerns that matter when you're dealing with real-time data streams at scale.

I've seen people spend six months "planning to study" and never book the exam. Don't be that person.

Here's what I'd do: pick which exam matches where you want your career to go. Developer path? CCDAK. Infrastructure and operations? CCAAK. Then block out serious study time, work through those practice exams until the question patterns feel familiar, and book your test date. Having that deadline locked in changes everything because suddenly you're not "planning to study eventually" but actually doing it.

These certs open doors to roles that pay well and work on really interesting problems. Not gonna lie, studying takes effort. But three months from now you could be explaining your Confluent certification in job interviews instead of still thinking about maybe getting started someday.