Hortonworks Certification Exams Overview

What you're actually getting into with these certifications

Okay, so here's the deal. Hortonworks used to be one of the big players in Hadoop distributions before it merged with Cloudera in January 2019, but get this: their certifications didn't just vanish into thin air. The Hortonworks Certification Exams still matter in 2026 because they validate something employers actually care about: hands-on skills with the Apache Hadoop ecosystem. We're talking real problem-solving in live environments, not multiple-choice trivia that anyone could memorize over a weekend.

These aren't your typical vendor-specific tests, the kind where you memorize proprietary features. Hortonworks built their exams around Apache open-source projects like Hadoop, Hive, Pig, and Spark, which means what you learn transfers across different platforms and distributions. You could work with AWS EMR tomorrow and Azure HDInsight next month, and the core skills stay relevant.

Funny thing is, I spent three months prepping for Azure's data engineering cert before realizing I didn't understand distributed computing at all. I'd just memorized service names.

Who actually needs these credentials

Big data developers? Obvious candidates. Data engineers too, honestly. If you're managing Hadoop clusters as an administrator or building analytics pipelines, these certifications speak your language. The market's changed since the merger, but enterprises running on-prem Hadoop installations didn't just rip everything out overnight. They need people who understand HDP architecture and can work with the entire ecosystem.

The Apache-Hadoop-Developer exam focuses on Pig and Hive development specifically, while HDPCD covers broader platform development skills. Different audiences, different use cases.

Performance-based testing changes everything

Here's what separates Hortonworks exams from AWS or Azure big data certifications: you actually have to DO the work. No, there's no clicking through scenarios like some gamified tutorial. You get dropped into a live cluster environment and solve real problems under time pressure. Write Pig scripts that actually run. Build Hive queries that process actual data. Debug broken pipelines.

Cloud vendor exams test whether you know their specific services. Their pricing models, their management consoles. Hortonworks tests whether you can build data solutions, period. Not gonna lie, it's harder but way more valuable for proving technical competence.

Technical reality check before you start

You need Linux fundamentals. Like, actually comfortable with command line, not just "I opened terminal once" comfortable. SQL knowledge? Non-negotiable because Hive is SQL-on-Hadoop. Some programming basics help. Whether that's Python or Java doesn't really matter. The thing is, the exams assume you've worked with distributed systems concepts before, so if you haven't, you're starting from scratch.

For prep environments? You'll want a decent machine that can run virtual clusters. 16GB RAM minimum, honestly more if you're running multi-node setups locally. I mean, you could technically get by with less, but why torture yourself? Software-wise, you're installing Hadoop distributions, configuring services, the whole deal.

The certification lifecycle in 2026

Validity periods run three years typically. Pretty standard. Recertification requirements shifted after the merger. Cloudera integrated some pathways but kept the performance-based format because they knew that's what made these credentials valuable. You can take exams remotely with proctoring software or book test center slots. Remote's more convenient but you need a clean workspace and stable internet.

Exam fees? Several hundred dollars depending on the certification level. Study materials range from free Apache documentation to paid training courses, so there are options. Preparation time depends on where you start: if you're already working with Hadoop daily, maybe 4-6 weeks of focused study. Coming in cold, double that.

Success rates aren't published officially, but these exams have teeth. The hands-on format filters out people who just memorized dumps, which is honestly refreshing in the certification world. Enterprise Hadoop adoption strategies still lean on certified professionals because the skills map directly to production workloads. Market demand's shifted toward cloud, sure, but hybrid deployments keep Hadoop certification relevant.

Digital badges can be verified through Credly or similar platforms. It's also worth joining certification holder networks; the community resources actually help with career connections beyond just exam prep.

Hortonworks Certification Paths for Big Data Professionals

what these certs actually prove

Look, Hortonworks Certification Exams are about proof, not vibes. Hands-on. Timed. You either can build and troubleshoot data pipelines on Hadoop, or you can't. There's no middle ground here, and that's exactly why employers still list them in 2026 job descriptions even with "cloud-first" plastered everywhere. That's especially true when the stack includes Hive, Spark, Kafka, Ranger, or "HDP-like" managed services that basically mimic the old architecture.

The roadmap usually splits into developer, administrator, and data scientist tracks. The admin track covers cluster management and ops, which matters for context but is rarely the best first step if your day job's writing code or SQL. Data scientist sits kind of sideways: you still need dev fundamentals for data access, feature pipelines, and performance, then you layer ML on top. Different focus, same ecosystem.

developer track roadmap (from first exam to advanced)

The developer track's where most big data professionals should start. The core skills? Data ingestion, transformation, and query optimization, plus debugging when a job dies at 2 a.m. and the logs are, I mean, not friendly.

A practical big data developer certification roadmap goes like this: Hadoop fundamentals (HDFS, YARN, MapReduce concepts) then Hive and Pig for batch transformations, then Spark for compute patterns, then platform specifics like security, governance, and tuning. If you're coming from an ETL specialist background, you'll recognize the flow immediately. It's basically the same mental model with different tools. If you're a software engineer, the distributed systems mental model's the speed bump.

Prereqs and experience level, realistically:

Entry tier: 3 to 6 months around SQL or scripting, plus basic Linux.

Mid tier: 6 to 18 months building pipelines, reading logs, tuning queries.

Advanced: you've shipped production jobs, you've broken prod once, and you learned from it. That last part? Matters way more than people admit.

Time investment estimates (study plus exam): entry 25 to 40 hours, mid 50 to 90, advanced 90+ depending on how rusty your Hadoop internals are, or if you've ever actually touched them.

where Apache-Hadoop-Developer fits

The Apache-Hadoop-Developer (Hadoop 2.0 Certification exam for Pig and Hive Developer) is the classic entry-to-mid signal. It maps to the "I can actually do Pig and Hive development on Hadoop 2.0" level, not just talk about it at standup.

Your entry point depends on your background: programming-heavy or SQL-centric. If you're SQL-heavy, you'll like Hive fast, then you learn Pig patterns for transformations and workflows. The thing is, Pig syntax feels weird at first but clicks once you stop fighting it. I watched a guy with ten years of Oracle experience spend two weeks cursing at Pig before something flipped and he started writing cleaner transformations than our resident Spark evangelist. Sometimes the friction's the point. If you're code-heavy, you still need to respect how data layout and partitions change everything, and why query optimization isn't optional.

Study resources that help: official Hive/Pig docs, small labs on sample datasets, and a Hadoop developer exam preparation guide that forces you to write queries under time pressure. Also, yes, people search "Hortonworks exam practice questions and dumps", but build muscle memory in a sandbox first.

Dumps don't teach debugging.

HDPCD as platform-specific validation

HDPCD (Hortonworks Data Platform Certified Developer) is more platform-specific, and I've got mixed feelings about that. Exam code HDPCD signals you can deliver on Hortonworks HDP developer certification expectations, not just generic Apache basics, which matters in enterprises that still run HDP-ish distributions, or migrated but kept the same operating model, security controls, and governance patterns because changing those takes forever and nobody wants to own that project.

If you're choosing between vendor-neutral Apache certifications and HDP-specific credentials, ask what your target employers run. Finance and telecom tend to care about controlled platforms and governance. They want that audit trail. Retail might care more about speed and cloud integration. Healthcare sits in the middle, with compliance driving tooling choices.

stacking, cross-certification, and 2026 reality

Hortonworks certifications have the most career impact when you stack them. Pair Hadoop plus Spark plus a cloud provider credential (AWS, Azure, GCP) and you match how teams actually hire now: hybrid data platforms, managed services, and some legacy clusters that won't die no matter how many migrations get announced.

Role mapping helps:

Data engineer: Hadoop plus Spark plus cloud storage and IAM.

Analytics developer: Hive performance, modeling, BI-friendly datasets.

ETL specialist: ingestion tooling, scheduling, error handling, governance.

For migration paths, if you have legacy Hadoop certs, treat Apache-Hadoop-Developer as a refresh, then add HDPCD for "I can do this on your platform." Use a simple skill gap assessment: rate yourself 1 to 5 on ingestion, transformation, optimization, security, and ops, then pick the next exam that hits your weakest two, or the one your boss keeps mentioning. Quick framework. Very effective.
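That self-rating step is simple enough to write down. Here's a minimal Python sketch, assuming the five areas named above and a 1-to-5 scale; the function name `weakest_areas` and the sample ratings are hypothetical, and deciding which exam covers those areas is still your call.

```python
from typing import Dict, List


def weakest_areas(ratings: Dict[str, int], count: int = 2) -> List[str]:
    """Return the `count` lowest-rated skill areas (ties broken alphabetically)."""
    for area, score in ratings.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{area}: rating must be 1-5, got {score}")
    # Sort by score first, then by name, so the result is deterministic.
    ranked = sorted(ratings.items(), key=lambda item: (item[1], item[0]))
    return [area for area, _ in ranked[:count]]


# Hypothetical self-assessment across the five areas from the text.
my_ratings = {
    "ingestion": 3,
    "transformation": 4,
    "optimization": 2,
    "security": 1,
    "ops": 3,
}
print(weakest_areas(my_ratings))  # ['security', 'optimization']
```

Breaking ties by name is just there so two equally weak areas always come back in the same order.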

Hortonworks certification salary impact varies a lot by geography, and I mean a lot. US and Western Europe reward the cloud stack combo more. India and parts of APAC often value recognizable Hadoop credentials for services roles. Either way, what employers require in 2026 is simple: show you can ship pipelines, tune queries, and keep data reliable.

Everything else? Decoration.

Apache-Hadoop-Developer: Hadoop 2.0 Certification Exam for Pig and Hive Developer

What this exam actually tests

The Apache-Hadoop-Developer certification zeroes in on Pig and Hive development within the Hadoop 2.0 ecosystem. It's completely performance-based, meaning you're not clicking through multiple choice questions. You write code and solve real problems in a live environment where everything either works or it doesn't.

The exam typically includes around 8-12 hands-on tasks that you need to complete within a 2-hour window. You'll need to score at least 70% to pass.
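Here's the per-task arithmetic behind that format, as a rough Python sketch. It assumes every task carries equal weight (the exam doesn't promise that) and holds back a hypothetical 12-minute review reserve. Both function names are made up for illustration.

```python
def minutes_per_task(total_minutes: int, tasks: int, reserve_minutes: int = 12) -> float:
    """Minutes available per task after holding back a review reserve.

    The reserve is a personal habit, not an exam rule.
    """
    return (total_minutes - reserve_minutes) / tasks


def tasks_needed_to_pass(tasks: int, pass_percent: int = 70) -> int:
    """Minimum tasks to finish, ASSUMING equal task weights (integer ceiling)."""
    return (tasks * pass_percent + 99) // 100


for n in (8, 12):
    print(n, minutes_per_task(120, n), tasks_needed_to_pass(n))
# 8 13.5 6
# 12 9.0 9
```

At the 12-task end, you get roughly nine minutes per task and can afford to miss about three, which is why bailing on a stuck task early matters so much.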

This isn't theoretical stuff. You're sitting at a terminal writing Pig Latin scripts and HiveQL queries to transform datasets, load files, and solve actual data processing challenges. The testing environment gives you access to the Hadoop cluster, documentation, and your scripts. You can't Google your way through it.

Who should take this thing

This exam targets developers who're already working with data processing pipelines. If you're writing ETL jobs, transforming raw data into something usable, or building data warehouses on Hadoop, this certification validates those skills. It's designed for people who know their way around a command line and understand basic programming concepts.

You can register through the official Apache-Hadoop-Developer exam page, which walks you through the scheduling process and technical requirements. The technical setup is key: you need a stable internet connection and a compatible browser since you're connecting to a remote testing environment.

Core skills they're evaluating

Pig Latin scripting forms a massive chunk of this exam, like probably 40% or more of your total score depending on the task distribution. You need to know how to load data from various sources and apply transformations using operators like FILTER, GROUP, and JOIN. Understanding when to use UDFs instead of built-in functions matters here. Parameter substitution and macros come up too, especially in scenarios where you're building reusable pipeline components.
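To keep every example in one language, this sketch doesn't show Pig Latin syntax. Instead it mirrors, in plain Python, what FILTER, GROUP, and JOIN do to a relation, so the data shapes are clear. The sample relations and helper names are hypothetical. As in Pig, GROUP here yields one entry per key paired with a bag of the matching tuples.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List, Tuple

Row = Dict[str, Any]


def pig_filter(relation: List[Row], predicate: Callable[[Row], bool]) -> List[Row]:
    # FILTER: keep only the tuples for which the predicate holds.
    return [row for row in relation if predicate(row)]


def pig_group(relation: List[Row], key: str) -> List[Tuple[Any, List[Row]]]:
    # GROUP: one output entry per key value (Pig calls that field "group"),
    # paired with a bag of every input tuple that has that key.
    bags: Dict[Any, List[Row]] = defaultdict(list)
    for row in relation:
        bags[row[key]].append(row)
    return sorted(bags.items(), key=lambda item: str(item[0]))


def pig_join(left: List[Row], right: List[Row], key: str) -> List[Row]:
    # JOIN (inner): one merged tuple per matching pair. Right-hand fields win on
    # name clashes here; Pig would keep both, prefixed as alias::field.
    return [{**lrow, **rrow} for lrow in left for rrow in right if lrow[key] == rrow[key]]


# Hypothetical relations standing in for LOADed files.
orders = [{"user": "a", "amount": 30}, {"user": "b", "amount": 5}, {"user": "a", "amount": 12}]
users = [{"user": "a", "region": "EU"}, {"user": "b", "region": "US"}]

big_orders = pig_filter(orders, lambda r: r["amount"] >= 10)
totals = [(user, sum(r["amount"] for r in bag)) for user, bag in pig_group(big_orders, "user")]
enriched = pig_join(big_orders, users, "user")
print(totals)  # [('a', 42)]
print(enriched)
```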

HiveQL is the other major pillar. You're expected to write queries that go beyond basic SELECT statements. Partitioning strategies, bucketing for performance, creating and managing tables with different storage formats all get tested. The exam will definitely throw Avro, Parquet, and ORC file formats at you, and you need to know when each makes sense.
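Partitioning and bucketing are easier to reason about once you see what they do physically. Hive stores each partition as nested key=value directories under the table root. A table declared with CLUSTERED BY (col) INTO n BUCKETS sends each row to one of n bucket files based on a hash of the column. The Python sketch below uses a Java-style string hash purely for illustration. Hive's real bucketing hash depends on the column type and Hive version, and the paths and keys are hypothetical.

```python
from typing import Dict


def partition_path(table_root: str, partition: Dict[str, str]) -> str:
    # Each partition column becomes one key=value directory level, in declared order.
    parts = [f"{col}={val}" for col, val in partition.items()]
    return "/".join([table_root.rstrip("/")] + parts)


def java_string_hash(s: str) -> int:
    # Java-style String.hashCode (31-based, 32-bit signed overflow) for ASCII keys.
    # ILLUSTRATIVE ONLY: Hive's actual bucketing hash varies by type and version.
    h = 0
    for ch in s:
        h = (31 * h + ord(ch)) & 0xFFFFFFFF
    return h - (1 << 32) if h >= (1 << 31) else h


def bucket_for(key: str, num_buckets: int) -> int:
    # Clear the sign bit, then take the remainder: the same key always lands in
    # the same bucket file, which is what makes bucketed joins and sampling work.
    return (java_string_hash(key) & 0x7FFFFFFF) % num_buckets


print(partition_path("/warehouse/sales", {"dt": "2024-01-01", "region": "EU"}))
# /warehouse/sales/dt=2024-01-01/region=EU
print(bucket_for("hello", 4))  # 2
```

The practical takeaway is that a query filtering on dt only has to read one directory. Meanwhile, equal keys from two tables bucketed the same way line up in matching bucket files.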

I spent about three weeks just wrestling with file format conversions before I felt comfortable with them, which honestly felt excessive at the time but paid off during the actual exam.

Hadoop 2.0 architecture fundamentals

You can't just know Pig and Hive syntax. The exam assumes you understand how YARN manages resources, how HDFS stores data blocks, and how MapReduce jobs execute under the hood. This knowledge surfaces when you're debugging performance issues or understanding why a particular query's running slowly.

Join operations get tested heavily in both frameworks. Thing is, optimization matters more than just getting joins to work. You'll encounter scenarios requiring inner joins, left outer joins, and maybe even cross joins with proper optimization. Pig's COGROUP versus JOIN differences matter here, as do Hive's map-side joins versus reduce-side joins.
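The map-side versus reduce-side distinction comes down to where the matching happens. Hive's map join (what hive.auto.convert.join enables for small tables) and Pig's JOIN ... USING 'replicated' both load the small side into memory and stream the big side past it, skipping the shuffle. A reduce-side join shuffles both sides by key first. The Python sketch below simulates both strategies on in-memory lists; the function names and data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Pair = Tuple[str, str]  # (join_key, value)


def map_side_join(big: List[Pair], small: List[Pair]) -> List[Tuple[str, str, str]]:
    # Build an in-memory hash map from the small table (each mapper would hold a copy),
    # then stream the big table past it. No shuffle is needed.
    lookup: Dict[str, List[str]] = defaultdict(list)
    for key, value in small:
        lookup[key].append(value)
    return [(key, bval, sval) for key, bval in big for sval in lookup.get(key, [])]


def reduce_side_join(big: List[Pair], small: List[Pair]) -> List[Tuple[str, str, str]]:
    # Tag records by source and group them by key (this grouping is the shuffle),
    # then pair the two sides inside each key group.
    groups: Dict[str, Dict[str, List[str]]] = defaultdict(lambda: {"big": [], "small": []})
    for key, value in big:
        groups[key]["big"].append(value)
    for key, value in small:
        groups[key]["small"].append(value)
    out = []
    for key in sorted(groups):  # reducers receive keys in sorted order
        for bval in groups[key]["big"]:
            for sval in groups[key]["small"]:
                out.append((key, bval, sval))
    return out


clicks = [("u1", "click1"), ("u2", "click2"), ("u1", "click3"), ("u3", "click4")]
users = [("u1", "EU"), ("u2", "US")]
print(map_side_join(clicks, users))
print(reduce_side_join(clicks, users))
```

Both return the same inner-join rows; only the order and, on a real cluster, the network cost differ. The map-side version only works while the small table fits in memory.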

Advanced topics that separate pass from fail

Complex data types trip people up constantly. Working with arrays, maps, and structs in both Pig and Hive requires understanding schema definitions and nested field access patterns. File format conversion tasks appear frequently, like converting CSV to Parquet while preserving schema, or reading JSON into Hive tables with proper SerDe configuration.
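Nested field access is where the complex-type questions tend to bite. In Hive you'd declare ARRAY, MAP, and STRUCT columns, read the JSON through a SerDe, and flatten the array with LATERAL VIEW explode(tags) t AS tag. The Python analogue below assumes the rows are already parsed into dicts, and the field names are hypothetical. Like a plain LATERAL VIEW (not LATERAL VIEW OUTER), it drops records whose array is empty.

```python
import json
from typing import Any, Dict, List

raw = '{"user": {"id": 7, "name": "ana"}, "tags": ["etl", "hive"], "attrs": {"tier": "gold"}}'


def explode_tags(record: Dict[str, Any]) -> List[Dict[str, Any]]:
    # Mirrors: SELECT user.id, attrs['tier'], tag FROM t LATERAL VIEW explode(tags) x AS tag
    rows = []
    for tag in record.get("tags", []):  # explode(): one output row per array element
        rows.append({
            "user_id": record["user"]["id"],      # struct field access: user.id
            "tier": record["attrs"].get("tier"),  # map lookup: attrs['tier']
            "tag": tag,
        })
    return rows  # an empty array yields no rows, like a plain LATERAL VIEW


print(explode_tags(json.loads(raw)))
```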

Performance tuning shows up in almost every task. Nobody wants slow pipelines in production environments. You need to know combiner usage in Pig, parallel execution settings, and memory management configurations.
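A combiner pays off by shrinking what crosses the network. It pre-aggregates each map task's output before the shuffle, which is only safe when the operation is associative and commutative, like summing counts. Pig can apply a combiner automatically when the aggregates are algebraic, such as COUNT and SUM. The Python sketch below treats both sample lines as a single map task's input split, which is an assumption made to keep it small.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def map_words(lines: Iterable[str]) -> List[Tuple[str, int]]:
    # Mapper: emit (word, 1) for every word in the split.
    return [(word, 1) for line in lines for word in line.split()]


def combine(pairs: List[Tuple[str, int]]) -> List[Tuple[str, int]]:
    # Combiner: sum counts locally, on the mapper, before the shuffle.
    totals: Counter = Counter()
    for word, count in pairs:
        totals[word] += count
    return sorted(totals.items())


split = ["to be or not to be", "to be is to do"]  # hypothetical input split
raw = map_words(split)
combined = combine(raw)
print(len(raw), "records without combiner,", len(combined), "with combiner")
# 11 records without combiner, 6 with combiner
```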

Integration scenarios might ask you to connect Pig or Hive with HBase tables or process streaming data sources.

Preparing without wasting time

Six to eight weeks works for most developers who already have basic Hadoop exposure. Set up the Hortonworks Sandbox or spin up a cluster on AWS or Azure for hands-on practice. The official Apache documentation for Pig and Hive should be your reference point. Get comfortable working through it since you'll have access during the exam.

Practice with realistic datasets. Download public data, create messy scenarios, and force yourself to solve them. Time yourself too. Task completion speed matters when you've got 12 problems and 120 minutes.

If you're comparing certification paths, the HDPCD exam covers broader Hadoop ecosystem tools, while Apache-Hadoop-Developer drills deep into Pig and Hive specifically. Choose based on your actual job responsibilities.

HDPCD: Hortonworks Data Platform Certified Developer Exam

what this exam actually is

HDPCD's the platform-focused hands-on option among the Hortonworks Certification Exams. Honestly, it's for people writing actual apps and pipelines on Hortonworks Data Platform, not the folks who just wanna talk theory and call it a day. You're building stuff, debugging production meltdowns, and proving you can ship code that doesn't explode when it hits the cluster. Long hours. Real pressure.

Officially, the exam code is HDPCD (Hortonworks Data Platform Certified Developer), and registration and exam details are routed through the HDPCD exam page. So if you're comparing Hortonworks certification paths, trying to figure out which one actually matters for your career trajectory and whether it'll move the needle on interviews, start there and work outward.

who should take it (and who shouldn't)

This one's aimed squarely at developers building on HDP services, writing code, wiring ingestion pipelines, and debugging why a job died at 2 a.m. when you're three coffees deep and the logs are gibberish. If your day job's mostly SQL and dashboards? You might still pass. But you'll feel that time pressure hard because the format's "do the work" and not "pick the right answer from five options where two are obviously wrong."

The thing is, this is also where the distinction from the Apache-Hadoop-Developer (Hadoop 2.0 Certification exam for Pig and Hive Developer) actually matters a ton. The Apache one's closer to pure Apache project skills like Pig and Hive fundamentals: command syntax, query optimization, that whole universe. HDPCD cares about HDP tooling and how it behaves together, with Ambari dashboards, Ranger policies, and the platform defaults you'll actually see in enterprise clusters where someone already made architectural choices you're inheriting.

I once watched a colleague bomb this exam because he spent three years on Cloudera's stack and assumed the platforms were basically identical. They're not. The small differences killed him.

format, timing, and pass rules

HDPCD's performance-based. Period.

You get hands-on scenarios inside a live HDP cluster environment where no fluff exists: you either make the task work correctly or you don't. There's no partial credit for "well, I almost had the right command." Duration's typically 2.5 hours, with around 10 to 12 tasks depending on which exam version you draw, and a minimum passing score hovering around 70%. Honestly, though, passing feels way less about "did you memorize commands" and way more about "can you finish enough tasks before the clock completely eats you alive."

Testing infrastructure varies wildly. Some candidates do remote proctoring from home, others trek to a test center, and the annoying part is that you must verify your workstation specs, webcam rules, ID protocols, and network stability ahead of time, because losing connectivity mid-lab is the absolute fastest way to torch an attempt and waste your registration fee.

what you'll be doing in the cluster

Core domains are exactly what a Hortonworks HDP developer certification expects: HDFS operations, YARN application development basics, and data ingestion patterns that match real pipelines you'd build for a client who's breathing down your neck about delivery timelines. Expect MapReduce requirements too. Actual Java-based mapper and reducer development with proper input/output formats, not pseudocode explanations, and definitely not just "explain MapReduce in three bullet points."
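The exam wants real Java Mapper and Reducer classes. This Python sketch is not Hadoop's API; it only simulates the three phases (map, shuffle/sort, reduce) on one machine so you can see how data flows between them. The max-temperature-per-year task and the "station,year,temperature" record format are hypothetical.

```python
from itertools import groupby
from operator import itemgetter
from typing import Iterable, Iterator, List, Tuple

KV = Tuple[str, int]


def mapper(line: str) -> Iterator[KV]:
    # Hypothetical input record format: "station,year,temperature"
    _station, year, temp = line.strip().split(",")
    yield year, int(temp)


def reducer(key: str, values: Iterable[int]) -> KV:
    # Receives every value for one key, already grouped by the shuffle.
    return key, max(values)


def run_job(lines: Iterable[str]) -> List[KV]:
    mapped = [kv for line in lines for kv in mapper(line)]  # map phase
    mapped.sort(key=itemgetter(0))                          # shuffle + sort by key
    return [reducer(key, (v for _, v in group))             # reduce phase
            for key, group in groupby(mapped, key=itemgetter(0))]


records = ["s1,2023,31", "s2,2023,28", "s1,2024,35", "s3,2024,-2", "s2,2024,33"]
print(run_job(records))  # [('2023', 31), ('2024', 35)]
```

The sort step is the part people forget: a reducer can only see all values for a key together because the framework sorts and groups map output by key first.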

Spark shows up as well. RDD operations, DataFrames, basic transformations where you're reading data, applying filters and aggregations, and writing it back correctly without accidentally blowing up partitions or creating ten thousand tiny files that make your ops team hate you.
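The tiny-files problem is mostly arithmetic: Spark writes roughly one file per partition, so the partition count at write time sets the file count. Here's a rough Python sketch that picks a partition count for a target file size. The 128 MB target (the Hadoop 2 default HDFS block size) and the 40 GB job are assumptions. In PySpark you would pass the result to DataFrame.coalesce() or DataFrame.repartition() before writing.

```python
MB = 1024 * 1024


def output_partitions(total_bytes: int, target_file_bytes: int = 128 * MB) -> int:
    """Partitions needed so each output file lands near the target size (at least 1)."""
    return max(1, -(-total_bytes // target_file_bytes))  # integer ceiling division


# Hypothetical job: 40 GB of curated output currently spread over 10,000 partitions.
total = 40 * 1024 * MB
current_partitions = 10_000
print(total // current_partitions // MB, "MB per file now")    # 4 MB per file now
print(output_partitions(total), "partitions at ~128 MB each")  # 320 partitions at ~128 MB each
```

coalesce() avoids a full shuffle when reducing the partition count, while repartition() shuffles but evens out skewed partitions. Which one fits depends on how lopsided the data is.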

Ingestion tools matter a lot: Sqoop for relational database imports and exports with incremental update patterns, Flume configuration for log aggregation from distributed sources, Kafka integration for real-time feeds that can't afford lag, and Oozie workflow development for scheduling and dependency control so jobs run in the right sequence. HBase design also comes up, with table design decisions and access patterns for NoSQL cases where row key design makes or breaks query performance. Plus Avro schema design and evolution so your data formats don't break catastrophically the moment someone adds a field upstream.
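Sqoop's incremental append mode (--incremental append with --check-column and --last-value) imports only rows whose check column exceeds the last recorded value, then gives you a new high-water mark for the next run. The Python sketch below mimics just that bookkeeping against an in-memory table. It does not call Sqoop, and the function name and rows are hypothetical.

```python
from typing import Any, Dict, List, Tuple

Row = Dict[str, Any]


def incremental_append(source: List[Row], check_column: str, last_value: int) -> Tuple[List[Row], int]:
    # Pull only rows newer than the stored watermark (like --incremental append).
    new_rows = [row for row in source if row[check_column] > last_value]
    # Next run starts from the highest value seen; unchanged if nothing was new.
    next_value = max((row[check_column] for row in new_rows), default=last_value)
    return new_rows, next_value


orders = [{"id": 1, "amt": 10}, {"id": 2, "amt": 20}, {"id": 3, "amt": 5}]
batch, watermark = incremental_append(orders, "id", 0)           # first run: all 3 rows
orders.append({"id": 4, "amt": 7})
batch2, watermark2 = incremental_append(orders, "id", watermark)  # second run: only id 4
print(len(batch), watermark, [r["id"] for r in batch2], watermark2)  # 3 3 [4] 4
```

Append mode never notices updates to rows it already imported. Sqoop's lastmodified mode, keyed on a timestamp column, exists for that case.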

Security isn't ignored either. Kerberos concepts that trip up half the developers who've never touched it, Ranger policy basics for access control, Ambari familiarity for service health checks and config tweaks. Troubleshooting's constant, so log analysis, common HDP-specific misconfig issues, and performance tuning within HDP constraints all count toward your score, plus version-specific compatibility gotchas that'll absolutely wreck your day if you're not prepared.

prep that doesn't waste your time

Use Hortonworks' official docs and tutorials as your base study resources, then practice extensively in an HDP Sandbox where you can break things without career consequences and rebuild them until muscle memory kicks in. Do it repeatedly. Seriously.

Project ideas that mirror task complexity work best: build an end-to-end ETL pipeline that lands raw logs via Flume, stages them in HDFS with proper directory structure, transforms with Spark using realistic business logic, writes curated data to a final location, and schedules the whole thing with Oozie so it runs nightly. Then add a data quality check step and an Avro conversion phase so you get super comfortable with format conversions and debugging "why did my schema fail" errors at 11 p.m. Keep a small code repo with reference implementations you can retype fast under pressure, because you absolutely won't have time to reinvent patterns or Google syntax during the exam when every minute's precious.
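For the data quality check step in that practice pipeline, a gate can be as simple as "required fields present, enough rows, few rejects." Here's a minimal Python sketch, assuming records arrive as dicts. The thresholds (at least 1 row, at most 5% rejects) and the field names are hypothetical. In the Oozie version, a failed gate would route the workflow to its error path instead of continuing to the Avro step.

```python
from typing import Dict, List, Optional, Tuple

Record = Dict[str, Optional[str]]


def quality_gate(records: List[Record], required: List[str],
                 min_rows: int = 1, max_reject_ratio: float = 0.05) -> Tuple[bool, List[Record]]:
    """Keep rows with all required fields; fail the batch on too few rows or too many rejects.

    Thresholds are hypothetical defaults, not values from any specific tool.
    """
    good = [r for r in records if all(r.get(f) not in (None, "") for f in required)]
    rejects = len(records) - len(good)
    reject_ratio = rejects / len(records) if records else 1.0
    passed = len(good) >= min_rows and reject_ratio <= max_reject_ratio
    return passed, good


logs: List[Record] = [{"ts": "2024-01-01T00:00:00", "host": "web1", "status": "200"} for _ in range(19)]
logs.append({"ts": None, "host": "web2", "status": "500"})  # one bad row out of 20 = 5%
ok, clean = quality_gate(logs, ["ts", "host"])
print(ok, len(clean))  # True 19
```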

For Hortonworks exam practice questions and dumps, be really careful here. I mean, use practice platforms for timing drills and topic coverage gaps, but don't depend on memorized answers like it's a multiple-choice test, because HDPCD's task execution where the environment's slightly different every time and you need actual comprehension.

The recommended study duration is 8 to 10 weeks with consistent hands-on access, not just reading. A typical split:

Weeks 1-2: HDFS commands, YARN basics, MapReduce fundamentals.

Weeks 3-4: Spark operations, Sqoop transfers, Avro schemas.

Weeks 5-6: Flume agents, Kafka topics, Oozie coordinators.

Week 7: HBase tables, Ranger policies, Kerberos authentication, Ambari navigation.

Week 8: mock exams under time pressure, gap analysis where you bombed, and time allocation practice across different task types so you know when to bail and move on.

Pre-exam checklist: confirm environment requirements, tool familiarity, time zone coordination if you're remote, and a backup connectivity plan. Honestly, maybe a mobile hotspot if your home internet's flaky. Score reports usually break down performance by domain so you see exactly where you struggled, and certificate issuance often takes a few business days. Store the digital credential somewhere sane: it's what recruiters check, what you'll point to in salary negotiations, and a milestone on your big data developer certification roadmap as the field keeps evolving.

Hortonworks Exam Difficulty Ranking and Comparison

Comparing difficulty between Hortonworks certification exams

Here's the thing. Not all Hortonworks certification exams are built the same, y'know? The Apache-Hadoop-Developer and HDPCD exams test completely different skill sets, and honestly, which one's gonna wreck you depends entirely on what you're already good at when you walk in.

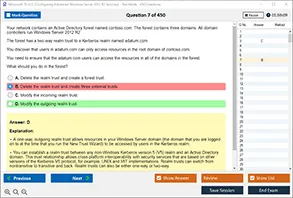

The main difficulty across both exams comes from hands-on performance requirements. You're not picking answers from a list. You're actually working in a live cluster environment, writing code, debugging issues, and producing results that either work or don't. There's no partial credit for "kind of right" when your Pig script fails to execute.

What makes the Apache-Hadoop-Developer exam challenging

Real talk? The Apache-Hadoop-Developer certification demands deep expertise in Pig and Hive specifically. I mean, really deep knowledge here. You've gotta know the details of Pig Latin syntax, understand how to optimize queries, and handle edge cases in data transformations that most people never encounter in regular work. If you've got a strong SQL background, the Hive sections might feel more natural, but Pig has its own learning curve. It catches people completely off guard.

Time pressure becomes brutal. Say you're debugging a complex multi-step workflow with only a couple of hours to complete every task. If you spend 30 minutes stuck on one problem because you can't figure out why your JOIN isn't producing the expected results, that eats into everything else. Suddenly you're scrambling.

HDPCD breadth versus depth tradeoff

The HDPCD exam takes a different approach entirely. Instead of going super deep on two tools, it tests your ability to work across multiple technologies in the Hadoop ecosystem. MapReduce programming, YARN configuration, data ingestion patterns. You need to context-switch between different tools quickly.

Candidates with programming backgrounds tend to perform better. Writing efficient map and reduce functions requires actual coding skills, not just query writing abilities. But then you also need to understand cluster architecture, which is a completely different skill set. Doesn't overlap much.

Environmental complexity adds another layer

Both exams throw you into unfamiliar cluster configurations, honestly. You're not working in your comfortable development environment with your preferred IDE and debugging tools. You get what you get, and troubleshooting time's limited. I've heard from people who lost significant time just figuring out file system permissions or even locating the input data directories.

This reminds me of a buddy who spent 20 minutes on exam day trying to remember the exact hdfs dfs syntax for a recursive directory listing (hdfs dfs -ls -R) because the cluster didn't have his usual aliases set up. Twenty minutes. On something he did every single day at work, just with slightly different flags.

How prior experience shapes perceived difficulty

Someone coming from a traditional database administration background might find the Apache-Hadoop-Developer exam more approachable. Why? Because Hive feels somewhat familiar. Throw them into HDPCD's MapReduce section though and they're lost.

Conversely, Java developers often breeze through HDPCD programming tasks but struggle with the declarative query optimization required for Apache-Hadoop-Developer. Neither exam's objectively harder. They're hard in different ways depending on who you are.

Learning curve and certification sequencing

If you're planning to take both exams, starting with Apache-Hadoop-Developer makes sense for most people, I'd say. It builds foundational understanding of how data flows through Hadoop ecosystem tools. Then HDPCD expands that knowledge into lower-level programming and broader platform understanding.

Pass rate statistics aren't published, but anecdotal reports consistently suggest that candidates who spend time on actual hands-on projects before attempting either exam perform way better than those who rely solely on study materials. Not gonna lie, you can't cram your way through a performance-based exam the way you might with traditional certifications. Just doesn't work.

Folks commonly fail because of poor time management, not enough debugging practice, or knowledge gaps in specific tool features, the ones that don't come up in typical development work but appear prominently in exam scenarios. Retakes reportedly run higher than for many vendor-neutral certifications, which tells you something about the rigor involved here.

Compared to Cloudera or cloud platform big data certifications from AWS and Azure? Hortonworks exams maintain a reputation for being more hands-on intensive. Industry folks generally view that as adding credibility to the credentials.

Career Impact of Hortonworks Certifications in 2026

what the credentials actually signal in 2026

Hadoop's "dead"? Nah. Only if you've never actually supported production clusters, honestly. Tons of enterprises still run HDP-based stacks because data gravity's a beast, pipelines are already paid for, and cloud migration drags on for years, not weeks. That's why Hortonworks Certification Exams keep popping up in hiring conversations throughout 2026, even with all the Spark, Lakehouse, and managed cloud services noise.

Hiring managers? They read Hortonworks certs as proof you can operate in the messy middle. Not just write clean code, but actually work inside YARN queues, bizarre HDFS permissions, Hive metastore weirdness, and production SLAs that matter. Experience trumps paper, sure. But when two candidates look similar on paper, certification becomes the differentiator. Especially for remote roles where trust gets built from resume signals and LinkedIn breadcrumbs.

roles that open up from developer certs

A Hortonworks developer credential lines up with specific titles. Hadoop Developer's the obvious one. Big Data Developer too. Data Engineer, sometimes. Analytics Engineer at companies still treating Hive as their semantic layer.

Most direct mapping? The Apache-Hadoop-Developer (Hadoop 2.0 Certification exam for Pig and Hive Developer) because it screams "I ship ETL on Hadoop." Pig and Hive skills still matter for batch processing, backfills, auditability, and those untouchable finance workflows where nobody dares change anything. ETL Developer roles keep appearing in banks, insurers, healthcare, retail. Log analytics, adtech reporting, manufacturing telemetry. All that unglamorous stuff that actually runs businesses.

Then there's HDPCD (Hortonworks Data Platform Certified Developer). That one fits teams wanting hands-on HDP platform comfort, not just query-writing ability. If you're targeting Data Engineer roles stressing Hortonworks ecosystem chops, HDPCD reads way stronger.

what job postings are asking for now

Big Data Developer postings in 2026? Still list the classics: Hive, HDFS, YARN, Kerberos, plus Python and some Spark mixed in. The "certification preferred" line appears constantly in enterprise reqs, way less in startups. Startups care if you can build fast and debug at 2 a.m. They'll trade certs for GitHub proof any day. Enterprises love checkboxes. Procurement loves 'em too.

The Hadoop Developer role has evolved in one clear direction: less greenfield MapReduce, more integration work. You're expected to understand orchestration (Airflow), data quality frameworks, and cloud adjacency because lots of shops are mid-migration, running hybrid setups where certified folks get pulled into planning. Project leadership opportunities emerge because you can translate old HDP jobs into new patterns without breaking audits or compliance.

I watched a colleague get promoted last year purely because she could bridge the vocabulary gap between the old guard who built everything in MapReduce and the new architects pushing Databricks. That translation skill? Underrated.

pay, mobility, and where the demand clusters

Hortonworks certification salary bumps? Real but uneven. Contractors and consultants can price higher when the client's stuck on HDP and needs someone tomorrow. Full-time roles pay more when the cert's paired with production war stories. Proof matters most.

Geographic hotspots still track big enterprise density: US finance hubs, government-adjacent regions, major EU banking centers. Remote work opportunities are solid for maintenance gigs, migration projects, and platform reliability work. Freelance and consulting markets stay steady because "nobody wants to own the old cluster" is a recurring theme.

resume, ATS, and linkedin tweaks that actually work

Put the cert in three places. Top summary. Skills section. And under a dedicated "Certifications" block with exam codes like HDPCD and "Apache Hadoop Developer Hortonworks exam." ATS optimization's boring but it works: include "Hortonworks HDP developer certification," "Hadoop 2.0 Pig and Hive developer certification," and "Hortonworks certification paths" if you've followed a big data developer certification roadmap.
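If you want to check a resume for those phrases before an ATS does, a plain substring check gets you most of the way. This is a minimal Python sketch: the keyword list comes from the paragraph above, the function name is hypothetical, and real ATS parsers are fuzzier and vendor-specific, so treat it as a smoke test only.

```python
from typing import List

# Phrases taken from the text above; extend with your own target terms.
KEYWORDS = [
    "Hortonworks HDP developer certification",
    "Hadoop 2.0 Pig and Hive developer certification",
    "HDPCD",
]


def missing_keywords(resume_text: str, keywords: List[str]) -> List[str]:
    # Case-insensitive substring match; an approximation, not how any specific ATS works.
    text = resume_text.lower()
    return [kw for kw in keywords if kw.lower() not in text]


resume = "Certifications: HDPCD (Hortonworks Data Platform Certified Developer)"
print(missing_keywords(resume, KEYWORDS))
```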

One warning though. Don't stuff "Hortonworks exam practice questions and dumps" anywhere. That reads shady. Instead, talk about Hortonworks certification study resources and Hortonworks certification training materials, then point to an actual project. On LinkedIn, add the credential, pin a Hive optimization post, describe impact in numbers. Hiring managers want outcomes, not war stories about how hard the exam was.

Hortonworks Certification Salary Expectations and Compensation Analysis

What certified Hadoop professionals actually make in 2026

Here's the deal: salary data for Hortonworks certified professionals in 2026 is scattered everywhere, but patterns emerge when you dig into it. Entry-level Hadoop developers with certification typically start between $75,000 and $95,000 in the US market. Not exactly mind-blowing cash, but respectable for someone breaking into big data work. The Apache-Hadoop-Developer credential gives you a legitimate edge here because employers recognize you can write Pig scripts and Hive queries in real scenarios, not just regurgitate theory.

Mid-career Big Data Developers? We're looking at $110,000 to $145,000 depending on what you actually bring beyond the cert. Senior Data Engineers with Hortonworks credentials push into the $150,000 to $190,000 range. Sometimes higher if you're positioned in the right city and industry. I mean, these figures shift dramatically based on where you live and what sector you're working in.

Location makes a massive difference

San Francisco and New York remain the top-paying metros for certified Hadoop professionals. No surprise there. San Francisco can add 35-45% to base salaries compared to national averages, which is insane when you think about it. Seattle and Austin offer premiums too, maybe 20-30% above baseline, though Austin's getting crowded lately and that premium's shrinking fast. An HDPCD certification holder in San Francisco might pull $160k mid-career while the same person in Dallas gets $115k for similar work. Same skills, wildly different numbers.
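To see how the Dallas example lines up with the quoted range, here's the premium arithmetic as a tiny Python sketch. It treats Dallas as the baseline, which is an assumption: the 35-45% figure is quoted against national averages, and Dallas isn't necessarily the national average.

```python
def location_premium(local_salary: float, baseline_salary: float) -> float:
    """Percentage by which local_salary exceeds baseline_salary."""
    if baseline_salary <= 0:
        raise ValueError("baseline_salary must be positive")
    return (local_salary / baseline_salary - 1) * 100


if __name__ == "__main__":
    # Figures from the example above: $160k in San Francisco vs $115k in Dallas.
    premium = location_premium(160_000, 115_000)
    print(f"San Francisco over Dallas: {premium:.1f}%")  # -> San Francisco over Dallas: 39.1%
```

That ~39% sits inside the 35-45% band, so the example is at least internally consistent.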

Europe shows patterns that don't always make sense. London pays well, but the cost of living devours it. Berlin and Amsterdam offer decent compensation with better quality-of-life trade-offs, which matters more than people admit. My cousin moved to Amsterdam last year and she's basically living better on less money, if that tells you anything. Asia-Pacific varies wildly. Singapore and Sydney pay competitively with US markets, but in emerging markets like India and the Philippines, ranges of $25,000 to $55,000 are typical even with certification, which feels low but reflects local economies.

The certification premium is real but contextual

Here's what nobody tells you straight, and it matters: certified Hadoop developers earn roughly 12-18% more than non-certified peers in most markets. Not life-changing money, but on a mid-career salary that's $12,000 to $20,000 a year, which adds up. The percentage premium is higher early in your career, maybe 20-25%, because the cert proves baseline competency when you've got limited experience to show. Employers value that proof.
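The dollar figure depends on which salary the percentage is applied to. This sketch assumes the premium sits on top of a non-certified mid-career salary somewhere between $100,000 and $110,000; those bases are an illustrative assumption, not survey data.

```python
def premium_dollars(non_certified_salary: float, premium_pct: float) -> float:
    """Dollar value of a percentage premium on top of a non-certified salary."""
    return non_certified_salary * premium_pct / 100


if __name__ == "__main__":
    # Assumed non-certified mid-career salaries (illustrative, not survey data).
    for base in (100_000, 110_000):
        low = premium_dollars(base, 12)
        high = premium_dollars(base, 18)
        print(f"base ${base:,}: +${low:,.0f} to +${high:,.0f}")
```

Across those two bases the premium spans $12,000 to $19,800, which is consistent with the rounded $12,000 to $20,000 figure.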

But certification alone won't carry you, let's be real. Company size matters hugely in ways most people underestimate. Big tech firms (think FAANG-adjacent) pay 30-40% more than mid-size companies for identical roles. Same job title, same responsibilities, totally different comp. Finance and technology sectors outpay healthcare and retail by similar margins. A certified developer at a major bank in New York might make $155k while someone equally skilled at a healthcare startup in Phoenix makes $95k, which seems unfair but reflects market realities.

Skills stacking multiplies your value

Pairing your Hadoop skills with cloud skills is where salaries really jump. I mean really jump. If you combine Hortonworks certifications with AWS or Azure expertise, you're looking at potential 25-35% salary bumps. Programming chops in Scala or Python alongside your Hadoop skills? Another 15-20% premium right there. Add a Spark certification to your Hortonworks credentials and you become way more marketable: we're talking $20,000 to $35,000 in additional compensation at senior levels, which changes your lifestyle options considerably.

Contract positions typically pay 1.5x to 2x the hourly equivalent of permanent roles, but you lose benefits and stability. That's a trade-off worth considering carefully. Remote work has created geographic arbitrage opportunities that didn't exist before. Some companies pay based on role rather than location, letting you earn SF wages while living in lower-cost areas. Honestly brilliant if you can swing it.
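To compare a contract rate against a salaried offer, you need the salary's hourly equivalent. This sketch assumes the common 2,080-hour convention (40 hours x 52 weeks) and an illustrative $130,000 salary. The 1.5x to 2x multiplier is the range quoted above; it's usually meant to offset the benefits and paid time off a contractor gives up.

```python
HOURS_PER_YEAR = 40 * 52  # 2,080 hours: a common full-time convention, ignoring vacation


def salary_to_hourly(annual_salary: float) -> float:
    """Hourly equivalent of a salary under the 2,080-hour convention."""
    return annual_salary / HOURS_PER_YEAR


def contract_rate_range(annual_salary: float, low_mult: float = 1.5,
                        high_mult: float = 2.0) -> tuple[float, float]:
    """Contract hourly rates implied by applying the quoted multipliers to the salaried rate."""
    hourly = salary_to_hourly(annual_salary)
    return hourly * low_mult, hourly * high_mult


if __name__ == "__main__":
    salary = 130_000  # illustrative mid-career salary
    low, high = contract_rate_range(salary)
    print(f"${salary_to_hourly(salary):.2f}/h salaried -> ${low:.2f} to ${high:.2f}/h contract")
    # -> $62.50/h salaried -> $93.75 to $125.00/h contract
```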

ROI calculation and career trajectory

Quick math here. Hortonworks certification exams cost around $250 to $350. If certification bumps your salary even $8,000 a year, you recoup the exam fee in about two weeks of pre-tax pay, ignoring study time and any course fees. Not gonna lie, that's solid ROI by any measure. Over a five-year span, certified professionals typically see 40-60% total compensation growth versus 25-35% for non-certified peers in similar roles. That compounds into a substantial difference.
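Here's that math as a small Python sketch. It assumes a pre-tax raise spread evenly over 52 weeks, ignores study time and course fees, and uses an illustrative $100,000 starting figure for the five-year comparison. The growth percentages are the ranges quoted above, not guarantees.

```python
WEEKS_PER_YEAR = 52


def payback_weeks(exam_cost: float, annual_raise: float) -> float:
    """Weeks of a pre-tax raise needed to cover the exam fee."""
    return exam_cost * WEEKS_PER_YEAR / annual_raise


def five_year_comp(start: float, growth_pct: float) -> float:
    """Compensation after a total (not annual) growth percentage over five years."""
    return start * (100 + growth_pct) / 100


if __name__ == "__main__":
    for cost in (250, 350):
        print(f"${cost} exam, $8,000 raise: {payback_weeks(cost, 8_000):.1f} weeks")
    # -> 1.6 weeks and 2.3 weeks

    start = 100_000  # illustrative starting compensation
    print(f"certified:     ${five_year_comp(start, 40):,.0f} to ${five_year_comp(start, 60):,.0f}")
    print(f"non-certified: ${five_year_comp(start, 25):,.0f} to ${five_year_comp(start, 35):,.0f}")
```

On that illustrative $100,000 start, the five-year gap lands anywhere from $5,000 to $35,000 depending on where in each range you end up.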

Total comp matters beyond base salary, something junior folks often overlook. Certified professionals at established tech companies often get 15-25% of their compensation from bonuses and equity, which can dwarf the salary differences above. Startups offer bigger equity percentages, but with obvious risk: that equity either pays off big or evaporates completely.
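If 15-25% of total comp is bonus and equity, base salary is the remaining 75-85%, so total = base / (1 - share). A minimal sketch with an illustrative $150,000 base (an assumption, picked from the senior range above):

```python
def total_comp_from_base(base_salary: float, variable_share: float) -> float:
    """Total compensation when `variable_share` (0..1) of it comes from bonus and equity.

    If base = total * (1 - share), then total = base / (1 - share).
    """
    if not 0 <= variable_share < 1:
        raise ValueError("variable_share must be in [0, 1)")
    return base_salary / (1 - variable_share)


if __name__ == "__main__":
    base = 150_000  # illustrative senior base salary
    for share in (0.15, 0.25):
        total = total_comp_from_base(base, share)
        print(f"{share:.0%} variable: total ${total:,.0f}, of which ${total - base:,.0f} bonus/equity")
```

At the top of that range, a $150,000 base implies $200,000 total, so $50,000 comes from bonus and equity.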

Best Study Resources for Hortonworks Certification Exams

start with the official stuff

Look, for Hortonworks Certification Exams, the fastest way to stop guessing is to read what Hortonworks actually tested people on. The official Hortonworks documentation plus the learning portal is where you get the "this is how HDP expects it to work" view, not some random blog post opinion.

The docs can feel dry, but they're packed with the exact config flags, command behavior, and feature limits that show up when you're under time pressure on a practical exam, especially on developer tracks like the HDPCD exam (Hortonworks Data Platform Certified Developer) and the older Apache-Hadoop-Developer objectives. Sometimes I'll skim a section three times before it clicks, then suddenly everything makes sense.

Also grab the Hortonworks HDP reference architecture documents. They're gold for explaining why components are wired together the way they are, which helps when a task is really an integration problem wearing a "write a query" costume. Skim first. Then reread with a pen, or a highlighter tool if you're reading digitally.

apache docs beat most courses

Don't skip the Apache project documentation. Hadoop, Hive, Pig, Spark. Official guides. They're not pretty, but they're accurate, and accuracy matters more than vibes when you're trying to remember the exact HiveQL syntax for partitions or why your Pig UDF's failing on the classpath.

For Apache-Hadoop-Developer exam content (the Hadoop 2.0 Pig and Hive developer certification), the Pig and Hive docs plus the Apache wiki pages are your baseline. Community tutorials help, sure, but verify everything against the upstream docs because outdated advice is everywhere. I've wasted hours on deprecated solutions that looked legit but weren't even close to current best practices.

paid training is fine, just pick carefully

Udemy, Coursera, Pluralsight all have decent Hortonworks certification study resources, but course quality swings wildly. Real talk. Look for ones that include actual labs, not just slides, and that cover exam-style tasks like loading data, writing transformations, debugging jobs, and interpreting logs.

Hortonworks official training courses and instructor-led options are expensive, but they're the closest match to how the exam thinks. If your employer pays, take it. If you're paying, weigh it against your budget and your timeline, because a cheaper course plus lots of lab time often wins on ROI.

Bootcamps exist too. Some are legit. Some are total fluff. I tried one once that was basically recycled YouTube videos with a fancy landing page.

labs and environments that feel like the exam

Hands-on lab platforms matter more than another playlist. Start with Hortonworks Sandbox and build muscle memory: HDFS paths, Hive metastore quirks, Pig scripts, permission errors. Break it on purpose. Fix it.

Cloud-based practice environments like AWS EMR and Azure HDInsight are solid alternatives when your laptop can't handle a VM, and they sneak in cloud skills that help your career and your salary conversations later. Docker containers are great for local dev, but Hadoop-in-Docker can get weird, so treat it as practice, not as a faithful copy of a real cluster. Still useful. Virtual machines configured to mimic exam constraints are closer to reality, though I've found that spinning up a full HDP cluster locally teaches you way more about what can break during testing than any sanitized tutorial environment ever will.

practice questions, ethics, and community help

Practice exam providers and mock test platforms help with timing and with gauging how hard the exam really is, but evaluate their quality. Are the explanations technical? Are the answers consistent with current docs? Do the questions look like real tasks instead of trivia? As for exam dumps: they're an ethical mess and a fast way to learn the wrong thing, so I skip them and focus on legit mocks plus labs.

GitHub repositories with sample code and solutions are great for pattern learning. Stack Overflow's even better if you search by error message, then reproduce the fix in your own lab. For the Hortonworks community forum, don't just post "help pls". Post configs, logs, what you tried, and the smallest repro. Nobody's got time to debug your entire pipeline from a vague description.

quick resource stack and study plans

Books still help. Pick one Hadoop fundamentals book, one Hive-focused reference, and one hands-on HDP guide for the HDPCD (exam code varies by version, but the objective style's consistent). Add YouTube tutorial series for quick refreshers, plus cheat sheets for Pig Latin and HiveQL, and a flashcard system for commands, file formats, and syntax.
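For the flashcard piece, one simple option is the Leitner system: cards you answer correctly move to a box that's reviewed less often, and misses go back to the first box. This is a swapped-in technique, not something the exams prescribe, and the interval schedule (1, 2, 4, 8, 16 days) is an assumption you should tune. The card contents are sample HiveQL and Pig Latin snippets.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Review interval in days for each Leitner box; these values are an assumption, tune to taste.
BOX_INTERVALS = [1, 2, 4, 8, 16]


@dataclass
class Card:
    prompt: str
    answer: str
    box: int = 0  # index into BOX_INTERVALS
    due: date = field(default_factory=date.today)


def review(card: Card, correct: bool, today: date) -> None:
    """Promote the card one box on a correct answer, send it back to box 0 on a miss."""
    if correct:
        card.box = min(card.box + 1, len(BOX_INTERVALS) - 1)
    else:
        card.box = 0
    card.due = today + timedelta(days=BOX_INTERVALS[card.box])


def due_cards(cards: list[Card], today: date) -> list[Card]:
    """Cards whose next review date has arrived."""
    return [c for c in cards if c.due <= today]


if __name__ == "__main__":
    start = date(2026, 1, 5)
    deck = [
        Card("HiveQL: add a partition to an existing table",
             "ALTER TABLE t ADD PARTITION (dt='2026-01-05');", due=start),
        Card("Pig: keep only rows where amount > 0",
             "b = FILTER a BY amount > 0;", due=start),
    ]
    review(deck[0], correct=True, today=start)   # moves to box 1, due in 2 days
    review(deck[1], correct=False, today=start)  # stays in box 0, due tomorrow
    print([c.prompt for c in due_cards(deck, start + timedelta(days=1))])
    # -> ['Pig: keep only rows where amount > 0']
```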

For schedules: a two-week intensive plan is for experienced developers who already ship data pipelines, a four-week balanced plan is for people with a moderate Hadoop background, and an eight-week plan is for beginners. Block out 60 to 120 minutes on weekdays, then do a longer weekend lab session where you run tasks with sample datasets, debug common errors, and do performance tuning exercises like partitioning, file sizing, and query plan checks. Use spaced repetition, active recall, and a study journal, and track weak areas weekly. Pair with a study buddy. Find a mentor at Hadoop meetups. Office hours Q&A sessions help a lot; sometimes a single good question answered live saves you days of confused Googling and trial-and-error debugging.
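For the file sizing exercises, a useful first calculation is how many output files a dataset should land as. This sketch assumes you target files around the HDFS block size (128 MiB is the `dfs.blocksize` default in Hadoop 2.x, but check your cluster) and flags files under a quarter of a block as "small"; that quarter-block threshold is an arbitrary assumption for illustration.

```python
MiB = 1024 * 1024
HDFS_DEFAULT_BLOCK = 128 * MiB  # dfs.blocksize default in Hadoop 2.x; check your cluster's value


def plan_output_files(total_bytes: int, target_file_bytes: int = HDFS_DEFAULT_BLOCK) -> int:
    """Smallest number of files such that none exceeds target_file_bytes (integer ceiling)."""
    if total_bytes <= 0:
        return 0
    return (total_bytes + target_file_bytes - 1) // target_file_bytes


def small_file_ratio(file_sizes: list[int], threshold_bytes: int = HDFS_DEFAULT_BLOCK // 4) -> float:
    """Fraction of files below threshold_bytes (the quarter-block default is an arbitrary assumption)."""
    if not file_sizes:
        return 0.0
    return sum(1 for size in file_sizes if size < threshold_bytes) / len(file_sizes)


if __name__ == "__main__":
    dataset = 10 * 1024 * MiB  # a 10 GiB table
    print("target files:", plan_output_files(dataset))  # -> target files: 80

    # Same 10 GiB written by 1,000 tasks: roughly 10 MiB per file, all of them "small".
    per_task = dataset // 1000
    print("small-file ratio:", small_file_ratio([per_task] * 1000))  # -> small-file ratio: 1.0
```

In a lab, compare the file count you actually get (for example, from listing the table's HDFS directory) against the planned number, then adjust how many tasks write the output.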

If you're choosing what to focus on, start with the exam pages: Apache-Hadoop-Developer and HDPCD.

Frequently Asked Questions About Hortonworks Certification Exams

Understanding the split between Apache-Hadoop-Developer and HDPCD

Here's the deal. The Apache-Hadoop-Developer exam zeros in on Pig and Hive development. You're cranking out scripts, fine-tuning queries, camping out in the data transformation layer where all the magic happens. HDPCD? Different animal entirely. It tests whether you can actually work through the entire Hortonworks Data Platform stack. We're talking YARN, MapReduce, the whole enchilada.

The Apache-Hadoop-Developer exam targets analysts and developers who spend their days writing Pig Latin and HiveQL. HDPCD's for engineers building end-to-end data pipelines. Career-wise, Apache-Hadoop-Developer gets you into BI-adjacent roles where you're supporting analytics teams. HDPCD opens doors to platform engineering positions where you're architecting solutions.

Making the choice between developer certifications

Ask yourself what you're doing right now. If you're already writing Hive queries daily and your job description says "data analyst" or "BI developer," the Apache-Hadoop-Developer path makes sense. You're validating skills you actually use.

But if you're managing clusters, troubleshooting YARN jobs, or your title includes "engineer," go for HDPCD. There's also the skill gap consideration. HDPCD requires deeper Linux knowledge and cluster architecture understanding, which can be intimidating if you're coming from a pure analytics background, but it's totally learnable. Your career goals matter too. Want to stay in the query optimization space? Apache-Hadoop-Developer. Want to move toward infrastructure and platform work? HDPCD every time.

Quick tangent: I've seen people try to split the difference by going for both certifications in reverse order of what made sense, thinking they'd somehow game the learning curve. Never works out.

Retake rules and what they cost you

You'll wait 14 days. That's the standard cooling-off period between attempts for most Hortonworks exams. Each retake costs the same as your initial attempt, which runs around $250 to $300 depending on the specific certification.

No discounts for retakes, unfortunately. Budget for at least one potential retry if you're being realistic. These are performance-based exams where you can't just memorize answers.
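A small planning sketch for the retake math, assuming the 14-day wait and the $250 to $300 fee described above (confirm both against the current exam policy before booking):

```python
from datetime import date, timedelta

RETAKE_WAIT_DAYS = 14  # cooling-off period described above; confirm against current policy


def earliest_retake(attempt_date: date) -> date:
    """First date a retake can be scheduled after a failed attempt."""
    return attempt_date + timedelta(days=RETAKE_WAIT_DAYS)


def exam_budget(fee: float, attempts: int = 2) -> float:
    """Total fees for planned sittings; retakes cost the same as the first attempt."""
    return fee * attempts


if __name__ == "__main__":
    first_attempt = date(2026, 3, 2)
    print("earliest retake:", earliest_retake(first_attempt))   # -> 2026-03-16
    print("budget:", exam_budget(250), "to", exam_budget(300))  # -> 500 to 600
```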

How scoring actually works

Performance-based evaluation means you're completing actual tasks in a live environment, not picking multiple-choice answers. You'll need around 70% to pass most Hortonworks certifications. They're scoring your deliverables: did the Pig script produce the correct output, does your Hive table match the specification, that kind of thing. Partial credit exists for some tasks, but don't count on it.
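To see how little slack a ~70% cut score leaves, here's a hypothetical weighted-scoring sketch. The seven equally weighted tasks and the pass/fail logic are assumptions for illustration only; this is not an official scoring model, and real task counts and weights may differ.

```python
PASS_THRESHOLD = 0.70  # "around 70%" per the text above; the real cut score may differ


def exam_score(results: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted score: each task contributes weight * fraction_correct (0.0 to 1.0)."""
    total_weight = sum(weights.values())
    earned = sum(weights[task] * results.get(task, 0.0) for task in weights)
    return earned / total_weight


if __name__ == "__main__":
    # Hypothetical: seven equally weighted tasks, two missed outright, no partial credit.
    weights = {f"task{i}": 1.0 for i in range(1, 8)}
    results = {f"task{i}": 1.0 for i in range(1, 6)}  # task6 and task7 absent -> scored 0
    score = exam_score(results, weights)
    print(f"{score:.1%}", "pass" if score >= PASS_THRESHOLD else "fail")  # -> 71.4% pass
```

Under these assumptions, two outright misses is the most you can absorb; a third drops you to about 57%.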

What you can access during testing

During the exam you get access to official documentation: the Apache project docs for Hadoop, Hive, Pig, and whatever else is relevant to your certification. No Google, no Stack Overflow, no personal notes. Just the official references.

This is why practicing with documentation is key. You need to know where to find answers quickly.

When technical problems happen

Proctor chat's your lifeline. You can communicate with the exam proctor through a chat interface if something breaks. They can restart your exam session if there's a legitimate technical failure.

The clock usually pauses during major technical issues, but minor hiccups where you just need to refresh your browser won't necessarily get you extra time.

Getting your results back

Results typically arrive within 48 hours. Sometimes faster, occasionally up to 72 hours. Your digital certificate comes via email once you've passed.

Physical certificates? Used to be a thing but everything's digital now.

The Cloudera merger reality check

Not gonna lie, this is complicated. The Hortonworks brand got absorbed into Cloudera in 2019, and the certification space shifted in ways that still confuse people today, even though it's been years. The whole ecosystem just got reshuffled. Hortonworks certifications still appear on job postings and hiring managers recognize them, but Cloudera's pushing their unified certification track. For 2026, the value depends heavily on your market. If you're in an area with established HDP installations, they're still worth pursuing.

Certification expiration timelines

Most Hortonworks certifications don't technically expire. But the technology does. A certification from 2018 signals outdated knowledge in 2026.

Recertification isn't formally required but practically necessary every two to three years.

Conclusion

Getting yourself exam-ready

Look, I've watched countless folks waste months memorizing Hadoop concepts only to absolutely crash their Hortonworks exam because they didn't anticipate what's coming. The format matters as much as actually knowing your material.

That's where practice exams come in, and honestly they're non-negotiable if you're serious about passing. You've gotta get comfortable with how tasks are phrased, the time constraints, and the irritating edge cases they love throwing at candidates. Real talk. The Apache-Hadoop-Developer exam especially tests your Pig and Hive knowledge in ways standard tutorials don't cover, which can throw you off completely if you haven't seen its testing style and scenarios. Knowing how to write a basic Pig script? Sure, that's foundational. But optimizing join operations while the clock's ticking, with limited resources and mounting pressure? That's a completely different beast.

The HDPCD certification pushes boundaries even further since you're working through the entire Hortonworks Data Platform ecosystem. You can't just improvise and pray your general big data knowledge somehow carries you across the finish line. I made that mistake once with a different vendor cert and spent the whole exam second-guessing myself on stuff I actually knew cold, just because the question format caught me off guard.

Check out practice resources at /vendor/hortonworks/ where you'll discover realistic exam simulations for both the Apache-Hadoop-Developer and HDPCD certifications. These aren't just random questions somebody slapped together. They actually mirror the exam structure and difficulty level you'll encounter.

Here's the approach I'd take in your position. Start with practice exams early, definitely not the week before your test date. Take one as a baseline to see where you actually stand versus where you think you stand (there's usually a noticeable gap, won't sugarcoat it). Then concentrate your studying on the weak areas that surface, and retake practice tests periodically to track improvement.
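Tracking weak areas doesn't need anything fancy. Here's a minimal Python sketch that takes per-topic scores (fraction correct) from a baseline and a later practice attempt, lists topics still under a threshold, and reports the change per topic. The topic names, scores, and the 0.7 threshold are illustrative assumptions.

```python
def weak_areas(latest: dict[str, float], threshold: float = 0.7) -> list[str]:
    """Topics scoring below `threshold` on the most recent attempt, worst first."""
    return sorted((topic for topic, score in latest.items() if score < threshold),
                  key=lambda topic: latest[topic])


def improvement(baseline: dict[str, float], latest: dict[str, float]) -> dict[str, float]:
    """Per-topic change since the baseline attempt (positive means better)."""
    return {topic: round(latest[topic] - baseline[topic], 2)
            for topic in baseline if topic in latest}


if __name__ == "__main__":
    baseline = {"Pig": 0.55, "Hive": 0.80, "HDFS": 0.60, "YARN": 0.40}
    latest = {"Pig": 0.75, "Hive": 0.85, "HDFS": 0.65, "YARN": 0.50}
    print(weak_areas(latest))  # -> ['YARN', 'HDFS']
    print(improvement(baseline, latest))
```

Sorting worst-first tells you where the next study block should go; the improvement dict tells you whether the last one paid off.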

The big data job market's competitive right now. Having a Hortonworks certification isn't just a nice-to-have anymore. It's becoming expected for mid-level and senior positions. But only if you pass, obviously. So give yourself every advantage available, use the practice materials, and walk into that exam confident you've prepared the right way. You've already invested time mastering the technology. Don't let inadequate exam prep become the obstacle that prevents you from demonstrating what you know.