Informatica Data Quality 10 Developer Specialist Exam Overview

Look, if you're thinking about the Informatica Data Quality 10 Developer Specialist exam, you're probably already waist-deep in data profiling projects or building cleansing rules for some massive enterprise dataset. This certification isn't one of those fluffy resume-builders. It actually validates you can build production-grade data quality solutions using Informatica's IDQ 10 toolset, which honestly matters a lot when companies are drowning in bad customer data or compliance headaches.

Why this certification matters more than you think

Here's the deal. The thing about data quality work is everyone talks about it but few people know how to implement it properly. You can have the fanciest PowerCenter workflows or the most sophisticated MDM hub, but if your source data's garbage, everything downstream falls apart. The Data Quality 10 Developer Specialist certification proves you understand how to profile data to find issues, build standardization rules that work, create matching logic that doesn't generate thousands of false positives, and monitor quality metrics that business users can understand and act on.

Companies value this because bad data costs them real money. Not theoretical money. Like millions of dollars in failed marketing campaigns, regulatory fines, or botched customer experiences. When you hold this cert, you're signaling you can prevent those disasters.

What you'll prove you can do

The certification validates specific technical skills around the Informatica Developer tool. It's thorough, I'll give them that.

You need to show proficiency in data profiling, discovering patterns, anomalies, and quality issues across datasets. You'll need to demonstrate you can build cleansing transformations, implement parsing logic for addresses and names, create standardization rules that make sense for the business context, and develop matching algorithms that identify duplicates or related records without creating chaos.

But it's not just about dragging transformations onto a canvas. You also need to understand business rule implementation, exception handling workflows (because data quality issues never stop coming), and scorecard creation for monitoring. The exam tests whether you can translate business requirements like "we need clean customer addresses" into technical implementations that work at scale. Sometimes that means fighting with stakeholders who think data quality is just running a spell-checker.

Who should take this thing

This exam targets Data Quality Developers first and foremost, but I've seen ETL Developers, Data Engineers, and even Data Stewards pursue it. Makes sense, right? If you're building data pipelines and need to ensure quality at ingestion points, this certification's worth it. Data Analysts who want to move into more technical roles find it valuable. BI Developers who are tired of explaining why their reports have inconsistent numbers sometimes grab this cert to understand upstream quality issues better.

The sweet spot? 6-12 months hands-on.

Actually working with the IDQ Developer tool, not just watching tutorials. Building profiling tasks. Creating reference tables. Debugging why your standardization rules aren't firing correctly. Troubleshooting match performance issues. If you've completed one or two real data quality projects like customer data cleansing, address standardization for a CRM migration, or duplicate detection for a master data initiative, you're probably ready.

Career impact and what doors it opens

This certification increases your marketability. Significantly. Data quality specialists with proven Informatica skills command higher salaries than general ETL developers in most markets. I've seen job postings specifically requiring or strongly preferring this certification, especially in regulated industries like financial services, healthcare, and telecommunications where data accuracy isn't optional.

You also get recognized as a subject matter expert within your organization. When data quality questions come up in architecture meetings or project planning sessions, people start looking to you for answers. That visibility matters for career progression. The thing is, it creates momentum you can't buy.

The certification also positions you well for other Informatica credentials. If you already have PowerCenter experience, adding Data Quality creates a powerful combination since many enterprises use both tools together. The Data Quality 10: Developer Specialist Exam validates skills that complement MDM implementations and broader data governance initiatives.

How this fits into the bigger data quality picture

What separates this from something like the Data Quality 9.x Developer Specialist certification is the focus on version 10 features and capabilities. The platform changed significantly between versions, with better performance, upgraded matching algorithms, and tighter integration with Informatica's cloud offerings. Big improvements.

This certification's also distinct from administrator-focused credentials. Compare it to something like the PowerCenter Data Integration 9.x Administrator Specialist and you'll see the difference. Administrators focus on installation, configuration, and operational management while developers build the data quality logic and workflows. Both roles matter, but they require different skill sets.

Real-world applications that matter

The scenarios you'll work with aren't academic. They're what you'll face Monday morning when systems break or stakeholders complain about duplicate customer records showing up in reports for the third time this quarter. Customer data cleansing projects where you need to standardize names, validate email addresses, parse phone numbers into consistent formats, and flag suspicious entries. Address standardization initiatives where you're implementing USPS or international postal rules to make addresses deliverable and consistent. Duplicate detection projects where you're building match rules that balance precision and recall without requiring manual review of 50,000 potential matches.

Data migration validation's another huge use case. When companies move from legacy systems to modern platforms, they need to ensure data quality improves rather than degrades. Your skills in profiling source data, building transformation logic, and validating results become critical.

The enterprise context nobody talks about

Organizations pursuing data governance maturity or dealing with regulatory compliance like GDPR, CCPA, or BCBS 239 need certified professionals who can implement technical controls around data quality. You're not just building mappings. You're creating audit trails, implementing validation rules that support compliance requirements, and generating quality metrics that executives present to regulators.

Reality check here.

The certification also validates your understanding of data quality dimensions: accuracy, completeness, consistency, timeliness, validity, uniqueness. These aren't just buzzwords. They're measurable attributes that your solutions need to address, and the exam tests whether you know how to implement checks and controls for each dimension.

Integration with the broader Informatica ecosystem

IDQ doesn't exist in isolation. It integrates with PowerCenter for ETL workflows, MDM for master data management, Data Director for stewardship workflows, and Axon for governance and lineage. Basically, it's part of a whole ecosystem that most enterprises rely on for keeping their data infrastructure functional. Understanding these integration points matters because enterprise implementations rarely use just one Informatica product. If you're also familiar with PowerCenter Data Integration Developer concepts, you'll find natural synergies in how these tools work together.

Professional development and community access

Passing the exam gets you into the Informatica certified professional community. You gain access to forums, user groups, and networking opportunities with other practitioners. The certification badge works on LinkedIn and other professional profiles, which matters more than you might think when recruiters are filtering candidates. It's become part of the screening process whether we like it or not.

Not gonna lie, the certification also shows commitment to your craft. Data quality work can be tedious and underappreciated, but holding this credential shows you're serious about doing it right and staying current with best practices and platform capabilities.

Exam Details: Format, Cost, and Passing Score

What you're walking into (format basics)

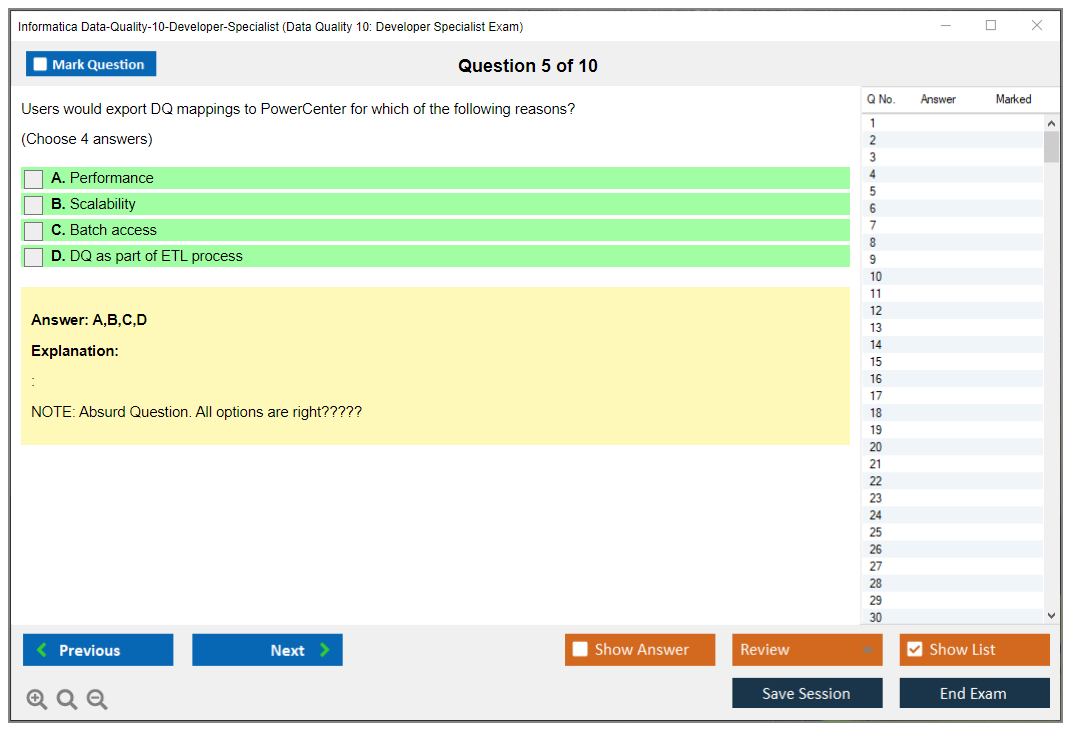

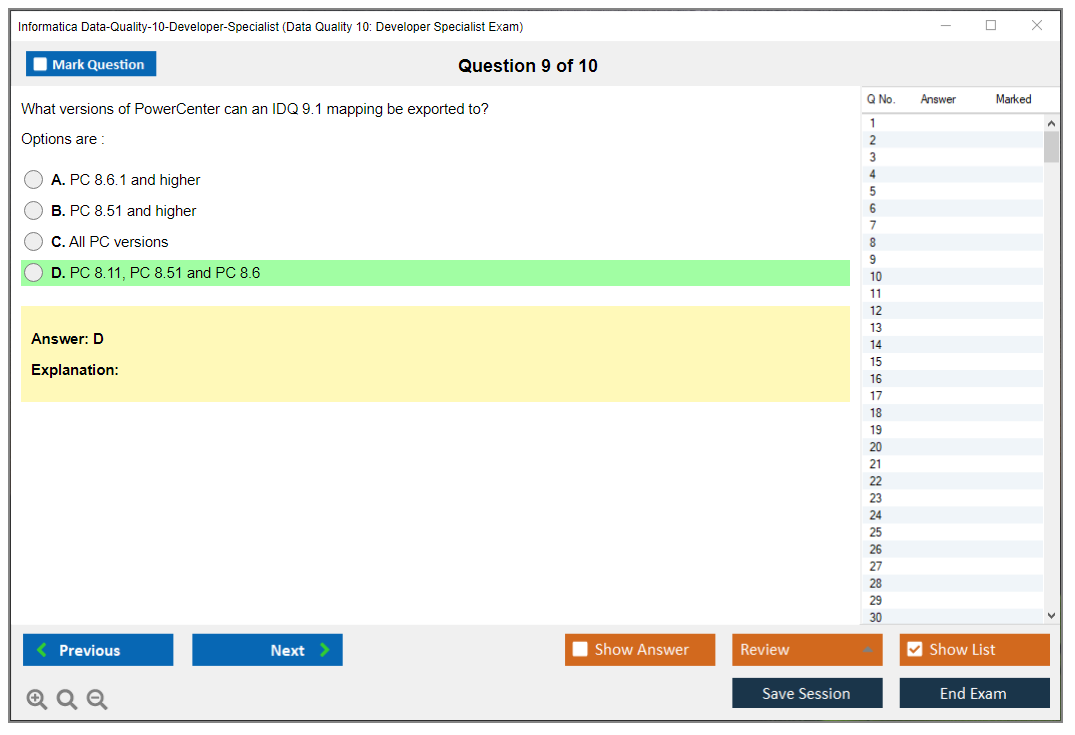

So here's the deal. The Informatica Data Quality 10 Developer Specialist exam isn't complicated on paper. Sixty questions, ninety minutes, mostly multiple choice plus these scenario things where you're reading a mini story about some data problem and figuring out what you'd actually do in IDQ. No breaks, and honestly, that last part? It matters way more than you'd think going in.

Sixty questions is pretty typical for this exam code and designation, usually listed as Data-Quality-10-Developer-Specialist (sometimes you'll see a very similar Informatica naming pattern, but it's basically the same idea). The clock's real. Ninety minutes sounds comfy until you hit a scenario question that name-drops IDQ mappings, rules, and scorecards, and suddenly you've been staring at the same four answer choices for two minutes straight because two of them are both "technically plausible" and your brain's just stuck there.

Short exam. Fast pace.

Read carefully, I mean it.

Question types you'll see

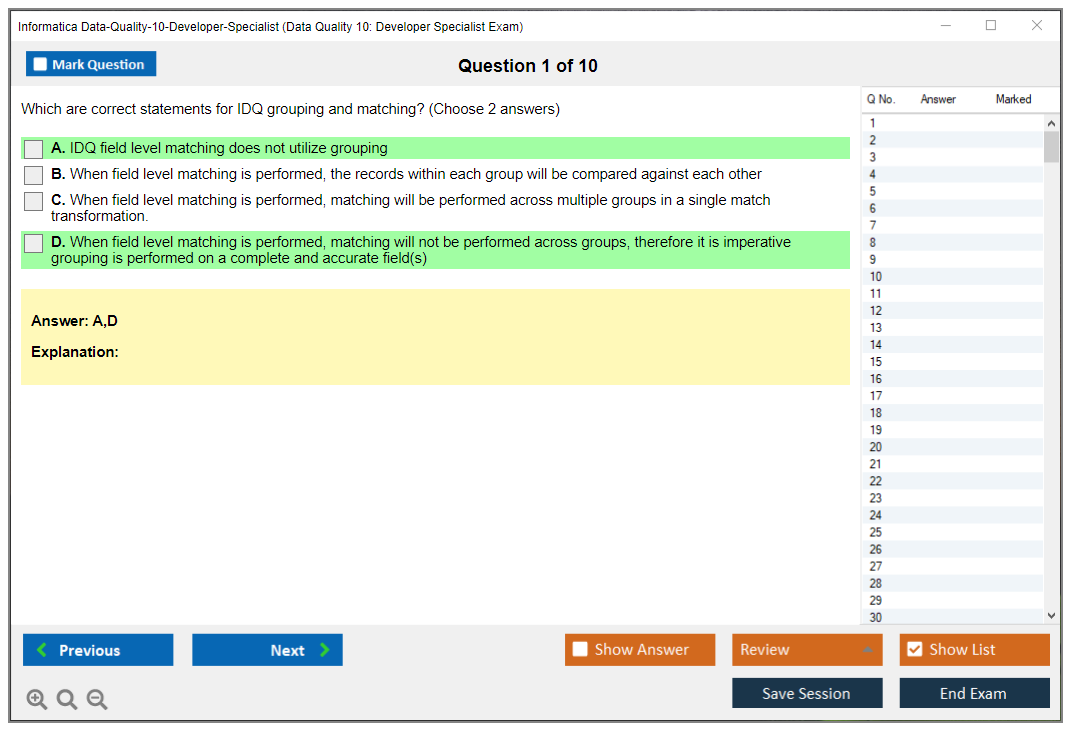

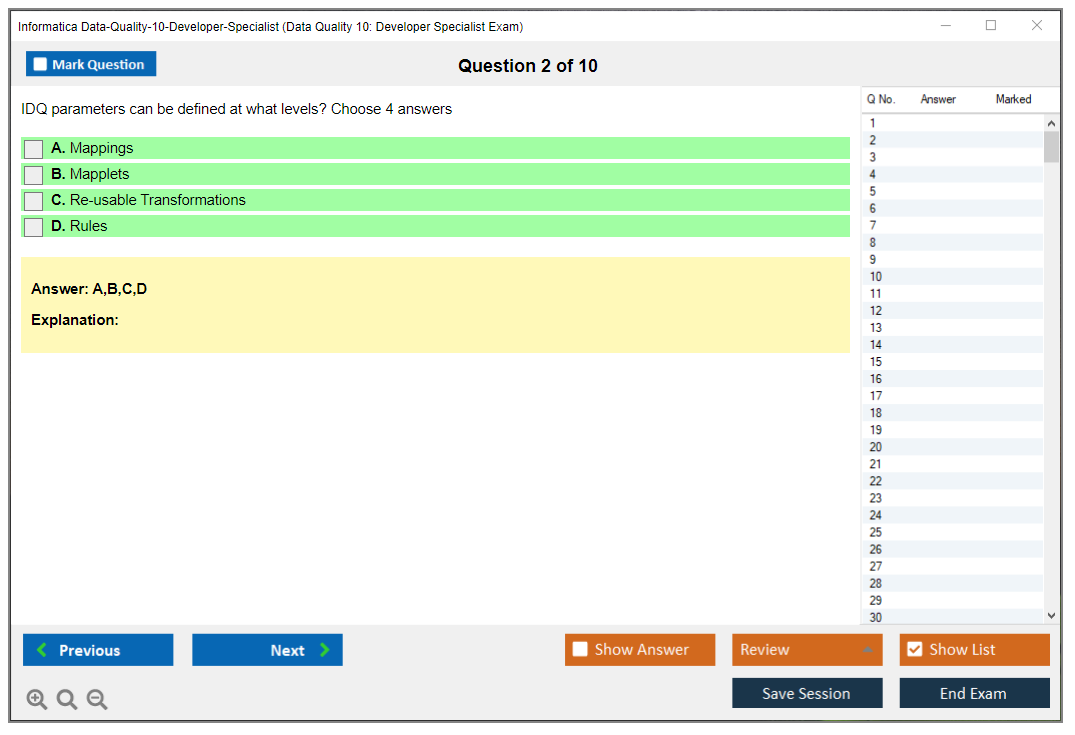

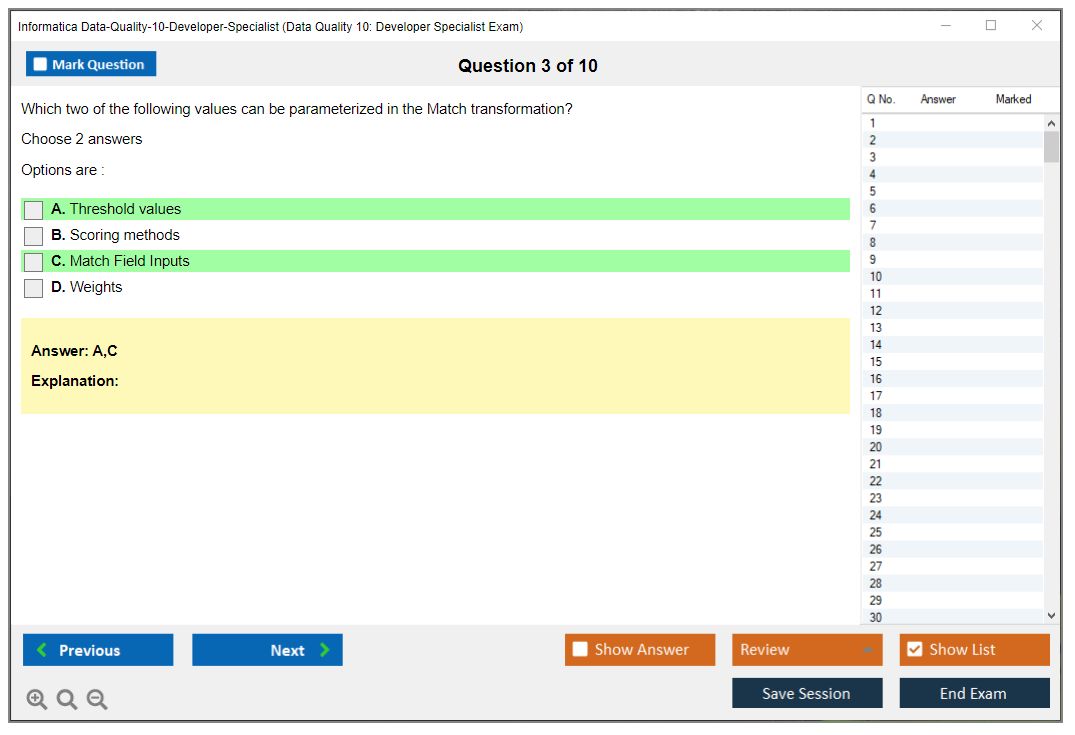

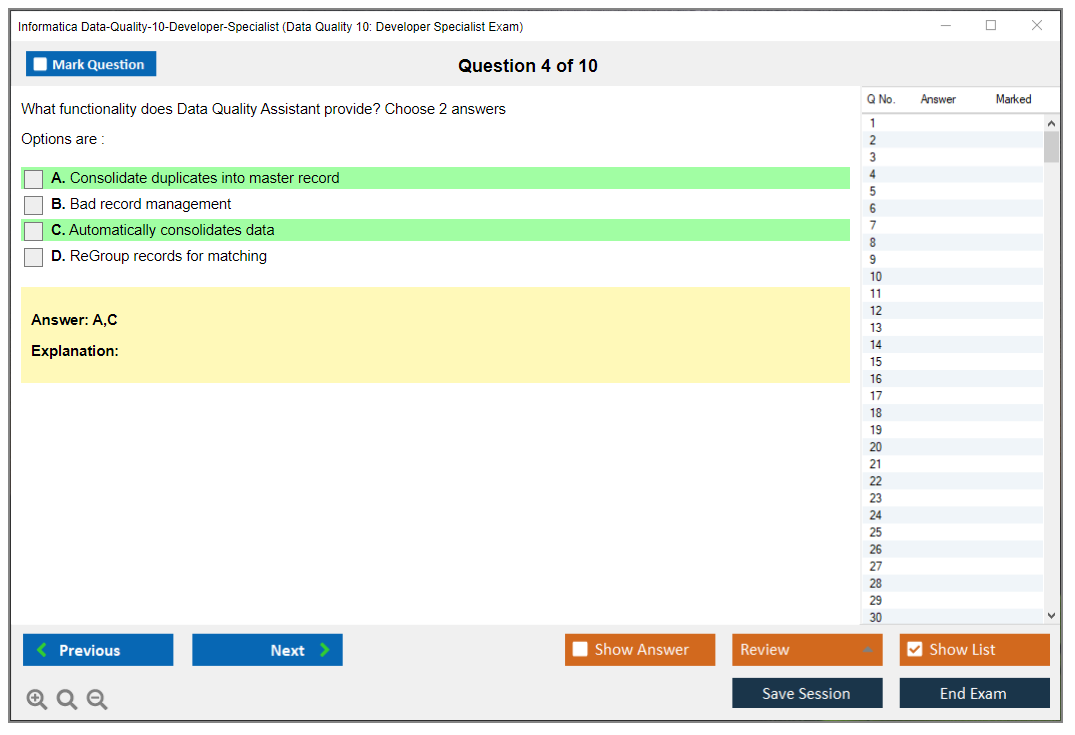

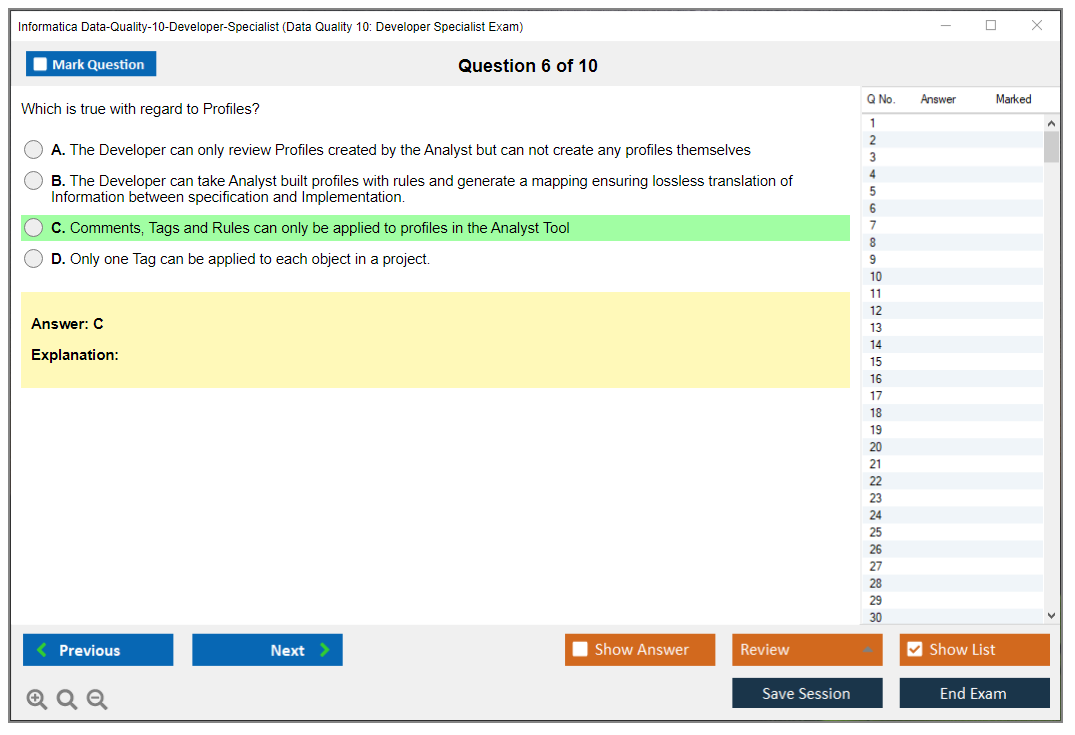

Look, Informatica loves multiple choice for certifications, but it's not only "what does this button do" stuff. The exam mixes a few different approaches:

- single-answer multiple choice (pick one)

- multiple-answer multiple choice (pick two or three, and yes, you can miss the whole thing if you miss one selection)

- scenario-based problem-solving, which are mini use cases around data profiling and cleansing in Informatica, validation and exception handling, deployment choices, that kind of thing

I'll explain the scenario ones because that's where people get tripped up. You'll get a situation like "incoming customer records have inconsistent address formats and duplicates across sources" and then you're choosing a best-fit approach using the Informatica Developer tool (IDQ), maybe involving parsing or standardization and matching, maybe deciding where exception records go, maybe which rule type or transformation pattern fits. It's not asking you to write anything. It's asking if you've actually built this stuff or only read the Informatica Data Quality 10 study guide once and hoped for the best.

Multi-answer questions?

They're the other landmine. They look easy but they're not, and if you haven't practiced reading for "select all that apply" cues, go find an Informatica Data Quality Developer Specialist practice test and train your brain to slow down on those because they'll wreck your score fast.

Timing rules (and the no-break reality)

You get 90 minutes total. No breaks permitted during that window, not "no scheduled breaks" like some exams where you can technically stand up. Once you start, you're in it, and the proctoring rules (especially online) treat leaving the camera as a problem.

Three short tips.

Eat first. Use the restroom first. Silence everything.

Also, pace yourself with intention. Ninety minutes for 60 questions is about 1.5 minutes per question, but scenario-based items can run longer, so you want a strategy like "first pass in 45 to 55 minutes, second pass for flagged questions." If you do slow thinking on every item, you'll end up speed-clicking the last ten and then blaming the exam for being "tricky" when really it was just time management.

My cousin took this exam twice, actually. First time he spent like eight minutes on a single scenario trying to logic through every edge case and ended up guessing on the last fifteen questions. Second time he moved faster, flagged the hard ones, came back later. Passed easily. Same person, same knowledge, just different clock strategy.
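If you want to sanity-check the pacing math, here's a minimal Python sketch. The 60-question and 90-minute figures come from the format described above; the 50-minute first pass is just one hypothetical pick from the 45-to-55 range, not an official recommendation.

```python
# Pacing arithmetic for a 60-question, 90-minute exam (figures from the text above).
TOTAL_MINUTES = 90
QUESTIONS = 60


def seconds_per_question(minutes: float, questions: int) -> float:
    """Average time budget per question, in seconds."""
    return minutes * 60 / questions


average = seconds_per_question(TOTAL_MINUTES, QUESTIONS)

# Hypothetical two-pass plan: finish a first pass in 50 minutes,
# then spend whatever is left on flagged questions.
FIRST_PASS_MINUTES = 50
first_pass_pace = seconds_per_question(FIRST_PASS_MINUTES, QUESTIONS)
review_minutes = TOTAL_MINUTES - FIRST_PASS_MINUTES

print(f"Average budget: {average:.0f} s per question")
print(f"First-pass pace: {first_pass_pace:.0f} s per question")
print(f"Left for flagged questions: {review_minutes} min")
```

The takeaway is that a two-pass plan only works if your first pass runs well under the 90-second average, which is exactly why long stalls on one scenario hurt so much.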

Where you can take it (test center vs online)

Delivery's through Pearson VUE. You can take it at a Pearson VUE test center or as an online proctored examination. Both are valid, both end with a pass or fail result, and both have their weird quirks.

Test centers have boring advantages that are worth money if you're anxious about tech. Controlled environment, fewer technical issues, and you usually get an immediate score report right after you finish. The room's set up for exams, the computer's locked down correctly, and the proctor's seen every weird situation already so they're not gonna freak out if you need to clarify something.

Online proctoring is convenient, but it's picky. Requirements usually include a webcam, microphone, stable internet connection, a clean workspace, and a government-issued ID, and not "ID nearby," like you literally show it to the camera. You also do a system check, a room scan, identity verification, and then continuous monitoring while you test, and if your camera drops or you keep looking off-screen because you're thinking, you can get warned. Not gonna lie, some people find that more stressful than the exam content itself.

Check-in timing's different too. Plan to arrive 15 minutes early for a test center appointment, but for online proctoring, budget 30 minutes because check-in can be slow, and if you're late you can get marked a no-show, which usually means losing the fee.

Cost, regional pricing, and how people actually pay

The Informatica Data Quality 10 exam cost is usually in the $150 to $200 USD range, depending on region and currency. North America pricing often sits in one band, while EMEA, APAC, and LATAM can have different fee structures. Sometimes because of taxes, sometimes because of local currency conversion, sometimes because Pearson VUE pricing tables are just Pearson VUE pricing tables, I don't know.

Payment methods tend to include credit cards and debit cards for individuals, plus purchase orders for corporate bookings. If your company's paying, ask if they want you to use a PO because that changes how you schedule and how fast it gets approved.

Voucher and discount options pop up more often than people realize. Informatica training bundles sometimes include an exam voucher at a reduced total cost. Partner programs can issue discounts, and corporate volume discounts are a thing if a team's getting certified at once. Promos during events, training subscriptions, occasional regional campaigns, all that stuff exists.

Exam vouchers typically expire; a common pattern is 12 months of validity from the purchase date. Don't buy one "to motivate yourself" unless you actually like deadline pressure, because that can backfire.

Retakes: rules, waiting period, and what it'll cost you

If you fail, retakes aren't discounted by default. Each retake attempt typically requires paying the full exam fee again, and there's also usually a waiting period of about 14 days after a failed attempt before you can reschedule.

Unlimited attempts are generally allowed, but every attempt costs money and you still have to respect the waiting period. I mean, nobody's stopping you from taking it six times, but your wallet might.

Passing score and how scoring works

The Data Quality 10 Developer Specialist passing score is typically 70%, which works out to 42 out of 60 correct if the exam's in that standard 60-question form. Pass or fail only. No grade levels, no "with honors," you either cleared the line or you didn't.

Scoring methodology's usually simple: correct answers only, no partial credit, and no penalty for wrong answers. That means if you're stuck, you should guess rather than leave it blank because blank's still wrong. Multi-answer questions are still all-or-nothing in most setups, so if you pick three options and only two are right, you're not getting "2/3 credit." You're getting zero for that item which feels harsh but that's how it works.
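To make the all-or-nothing rule concrete, here's a small Python sketch. It isn't Pearson VUE's scoring engine, just an illustration of the behavior described above; the question IDs, answer keys, and function names are all made up.

```python
# Sketch of all-or-nothing scoring as described above (not the real scoring engine).
PASS_PERCENT = 70
TOTAL_QUESTIONS = 60


def pass_mark(total: int, percent: int) -> int:
    """Smallest number of correct answers that reaches the pass percentage."""
    return (total * percent + 99) // 100  # integer ceiling of total * percent / 100


def score_item(selected: set[str], correct: set[str]) -> int:
    """One point only when the selection matches the key exactly; blank scores zero."""
    return 1 if selected == correct else 0


# Hypothetical three-question excerpt: q2 is a multi-answer item.
answer_key = {"q1": {"B"}, "q2": {"A", "C", "D"}, "q3": {"A"}}
responses = {"q1": {"B"}, "q2": {"A", "C"}, "q3": set()}

points = sum(score_item(responses[q], key) for q, key in answer_key.items())
print(points, "of", len(answer_key))
print("Pass mark:", pass_mark(TOTAL_QUESTIONS, PASS_PERCENT))
```

Notice that the partially correct q2 and the blank q3 score the same, which is why guessing beats leaving an item empty.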

Also worth knowing: public-facing credentials generally don't disclose your numeric score. Your badge or certificate says you passed.

That's it. Internally, you'll see a score report with domain breakdown but that's not on your LinkedIn badge or anything.

Score reporting and what you receive afterward

Score reporting depends on delivery method. Test centers often give immediate preliminary results right after you end the exam. Like, you click "end exam" and boom, pass or fail shows up. Online proctored exams can take 24 to 48 hours for results to finalize because the session may go through additional review.

Then you get the official stuff. Expect a digital certificate and a detailed score report via email within about 5 to 7 business days. The score report breakdown usually shows performance by exam domain or objective area, which is helpful if you're mapping your weak spots back to the Informatica Data Quality 10 exam objectives like profiling, rules development, exception handling, scorecards, and deployment considerations.

Scheduling, rescheduling, and the rules that bite people

Scheduling's the normal Pearson VUE flow: create an account, register for the exam, select a date, time, and location (or online), then pay or apply a voucher. You'll get confirmation emails and reminders with the appointment details and usually some prep tips that are moderately useful.

Cancellation and rescheduling are typically allowed up to 24 to 48 hours before your appointment. Inside that restricted window, you may forfeit part or all of the fee. The no-show policy's harsher: if you don't appear, you usually lose the full exam fee, which sucks but it's standard.

Corporate and group booking options exist if an organization's certifying a batch of developers. That's where you can sometimes get volume discounts and dedicated scheduling support, which is honestly nice when you're trying to get ten people through the same exam month without scheduling chaos.

Rules on ID, accommodations, and what you can't bring

Identification requirements are strict. Bring a government-issued photo ID with signature, like a passport or driver's license (national ID works in many places). The name on your ID must exactly match the name on your exam registration, and yes, middle names and hyphens can matter which seems petty but they will turn you away.

Special accommodations are available for candidates with disabilities or special needs, but you request them in advance through Pearson VUE's process. Don't wait until the week of because approval takes time.

Prohibited items: phones, watches, notes, bags, calculators, reference materials. At a test center, they'll store your stuff in a locker. Online, you're expected to have a clear desk and they're watching. Scratch paper and calculator are handled differently: online exams often provide digital scratch space and a digital calculator, while test centers may provide physical scratch paper or an erasable board depending on site policy.

You also sign a non-disclosure agreement (NDA) before the exam begins, and it bans sharing specific exam content. So yeah, talk about concepts like "data quality validation and exception handling" all day, but don't post "question 12 asked X and the answer was Y" because that violates the NDA and can get your cert revoked.

One more thing: beta exams sometimes appear when Informatica updates versions, and they can be cheaper. If you see a beta option for the Informatica IDQ 10 Developer Specialist track, double-check the exam version and platform notes so you're registering for the correct 10.x line and not accidentally testing on new objectives you didn't study. A beta may also carry a separate exam code entirely, so check that before you pay.

Informatica Data Quality 10 Exam Objectives and Core Domains

I've been working with Informatica products for years now, and the Data Quality 10 Developer Specialist exam is one of those certifications that actually matters in the real world. Not gonna lie, when I first looked at the exam objectives Informatica published, I felt overwhelmed by how much ground they cover. Though maybe that was partly me psyching myself out before really digging in.

What Informatica officially expects you to know

The official exam objectives serve as your authoritative study blueprint. Really. Don't skip reading through them multiple times because they're literally telling you what's on the test. Informatica breaks down the Informatica Data Quality 10 Developer Specialist exam into several core domains, each weighted differently. Understanding those weights is key for how you allocate study time.

Look, if one domain represents 30% of the exam and another represents 10%, you need to spend three times more effort on that heavier domain. Seems obvious but I've seen people spend equal time on everything and then struggle with the high-weight sections. Totally avoidable mistake. The domain weighting isn't just academic. It directly impacts your score.
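Here's that proportional split as a quick Python sketch. The domain names and percentages below are placeholders I made up for illustration; swap in the real weights from the official objectives before you trust the numbers.

```python
# Hypothetical domain weights; substitute the percentages from the official objectives.
weights = {
    "profiling": 30,
    "rule development": 25,
    "matching and consolidation": 20,
    "exception handling and scorecards": 15,
    "deployment": 10,
}


def allocate_hours(total_hours: float, domain_weights: dict[str, float]) -> dict[str, float]:
    """Split a study budget across domains in proportion to their exam weight."""
    total_weight = sum(domain_weights.values())
    return {d: total_hours * w / total_weight for d, w in domain_weights.items()}


plan = allocate_hours(40, weights)
for domain, hours in plan.items():
    print(f"{domain}: {hours:.1f} h")
```

With a 40-hour budget, the 30% domain gets three times the hours of the 10% domain, which is the whole point.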

Platform architecture and how everything connects

You need to understand the Informatica Data Quality 10 platform architecture inside and out. This means knowing how the Informatica domain works, what application services do, and how the Data Integration Service ties everything together. The thing is, these components interact in ways that aren't always intuitive until you've actually built a few solutions yourself. The IDQ Developer tool interface is your primary workspace, so knowing workspace organization and project structure matters a lot.

One thing that trips people up is understanding the relationship between the Developer tool, Analyst tool, and Administrator tool. They're not interchangeable. Each has specific purposes and the exam will test whether you know which tool does what. The Developer tool is where you build mappings, mapplets, rules, scorecards, and workflows. These are your core data quality components.

Reference data management comes up constantly in real DQ projects. You'll work with lookup tables extensively, and the exam covers how to integrate them into data quality processes. Metadata management through the Model Repository Service is another area that sounds dry but is actually critical for understanding how IDQ tracks and manages all your objects.

Integration points you can't ignore

IDQ doesn't exist in a vacuum. Integration with PowerCenter, MDM, and other Informatica products is a significant exam topic. Most enterprise environments run multiple Informatica tools, so knowing how they work together is practical knowledge, not just exam trivia.

The data quality dimensions (accuracy, completeness, consistency, validity, timeliness, uniqueness) form the conceptual foundation. You'll need to understand data quality metrics and KPIs: how to measure them, and how to communicate them to stakeholders who may not have technical backgrounds but need to make decisions based on your findings. Business glossary integration and business term associations show up in scenarios where you're mapping technical data elements to business concepts.

Version control and project management within the Developer tool is tested more heavily than you'd think. Repository concepts like folders, projects, labels, object dependencies are foundational. This stuff seems basic until you're troubleshooting a deployment issue at 2 AM. Deployment architecture considerations, how you move objects from development to test to production, that's real-world stuff that absolutely appears on the exam.

I once spent a whole weekend rolling back a botched deployment because I didn't properly understand object dependencies. Learned that lesson the hard way, and the exam definitely tests your knowledge there.

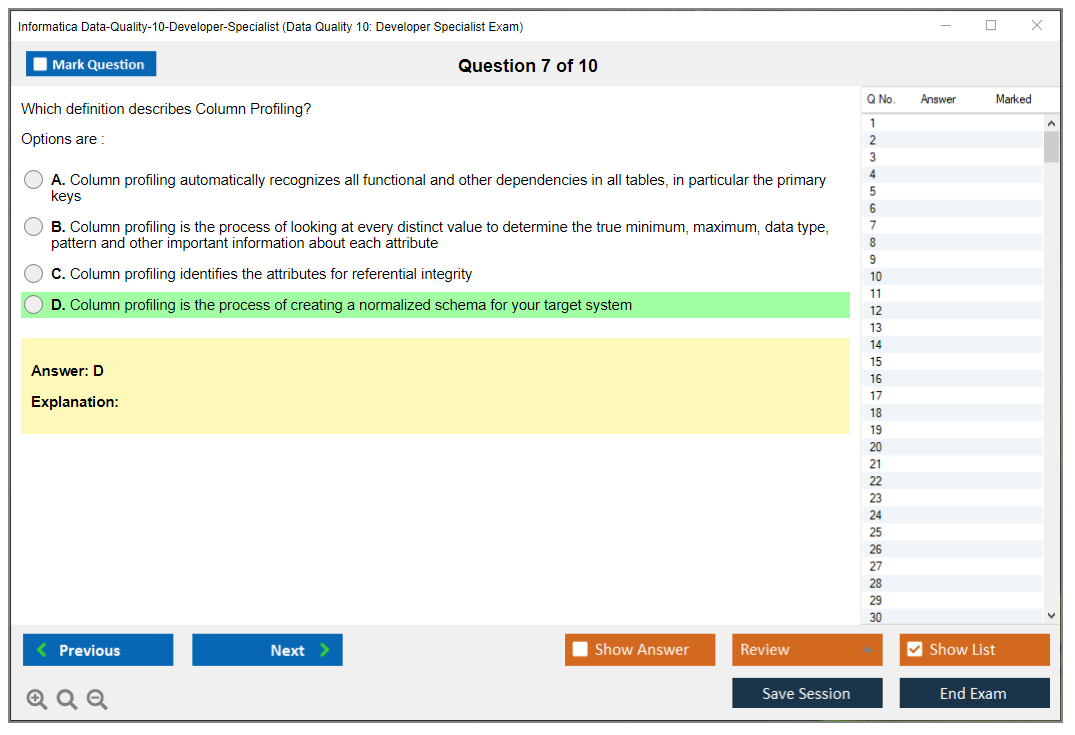

Profiling is huge on this exam

Seriously huge.

Profile creation and configuration for source data analysis takes up a substantial portion of the exam objectives. Column profiling covers data type inference, pattern detection, null analysis, value distribution. You need to interpret profiling statistics: min/max values, averages, standard deviation, distinct counts. The kind of statistical analysis that reveals hidden data quality issues you wouldn't spot just by looking at sample records. Pattern frequency analysis helps you identify data formats and anomalies, which is necessary for understanding data before you cleanse it.
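IDQ computes these statistics for you in the profile results, so you never write this by hand. Still, it helps to see what the numbers mean. The Python sketch below is only an illustration; `profile_column` and `char_pattern` are hypothetical names, and the 9/X pattern notation is one common convention, not necessarily how IDQ labels patterns.

```python
import re
from collections import Counter


def char_pattern(value: str) -> str:
    """Map digits to 9 and letters to X so values sharing a format group together."""
    return re.sub(r"[A-Za-z]", "X", re.sub(r"\d", "9", value))


def profile_column(values: list) -> dict:
    """Basic column profile: null count, distinct count, min/max, pattern frequency."""
    non_null = [v for v in values if v not in (None, "")]
    return {
        "rows": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
        "min": min(non_null) if non_null else None,
        "max": max(non_null) if non_null else None,
        "patterns": Counter(char_pattern(str(v)) for v in non_null),
    }


# Made-up phone column with one format outlier and two missing values.
phones = ["555-0101", "555-0102", None, "5550103", "555-0101", ""]
stats = profile_column(phones)
print(stats["nulls"], stats["distinct"])
print(stats["patterns"].most_common())
```

The pattern counts are what surface the outlier: one value is missing its hyphen, which you'd likely never spot by eyeballing a sample.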

Functional dependency analysis between columns and tables, primary key and foreign key discovery through profiling, duplicate detection during the profiling phase are all tested. The profiling drill-down capabilities let you do detailed value analysis, and you need to know when and how to use them.
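If functional dependency sounds abstract, the idea is simply "does column A always determine column B?" Here's a rough Python sketch of that check. The function name and sample rows are invented, and IDQ's own dependency discovery is more sophisticated than this.

```python
from collections import defaultdict


def dependency_violations(rows, determinant, dependent):
    """Return determinant values that map to more than one dependent value."""
    seen = defaultdict(set)
    for row in rows:
        seen[row[determinant]].add(row[dependent])
    return {key: vals for key, vals in seen.items() if len(vals) > 1}


# Made-up rows: zip should determine city, but one row has a typo.
rows = [
    {"zip": "10001", "city": "New York"},
    {"zip": "10001", "city": "New York"},
    {"zip": "60601", "city": "Chicago"},
    {"zip": "60601", "city": "Chicgo"},
]
violations = dependency_violations(rows, "zip", "city")
print(violations)
```

An empty result would mean the dependency holds for every row; anything else is a list of places to go look for quality problems.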

Profiling warehouses store your profiling results for later reference. Enterprise discovery for multi-table and cross-system profiling is more advanced but definitely appears in exam scenarios. Performance optimization for large datasets matters because in production you can't just profile everything all the time. Sampling strategies like random, stratified, and systematic sampling become important.
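The three sampling strategies are easier to keep straight once you've seen them side by side. This is a plain Python sketch with made-up data, not how you configure sampling in the Developer tool; all function names are hypothetical.

```python
import random
from collections import defaultdict


def random_sample(rows, n, seed=0):
    """Simple random sample of n rows (seeded so the result is repeatable)."""
    return random.Random(seed).sample(rows, n)


def systematic_sample(rows, step, start=0):
    """Every step-th row, beginning at index start."""
    return rows[start::step]


def stratified_sample(rows, key, fraction, seed=0):
    """Sample the same fraction from each group so small groups stay represented."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row)
    sample = []
    for members in groups.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Made-up dataset: 10 NZ rows followed by 90 US rows.
rows = [{"id": i, "country": "NZ" if i < 10 else "US"} for i in range(100)]

print(len(random_sample(rows, 10)))
print([r["id"] for r in systematic_sample(rows, 10)])
strat = stratified_sample(rows, "country", 0.1)
print(sum(r["country"] == "NZ" for r in strat), "NZ rows of", len(strat))
```

Stratified sampling is the one that guarantees the small NZ group shows up; a purely random 10% sample could miss it entirely.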

Profiling scorecards track data quality metrics over time. You need to understand how profiling results integrate with data quality rules and how to use profiling filters and constraints to focus your analysis on specific data subsets.

Rule development is where the rubber meets the road

Rule specification creation using the Developer tool is a major exam domain. You'll encounter different rule logic types. Validation rules. Standardization rules. Matching rules. Consolidation rules. The expression language for rule development uses Informatica transformation language, which has its own syntax and functions you need to memorize, or at least understand well enough that you can work through syntax during the exam.

The built-in data quality transformations (Parser, Standardizer, Match, Consolidation) are heavily tested. Custom transformation development using Java or expression language is more advanced but shows up in complex scenarios. Creating reusable rule logic through mapplets and rule specifications is considered a best practice and the exam rewards you for knowing this approach.

Rule parameters and variables give you flexible rule implementation. Validation rules cover format validation, range checks, cross-field validation, referential integrity checks. Translating business rules from business requirements to technical implementation is a skill the exam tests through scenario-based questions. This mirrors what you do every day on real projects anyway.
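In IDQ you'd build these checks as rule specifications or expressions, but the four validation categories are easy to picture in ordinary code. Here's a hedged Python sketch; the field names, the loose email regex, and the 0-to-120 age range are all assumptions for illustration.

```python
import re
from datetime import date

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately loose format check


def validate(record: dict, valid_countries: set[str]) -> list[str]:
    """Return the names of the rules the record violates (empty list means clean)."""
    failures = []
    if not EMAIL_RE.match(record.get("email") or ""):
        failures.append("format:email")  # format validation
    if not 0 <= record.get("age", -1) <= 120:
        failures.append("range:age")  # range check
    if record["end_date"] < record["start_date"]:
        failures.append("cross_field:end_before_start")  # cross-field validation
    if record.get("country") not in valid_countries:
        failures.append("reference:country")  # referential integrity check
    return failures


good = {"email": "ann@example.com", "age": 34, "country": "US",
        "start_date": date(2024, 1, 1), "end_date": date(2024, 6, 30)}
bad = {"email": "ann(at)example.com", "age": 140, "country": "XX",
       "start_date": date(2024, 6, 30), "end_date": date(2024, 1, 1)}

print(validate(good, {"US", "CA"}))
print(validate(bad, {"US", "CA"}))
```

Returning rule names rather than a bare true/false is a deliberate choice here, since downstream exception handling usually needs to know which rule failed.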

Rule testing and debugging techniques within the Developer tool, rule version management, impact analysis all appear. Complex rule logic involving conditional processing, nested rules, multi-step validation requires you to really understand how transformations chain together. Error handling within rules, exception routing, error codes, error descriptions, is critical for production-quality solutions.

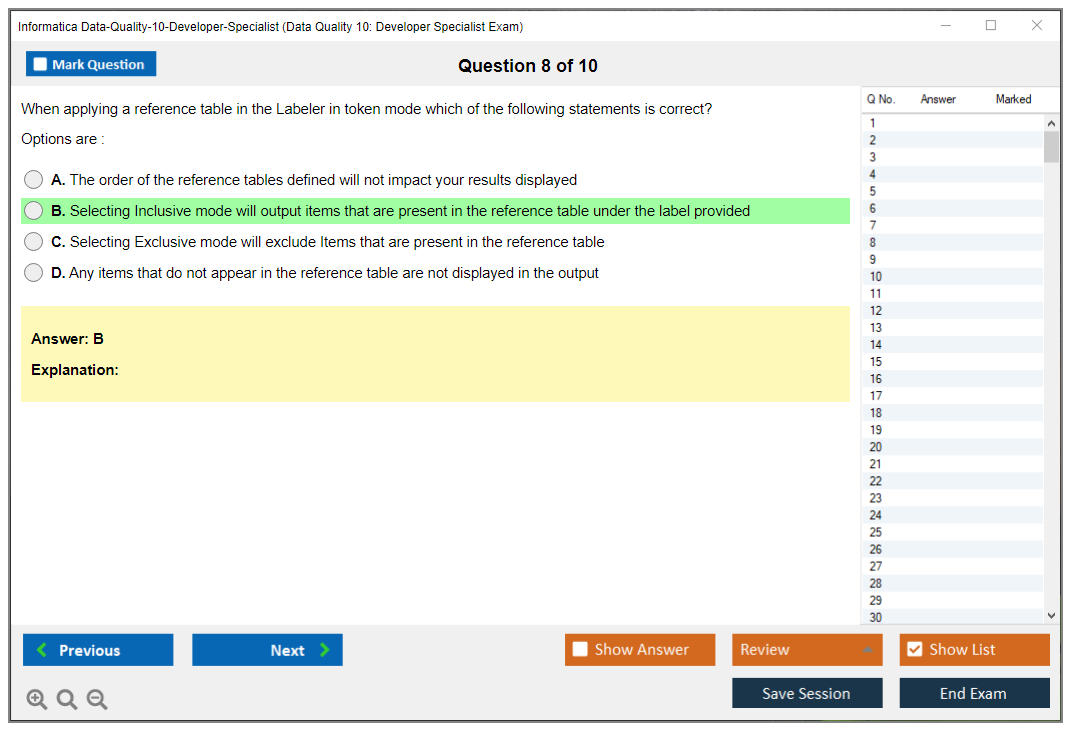

Parsing and standardization transformations

Parser transformation configuration for name and address parsing uses reference tables with name patterns, business patterns, address formats. Country-specific parsing rules and international address handling get tested, especially if you're working with global datasets. Which is increasingly common these days. You need to know parser output ports and how to map parsed components to downstream transformations.

Standardizer transformation setup uses standardization reference data like postal databases, geocoding tables, business directories. Address validation and certification standards (CASS for USPS, SERP for Canada Post, AMAS for Australia Post) are specifics you should know. I initially found the acronyms confusing, but they're quite logical once you understand what each certification does. Name standardization covers personal names, business names, salutations, suffixes.
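To picture what "parse, then standardize against reference data" means, here's a toy Python sketch. It's nowhere near what the Parser and Standardizer transformations do with real postal reference data; the tiny suffix table and both function names are invented, and the parse assumes a US-style address with the house number first.

```python
# Hypothetical reference table: street-suffix variant -> standard form.
STREET_SUFFIXES = {
    "ST": "STREET", "ST.": "STREET",
    "AVE": "AVENUE", "AVE.": "AVENUE",
    "RD": "ROAD", "RD.": "ROAD",
}


def parse_address(line: str) -> dict:
    """Split an address line into a house number and street tokens."""
    tokens = line.split()
    if tokens and tokens[0].isdigit():
        return {"house_number": tokens[0], "street": tokens[1:]}
    return {"house_number": None, "street": tokens}


def standardize_street(tokens: list[str], reference: dict[str, str]) -> str:
    """Uppercase each token and replace known variants with the standard form."""
    return " ".join(reference.get(t.upper(), t.upper()) for t in tokens)


parsed = parse_address("12 main st.")
street = standardize_street(parsed["street"], STREET_SUFFIXES)
print(parsed["house_number"], street)
```

A token-by-token lookup like this breaks quickly on real data ("St" can also mean "Saint"), which is a good reminder of why production work leans on curated reference tables.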

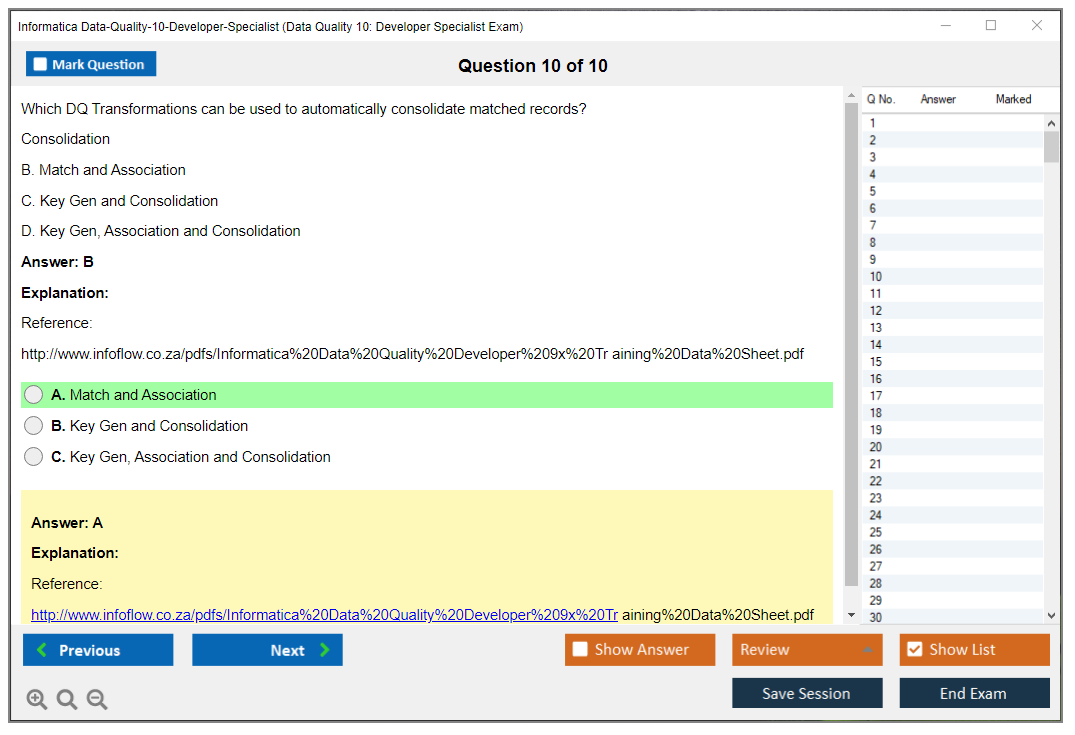

Matching and consolidation are exam-critical

Match transformation configuration for duplicate detection is a massive topic. Match strategies include exact match, fuzzy match, phonetic match. Each has its place depending on your data characteristics and business requirements. You define match rules and match purposes, then configure match scoring and thresholds to determine what constitutes a duplicate.
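Here's a rough feel for how exact, fuzzy, and phonetic strategies differ, in Python. To be clear about the stand-ins: `difflib.SequenceMatcher` and a textbook Soundex are swapped in for illustration and are not the algorithms IDQ's Match transformation uses, and the 0.85 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher

_CODES = {}
for _letters, _digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                         ("L", "4"), ("MN", "5"), ("R", "6")):
    for _ch in _letters:
        _CODES[_ch] = _digit


def soundex(name: str) -> str:
    """Classic four-character Soundex code (first letter plus three digits)."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    result = letters[0]
    prev = _CODES.get(letters[0], "")
    for ch in letters[1:]:
        code = _CODES.get(ch, "")
        if code and code != prev:
            result += code
        if ch not in "HW":  # H and W do not separate repeated codes
            prev = code
    return (result + "000")[:4]


def classify(a: str, b: str, threshold: float = 0.85) -> str:
    """Label a pair by the first strategy that accepts it."""
    a_n, b_n = a.strip().lower(), b.strip().lower()
    if a_n == b_n:
        return "exact"
    if SequenceMatcher(None, a_n, b_n).ratio() >= threshold:
        return "fuzzy"
    if soundex(a_n) == soundex(b_n):
        return "phonetic"
    return "no match"


for pair in [("Smith", "smith "), ("Jon Smith", "John Smith"),
             ("Robert", "Rupert"), ("Smith", "Jones")]:
    print(pair, "->", classify(*pair))
```

Moving the threshold up or down is the precision-versus-recall trade-off in miniature: lower it and you catch more true duplicates, along with more false positives to review.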

Consolidation transformation creates golden records from duplicates. Survivorship rules select the best values during consolidation, like choosing the most recent address or the most complete phone number. Match and merge workflows combine everything for thorough duplicate management.
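Survivorship is easier to reason about with a tiny example. The Python sketch below applies the two rules mentioned above (most recent address, most complete phone) to a made-up cluster of duplicates. The field names are hypothetical, and "most digits" is just a crude stand-in for completeness.

```python
from datetime import date


def most_recent(records, field, date_field="updated"):
    """Value of field from the latest record that actually has one."""
    candidates = [r for r in records if r.get(field)]
    if not candidates:
        return None
    return max(candidates, key=lambda r: r[date_field])[field]


def most_complete(records, field):
    """Prefer the value with the most digits, a crude completeness measure for phones."""
    candidates = [r[field] for r in records if r.get(field)]
    if not candidates:
        return None
    return max(candidates, key=lambda v: sum(c.isdigit() for c in v))


# Made-up cluster of three records already identified as the same customer.
cluster = [
    {"address": "12 Main St", "phone": "555-0101", "updated": date(2022, 3, 1)},
    {"address": "98 Oak Ave", "phone": "+1 212 555 0101", "updated": date(2024, 7, 15)},
    {"address": None, "phone": None, "updated": date(2025, 1, 2)},
]

golden = {
    "address": most_recent(cluster, "address"),
    "phone": most_complete(cluster, "phone"),
}
print(golden)
```

Note that the newest record contributes nothing because its fields are empty, so a naive "latest record wins" rule would have produced a worse golden record.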

Identity resolution workflows for customer data integration are common real-world scenarios. Household and relationship matching for hierarchical data structures adds complexity. Real customer data is messy and hierarchical relationships aren't always cleanly defined in source systems. Performance tuning for match and cleansing operations on large datasets is required knowledge because matching can be resource-intensive.

Exception handling and monitoring

Exception handling architecture in data quality workflows determines how you route validation failures. Exception routing based on rule violations. Exception tables and schema design for capturing rejected records. Error codes and error descriptions. All tested.
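Here's what that routing looks like in miniature, as a Python sketch. The rule list, error codes, and exception row layout are all invented for illustration; in IDQ you'd design the exception table schema and routing inside your mappings.

```python
def has_customer_id(record: dict) -> bool:
    return bool(record.get("customer_id"))


def postal_code_ok(record: dict) -> bool:
    code = str(record.get("postal_code") or "")
    return len(code) == 5 and code.isdigit()


# Hypothetical rule registry: (error code, error description, check function).
RULES = [
    ("E001", "Missing customer id", has_customer_id),
    ("E002", "Postal code is not 5 digits", postal_code_ok),
]


def route(records, rules):
    """Send clean records one way and one exception row per violated rule the other."""
    clean, exceptions = [], []
    for rec in records:
        failed = [(code, desc) for code, desc, check in rules if not check(rec)]
        if not failed:
            clean.append(rec)
        for code, desc in failed:
            exceptions.append(
                {"record": rec, "error_code": code, "error_description": desc}
            )
    return clean, exceptions


records = [
    {"customer_id": "C1", "postal_code": "10001"},
    {"customer_id": "", "postal_code": "1000"},
    {"customer_id": "C3", "postal_code": "ABCDE"},
]
clean, exceptions = route(records, RULES)
print(len(clean), [e["error_code"] for e in exceptions])
```

Writing one exception row per violated rule (rather than one per record) is a design choice; it makes counting failures by rule trivial, at the cost of a bigger exception table.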

Scorecard creation and configuration for data quality measurement ties back to those data quality dimensions we talked about earlier. You define scorecard dimensions and metrics, set up threshold-based alerting, create data quality dashboards for visualization. Monitoring data quality trends over time using scorecards is how you prove ROI to management. Without good scorecards, it's tough to demonstrate the value of your DQ work.
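Under the hood, a scorecard metric is just "what percentage of values passed, and which band does that land in?" Here's a hedged Python sketch. The 95 and 80 cutoffs, the band names, and the earlier scores in `history` are assumptions for illustration, not IDQ defaults.

```python
def metric_score(values, is_valid):
    """Percentage of values that pass the check (0.0 for an empty column)."""
    if not values:
        return 0.0
    return 100.0 * sum(1 for v in values if is_valid(v)) / len(values)


def status(score, good=95.0, acceptable=80.0):
    """Map a score to a band; both cutoffs are hypothetical."""
    if score >= good:
        return "good"
    if score >= acceptable:
        return "acceptable"
    return "unacceptable"


# Made-up email column: one missing value out of ten.
emails = ["a@x.com", "b@x.com", None, "c@x.com", "d@x.com",
          "e@x.com", "f@x.com", "g@x.com", "h@x.com", "i@x.com"]
completeness = metric_score(emails, lambda v: v is not None)
print(f"email completeness: {completeness:.1f}% ({status(completeness)})")

# Trend over time: earlier scores are invented, the last one is the run above.
history = [72.0, 81.5, completeness]
improving = all(later >= earlier for earlier, later in zip(history, history[1:]))
print("trend improving:", improving)
```

The trend line is the part management cares about, since a single score says little but three rising ones tell a story.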

Integration with Informatica Data Director for exception management, workflow monitoring and logging, operational dashboards for tracking job execution, session logs and debugging are operational topics that appear frequently. Data lineage and impact analysis for data quality processes help you understand dependencies.

Performance and deployment considerations

Performance optimization techniques for data quality mappings include partitioning strategies for parallel processing. Pushdown optimization to use source database capabilities. Caching strategies for lookup and reference data. Techniques that can make the difference between a mapping that runs in minutes versus hours on large datasets. Resource configuration for Data Integration Service affects how jobs run.

Deployment best practices from development to production. Import and export of data quality objects. Parameterization for environment-specific configurations. Version control integration. Change management. All part of the deployment domain. Testing strategies for data quality solutions and production monitoring and maintenance considerations round out the exam objectives.
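Parameterization is the piece that keeps you from editing objects by hand in every environment. The idea, sketched in Python below, is defaults plus per-environment overrides. The parameter names, connection names, and values are all invented, and IDQ handles this through its own parameter mechanisms rather than a dictionary.

```python
# Hypothetical defaults shared by every environment.
DEFAULTS = {"match_threshold": 0.85, "reject_table": "DQ_EXCEPTIONS"}

# Hypothetical per-environment overrides.
ENVIRONMENTS = {
    "dev": {"source_connection": "DEV_CRM", "match_threshold": 0.80},
    "test": {"source_connection": "TEST_CRM"},
    "prod": {"source_connection": "PROD_CRM"},
}


def resolve_parameters(env: str) -> dict:
    """Merge defaults with the overrides for one environment."""
    if env not in ENVIRONMENTS:
        raise KeyError(f"unknown environment: {env}")
    return {**DEFAULTS, **ENVIRONMENTS[env]}


for env in ("dev", "prod"):
    print(env, resolve_parameters(env))
```

The logic stays identical across dev, test, and prod; only the resolved values change, which is what makes promotions between environments boring in the good way.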

If you're serious about passing, check out the Data Quality 10: Developer Specialist Exam practice materials because hands-on practice with realistic questions makes a huge difference. The exam objectives are thorough, but focusing your study time proportional to domain weights is the smart approach. You might also look at related certifications like Data Quality 9.x Developer Specialist to understand version differences or PowerCenter Data Integration 9.x: Developer Specialist if you're working with both products.

The exam isn't easy but it's fair. It tests what you actually need to know to build production data quality solutions.

Prerequisites and Recommended Experience for Data Quality 10 Developer Specialist

The Informatica Data Quality 10 Developer Specialist exam is one of those certs where the name sounds narrow, but honestly, the expectations are broad. You're getting tested on how you build, debug, and ship IDQ work. Not how well you can recite definitions.

Short version? Build stuff first. Then sit the exam.

What this certification really proves

This credential maps to the day job of an Informatica IDQ developer: think data profiling and cleansing in Informatica, designing IDQ mappings, rules, and scorecards, and pushing projects through environments without breaking everything at 2 a.m. It also quietly checks if you understand how Informatica's platform pieces fit together, because nobody builds Data Quality in a vacuum.

Roles it lines up with: IDQ developer, data quality engineer, data integration developer who got handed 'clean the customer data' and now owns it forever.

Analysts sometimes take it too. That's fine. But the exam isn't written for slide-deck people. I mean, it really assumes you've been in the trenches.

Official prerequisites, and what that means in real life

Here's the funny part about Data Quality 10 Developer Specialist prerequisites. Informatica does publish recommended prep and training, and they describe the knowledge you should have, but there's typically no hard gate blocking you from registering.

No mandatory prerequisites.

Seriously. The exam's open to anyone willing to pay the fee and attempt the certification, so when someone asks 'am I eligible,' the answer's basically yes, if your payment method works.

That said, 'eligible' and 'ready' aren't the same thing. This exam rewards muscle memory inside the Informatica Developer tool (IDQ), and that only comes from building real solutions, failing, fixing, redeploying, and repeating until it feels normal.

Hands-on time that actually moves the needle

If you want a realistic baseline, plan for 6 to 12 months of hands-on work with Informatica Data Quality 10 Developer. Could a sharp person do it faster? Sure. But most people who pass comfortably have spent months doing daily IDQ work, not just watching training videos on weekends.

Practical experience beats theoretical knowledge here. You can memorize what a Scorecard is (cool), but the exam'll still hit you with 'what should you do when the rule fires exceptions, the mapping fails in test, and the business wants a metric by Monday,' and that's not something you learn from a glossary.

Three short truths. Practice matters. Projects matter more. Theory's secondary.

Recommended training (and why it helps)

Informatica's recommended path usually points at the Informatica Data Quality 10.x Developer course, either instructor-led or self-paced. Official duration's typically 4 to 5 days for the full developer training. Look, that's not because it takes five days to explain Parser and Match. It's because you need time in labs to build end-to-end flows and trip over the same problems you'll see at work.

The good news? Training topics typically align pretty cleanly with Informatica Data Quality 10 exam objectives: architecture and components, profiling, rule development, transformations (Parser, Standardizer, Match, Consolidation, Expression), exception handling, scorecards, deployment, and basic performance considerations. If you like structured learning, it's a solid way to cover the surface area without missing a domain.

But.

Training isn't magic. If you take the course and then don't touch IDQ for a month, the exam'll feel harder than it should. There's also the psychological angle people underestimate. You can know a tool backward and forward, but exam pressure can make you second-guess decisions you'd normally make instantly. I've watched people who build profiling flows daily freeze up on a straightforward question just because the clock's ticking and the wording's slightly unfamiliar.

The legit alternative: self-study plus a lab

If your employer won't pay for training, self-study's absolutely workable. Documentation, tutorials, and hands-on practice can get you there, as long as you're disciplined about building real artifacts. You should be comfortable reading product docs and release notes, not just blog posts, because version-specific behavior matters and version 10.x has its own quirks versus earlier releases.

You'll also want access to a lab environment.

A real one.

Not 'I watched someone else click around.' Options I see people use:

- employer-provided IDQ environment (best, because it's closer to reality)

- Informatica trial if available in your region and licensing situation

- cloud-based lab subscriptions (good when you just need something now)

Foundation skills you should already have

IDQ isn't a beginner tool, even if the UI looks friendly, and frankly the learning curve gets steep fast if you're missing foundational data concepts.

You should understand data warehousing basics, ETL concepts, and data integration principles, because IDQ flows behave like integration pipelines with data quality logic layered in. SQL proficiency matters too. You don't need to be a query wizard, but you must be able to write and read SQL for data analysis and validation, especially when you're verifying profiling results or checking match outcomes.
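Here's the kind of validation SQL that paragraph has in mind, wrapped in Python's built-in `sqlite3` module so it runs anywhere. It's a sketch under assumptions: the `customer` table, its columns, and the sample rows are invented, and your real source will be Oracle or SQL Server rather than SQLite, so function names may differ slightly. The point is cross-checking a profile's null count, distinct count, and duplicate values by hand.

```python
import sqlite3

# In-memory database with a made-up customer table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO customer (id, email) VALUES (?, ?)",
    [(1, "a@x.com"), (2, "a@x.com"), (3, None), (4, ""), (5, "b@y.org")],
)

# Row count, missing values (NULL or blank), and distinct non-blank values.
total, missing, distinct_values = conn.execute(
    """
    SELECT COUNT(*),
           SUM(CASE WHEN email IS NULL OR TRIM(email) = '' THEN 1 ELSE 0 END),
           COUNT(DISTINCT NULLIF(TRIM(email), ''))
    FROM customer
    """
).fetchone()

# Values that appear more than once: candidates for duplicate review.
duplicates = conn.execute(
    """
    SELECT email, COUNT(*) AS n
    FROM customer
    WHERE email IS NOT NULL AND TRIM(email) <> ''
    GROUP BY email
    HAVING COUNT(*) > 1
    ORDER BY email
    """
).fetchall()

print(total, missing, distinct_values, duplicates)
```

If the profile says 40% nulls and your query says 12%, one of them is treating blanks differently. That's exactly the kind of discrepancy you want to be able to chase down.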

Data modeling knowledge helps a lot: relational design, normalization, entity relationships, keys, and why duplicates happen in the first place. Also know core data quality concepts like accuracy, completeness, consistency, validity, and timeliness. Not as buzzwords. As things you can measure and argue about with the business.

You should have basic familiarity with Informatica platform architecture and services, plus basic Windows or Linux navigation for installs, services, logs, and configuration files. And yes, database connectivity experience matters: connecting to Oracle, SQL Server, DB2, Teradata, and dealing with drivers, permissions, schemas, and 'why does it work in dev but not test.'

Projects to complete before you schedule the exam

I'm opinionated here. Do at least 2 to 3 complete data quality projects before attempting the exam.

Not half projects.

Complete. Requirements to deployment.

Project types that map well to exam prep:

- customer data cleansing (names, phones, emails, survivorship decisions)

- address standardization using reference data like postal tables

- duplicate detection and merge using match rules and consolidation

Make sure you've touched all the major transformations: Parser, Standardizer, Match, Consolidation, Expression. You should have rule development experience too, including validation rules, standardization rules, and match rules (fragments, build rules, break rules, fix rules).

Profiling's non-negotiable.

You need practice conducting profiling on real datasets and interpreting results, not just running a profile and screenshotting it. Same with scorecards: build and configure them so they actually measure something meaningful, then wire exceptions into an exception handling workflow, because data quality validation and exception handling is where many implementations get messy.

Deployment experience matters more than people think. Moving from dev to test to prod exposes parameter files, connections, permissions, and scheduling realities, and troubleshooting skills come from this: debugging failed mappings, investigating data quality issues, and doing basic performance tuning when a match job explodes in runtime.

Also, don't ignore business requirement analysis. The exam leans practical, and translating 'we need better customer quality' into technical rules, thresholds, and exception processes is a core IDQ developer skill. Reference data management shows up here too, like postal databases, standardization tables, and lookup datasets that drive consistent outcomes.

Integration experience helps if you've connected IDQ with PowerCenter, MDM, or other Informatica components. Not always required for every question, but it's part of how IDQ's used in real orgs.

Practice datasets and lab prep

Use public datasets so you can practice without waiting on approvals. Customer and address-like datasets are perfect, plus product catalogs for standardization.

You want messy, inconsistent data. That's the point.

Time investment's usually 40 to 80 hours of study and hands-on practice depending on where you're starting. If you already build IDQ daily, you're closer to the low end. If you're new and relying on self-study, you're closer to the high end, and you should budget extra time for lab setup pains.

A study group or mentor helps a lot. Not mandatory, but having an experienced IDQ developer sanity-check your match strategy or explain why your exceptions design'll annoy ops is worth its weight in gold. Participating in the Informatica community, forums, and user groups also helps, mostly because you learn what breaks in the real world.

Gap analysis and readiness checks

Before you schedule, do a gap analysis against the published exam objectives and your own comfort level. If you can't explain, build, and troubleshoot the core flows, you're not ready, even if you've read every Informatica Data Quality 10 study guide you could find.

If you like practice-first prep, I'm fine with using a targeted question pack as a readiness check. Just don't treat it like a cheat code. The Data-Quality-10-Developer-Specialist Practice Exam Questions Pack can help you validate coverage and timing, and it's cheap enough at $36.99 that a lot of people use it after they've built labs and want to see where they're weak. I mean, use it like a mirror, not a crutch, and come back to it at the final-week stage.

Quick notes people always ask about

What's the Informatica Data Quality 10 exam cost? It varies by region and delivery method, and Informatica changes pricing, so check the current listing when you're ready to register. What's the Data Quality 10 Developer Specialist passing score? Informatica typically reports scores scaled by exam form, so 'passing' is a threshold, not a fixed percent you can game.

How hard is it? Intermediate if you've built real IDQ solutions, painful if you've only studied theory.

Also, plan your certification path. Some folks pair this with an Administrator cert either alongside or after, especially if their job bleeds into installs, services, and environment support. And keep an eye on the Informatica certification renewal policy, because versioned products change and Informatica sometimes updates requirements when new versions or new exams drop.

Last thing.

Career readiness. If you pass the exam but can't deliver a production-ready data quality workflow with profiling, rules, scorecards, exceptions, and deployment discipline, hiring managers'll figure that out fast.

Certification's nice. Competence pays.

How Hard Is the Informatica Data Quality 10 Developer Specialist Exam?

The Informatica Data Quality 10 Developer Specialist exam sits squarely in the intermediate to advanced certification territory, and honestly, it's not something you can breeze through with just theoretical knowledge. I've seen plenty of developers who thought they could wing it after reading documentation get absolutely humbled by this exam. We're talking people with solid backgrounds who just assumed their general experience would carry them through without putting in the focused work. It's designed to test whether you can actually build and troubleshoot data quality solutions, not just recite definitions.

What separates this from basic certifications

Not your entry-level cert.

The Data Quality 10 Developer Specialist certification demands that you understand how data profiling, cleansing, standardization, and matching work together in real-world scenarios, not just how they're defined in a glossary or described in marketing materials. You're expected to know the Informatica Developer tool inside and out, including how to design IDQ mappings, configure transformation logic, and debug when things go sideways. Compared to something like the PowerCenter Data Integration 9.x: Developer Specialist, this exam is more specialized and focuses heavily on the details of data quality workflows rather than general ETL concepts.

The exam targets developers who've actually built data quality solutions. Not people who've watched a few videos.

Breaking down the actual difficulty

Unofficial pass rate estimates hover around 60-70% for first-time test takers, which tells you something right there. That means roughly one in three people walk out without passing, and these aren't necessarily unprepared candidates we're talking about. The folks who fail usually fall into predictable categories: they underestimated the hands-on component, relied too heavily on brain dumps instead of actual practice, or thought their general Informatica experience would carry them through.

Look, if you're coming from a PowerCenter background? You'll recognize some concepts. But Data Quality transformations like Parser, Standardizer, Match, and Consolidation have their own quirks and configuration parameters that you absolutely need to master. Surface-level understanding won't cut it when you're staring at a scenario-based question that asks you to identify why a matching strategy is producing duplicate records or how to optimize a complex cleansing workflow.

Why people struggle with this thing

The exam tests practical application in ways that catch people off guard. You'll get multi-step problems that require understanding the entire data quality validation and exception handling workflow from profiling through final output. The thing is, these questions don't just test one concept at a time, they expect you to connect multiple pieces of knowledge at once. One question might present a business requirement, show you a partially configured mapping, and ask what's missing or misconfigured. Another might describe performance issues and expect you to know which tuning technique applies.

Troubleshooting questions? Brutal.

You need to identify root causes of mapping failures, explain why results don't match expectations, or diagnose performance bottlenecks. These aren't simple "what does this button do" questions. They require you to think through the logic of how IDQ mappings, rules, and scorecards interact.

The integration scenarios throw another wrench in the works, testing whether you understand how Data Quality integrates with other Informatica products and external systems. And configuration details? You better know specific parameter settings, not just "yeah, there's a setting for that somewhere."

I once spent an entire afternoon chasing down why a Parser transformation was dropping records, only to discover it was a reference table encoding issue. That kind of troubleshooting shows up on the exam constantly.

The knowledge depth you actually need

Knowing transformation types by name means nothing. You need to understand when to use a Parser versus a Standardizer, how Match transformations calculate scores, and how Consolidation strategies affect your final output. I mean, the exam will present you with business requirements and expect you to architect the solution, not just identify components from a multiple-choice list.
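To make "how a match score gets calculated" concrete, here's a generic weighted-similarity sketch in Python. Big caveat: this is not the algorithm the Match transformation uses. It swaps in `difflib.SequenceMatcher` from the standard library as a stand-in comparison function, and the field weights and the 0.85 threshold are assumptions picked for the example.

```python
from difflib import SequenceMatcher
from typing import Dict

Record = Dict[str, str]

# Assumed field weights; a real design would tune these against sample data.
WEIGHTS: Dict[str, float] = {"name": 0.5, "address": 0.3, "city": 0.2}


def similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] between two strings, ignoring case and outer spaces."""
    a, b = a.strip().lower(), b.strip().lower()
    if not a or not b:
        # Treat a missing value as no evidence of a match.
        return 0.0
    return SequenceMatcher(None, a, b).ratio()


def match_score(left: Record, right: Record) -> float:
    """Weighted average of per-field similarities."""
    total_weight = sum(WEIGHTS.values())
    weighted = sum(
        weight * similarity(left.get(field, ""), right.get(field, ""))
        for field, weight in WEIGHTS.items()
    )
    return weighted / total_weight


def is_match(left: Record, right: Record, threshold: float = 0.85) -> bool:
    """Call two records a match when the weighted score reaches the threshold."""
    return match_score(left, right) >= threshold


a = {"name": "John Smith", "address": "12 Main St", "city": "Austin"}
b = {"name": "Jon Smith", "address": "12 Main St", "city": "Austin"}
c = {"name": "zzz", "address": "qqq", "city": "www"}

print(round(match_score(a, b), 3), is_match(a, b), is_match(a, c))
```

The design choice worth noticing is the threshold. Lower it and you catch more true duplicates but also more false positives, which is the tradeoff scenario questions like to probe.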

Performance optimization questions require understanding when and how to apply various techniques. Should you use partitioning? Is this a pushdown optimization scenario? What about caching strategies? The exam doesn't just ask if you know these concepts exist. It wants to know if you can apply them correctly given specific constraints.

Data profiling and cleansing in Informatica involves understanding reference data, creating custom rules, and configuring scorecards to measure quality metrics. You'll need hands-on experience with the Developer tool workflows, not just reading about them. I'm talking actual time spent building mappings, running profiles, analyzing results, and iterating on your approach.

Comparing it to related certifications

The Data Quality 9.x Developer Specialist covers similar territory but with version differences that matter. Honestly, don't make the mistake of thinking version numbers are just cosmetic updates. If you're studying for version 10, don't assume the 9.x materials will be sufficient. There are feature updates and interface changes you need to know. The Data Quality 10 Developer Specialist exam is definitely more specialized than general PowerCenter certifications, requiring deeper knowledge in a narrower domain.

Not gonna lie? Some people find this specialization easier because they can focus their study efforts. Easier to go deep on one thing than shallow on ten. Others find it harder precisely because you can't rely on broad Informatica knowledge to compensate for gaps in specific areas.

Study preparation reality check

Your background dramatically influences how hard this exam feels. Like, the difference between someone with production experience and someone with just classroom training is night and day. If you've built production IDQ solutions for six months or more, you're in decent shape. You'll still need to study the official Informatica Data Quality 10 exam objectives and fill knowledge gaps, but the scenario questions won't feel alien.

If you're transitioning from other Informatica products or data quality tools, plan for serious study time. You'll need access to the Developer tool for hands-on practice. Reading alone won't prepare you for questions about specific dialog boxes, workflow steps, or error messages. The Data Quality 10: Developer Specialist Exam resources can help, but they supplement hands-on work rather than replace it.

Factor in at least 40-60 hours of focused preparation if you're experienced, potentially double that if you're newer to data quality concepts. The Informatica Data Quality 10 study guide materials and practice datasets should become your constant companions. Work through complete scenarios: profile data, identify quality issues, design cleansing rules, configure matching strategies, validate results, tune performance.

The scenario-based question trap

Here's what trips people up most. You'll get questions that describe a business problem, show you partial implementation, and ask what's wrong or what's needed next, which requires understanding the complete workflow rather than isolated facts. You might need to mentally trace data through Parser, Standardizer, Match, and Consolidation transformations while considering how exception handling routes bad records and how scorecards measure the results.
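If mentally tracing records through a flow is hard to picture, this tiny Python sketch mimics the shape of it: parse a field, standardize another against a reference table, and route anything that fails parsing to an exception list with a reason. It's a simplification under loud assumptions. The function names, the two-token name rule, and the `SUFFIXES` table are invented, and real Parser and Standardizer transformations are configured in the Developer tool, not coded like this.

```python
from typing import Dict, List, Optional, Tuple

Record = Dict[str, str]

# Stand-in for a reference table of street-suffix abbreviations (assumed values).
SUFFIXES: Dict[str, str] = {"st": "Street", "st.": "Street", "ave": "Avenue", "rd": "Road"}


def parse_name(full_name: str) -> Optional[Dict[str, str]]:
    """Split 'First Last' into parts; return None when the shape is unexpected."""
    parts = full_name.split()
    if len(parts) != 2:
        return None
    return {"first": parts[0], "last": parts[1]}


def standardize_address(address: str) -> str:
    """Replace known suffix abbreviations with their standard form."""
    return " ".join(SUFFIXES.get(token.lower(), token) for token in address.split())


def run(records: List[Record]) -> Tuple[List[Record], List[Record]]:
    """Return (clean records, exception records with a reason attached)."""
    clean: List[Record] = []
    exceptions: List[Record] = []
    for rec in records:
        name = parse_name(rec["full_name"])
        if name is None:
            exceptions.append({**rec, "reason": "unparsed name"})
            continue
        clean.append({"id": rec["id"], **name,
                      "address": standardize_address(rec["address"])})
    return clean, exceptions


clean, exceptions = run([
    {"id": "1", "full_name": "Ann Lee", "address": "12 Main st"},
    {"id": "2", "full_name": "Prince", "address": "1 Park Ave"},
])
print(clean)
print(exceptions)
```

Record 1 comes out parsed and standardized. Record 2 never reaches the standardize step because it was routed out first, and that's the kind of ordering detail a "what's missing from this mapping" question hinges on.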

Some questions present performance problems and expect you to diagnose root causes. Is it a data skew issue? Poor partitioning strategy? Inefficient transformation logic? The exam wants to know if you can think like someone who actually maintains these systems.
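One quick way to test the skew theory on real data is to count rows per key and see whether one value dominates. The Python sketch below does that for any grouping or partition key. The `skew_report` helper, its output fields, and the sample state codes are hypothetical, and what counts as "too skewed" depends on your data volume and environment.

```python
from collections import Counter
from typing import Dict, Iterable, Union

Number = Union[int, float]


def skew_report(keys: Iterable[str]) -> Dict[str, Union[str, Number, None]]:
    """Summarize how unevenly rows are spread across key values."""
    counts = Counter(keys)
    total = sum(counts.values())
    if total == 0:
        return {"rows": 0, "distinct_keys": 0, "largest_key": None,
                "largest_share": 0.0, "skew_ratio": 0.0}
    largest_key, largest = counts.most_common(1)[0]
    mean_per_key = total / len(counts)
    return {
        "rows": total,
        "distinct_keys": len(counts),
        "largest_key": largest_key,
        "largest_share": largest / total,      # fraction of rows in the biggest group
        "skew_ratio": largest / mean_per_key,  # 1.0 means perfectly even
    }


# Assumed sample: a state column where one value dominates.
report = skew_report(["TX"] * 8 + ["CA", "NY"])
print(report)
```

Here one key holds 80% of the rows, so a job partitioned or grouped on that column would pile most of the work onto a single group no matter how many partitions you configure.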

Bottom line on difficulty

The Informatica Data Quality 10 Developer Specialist exam deserves respect. It's not impossibly hard, but it's not easy either. It sits in that zone where your success depends heavily on hands-on experience and thorough preparation across all exam objectives, not luck or test-taking tricks. Theoretical knowledge gets you maybe 40% of the way there. The rest comes from actually building solutions, breaking them, fixing them, and understanding why things work the way they do.

Approach it as an intermediate to advanced certification that validates real capability, and prepare accordingly.

Conclusion

Wrapping up your prep work

Look, you can't wing this thing with YouTube videos and skimmed documentation. The Informatica Data Quality 10 Developer Specialist exam? It's serious business. I mean, it really digs into whether you actually understand data profiling mechanics, how mappings interact with reference tables, and (honestly, this trips people up) when to use which transformation for cleansing versus standardization. They're not interchangeable even though plenty of folks treat them that way. The passing score is commonly quoted as 70%, though Informatica may scale it by exam form. Sounds reasonable, right? Until you're staring at scenario questions about exception handling workflows that branch in three directions and you're second-guessing everything.

Most people spend 4-6 weeks preparing. That's with hands-on IDQ experience. Without it? You're looking at 8+ weeks, honestly. The exam objectives cover everything from basic data quality concepts to performance tuning strategies, and the thing is, Informatica doesn't mess around with surface-level questions. You need to know why a scorecard configuration fails. Not just that it can.

Exam cost runs about $150 USD, though it varies by region and delivery method, so check the current listing before you register. Not cheap, but also not outrageous compared to other vendor certs I guess. Retakes cost the same though, so there's real incentive to pass first time. What helps is the Informatica certification renewal policy. It gives you a decent window before you need to recertify, usually tied to major version releases.

Your next move matters

Here's what I'd do starting today.

Work through actual IDQ mappings in the Developer tool. Not just reading about them. Build rules that handle messy address data, the kind of stuff you'd actually encounter in production. Set up scorecards that monitor real quality metrics. The hands-on stuff sticks way better than memorizing documentation, and I can't stress that enough because I've seen people fail who knew theory inside-out but couldn't work through the actual interface when it counted. My buddy spent three weeks on theory, bombed his first attempt, then rebuilt his whole study approach around labs. Passed second time with an 84%. Right, so practical application matters more than you think.

For practice questions that actually mirror what you'll see on test day, the Data Quality 10 Developer Specialist Practice Exam Questions Pack at /informatica-dumps/data-quality-10-developer-specialist/ gives you scenario-based questions that test applied knowledge, not just definitions. I'm not gonna lie. Practice tests that focus on troubleshooting workflows and configuration decisions make a huge difference when you're trying to hit that passing threshold.

The certification validates real skills. Employers want this. Data quality validation, exception handling in production environments, profiling strategies that scale. These aren't abstract concepts floating around in white papers. Get certified and you're proving you can implement IDQ solutions that improve data integrity across systems. That's valuable.

Now go put in the work.